
Jack Ganssle's Blog

This is Jack's outlet for thoughts about designing and programming embedded systems. It's a complement to my bi-weekly newsletter The Embedded Muse.

Contact me at jack@ganssle.com. I'm an old-timer engineer who still finds the field endlessly fascinating (bio).


On N-Version Programming

August 8, 2018

The conventional wisdom is that a very effective way to get higher-reliability software is to have independent teams develop two or more versions of the project from a common set of requirements. All versions run together, and a voting algorithm or other means is used to shut down a faulty program and let the other(s) continue. The idea is that, while every version will have errors, it is unlikely that two will fail in the same way at the same time.
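As a rough illustration of the voting idea, here's a minimal C sketch of a majority voter over the outputs of N versions. The names `nvp_vote` and `vote_result`, the tolerance parameter, and the dissenter bitmask are all hypothetical, invented for this sketch rather than taken from any real system.

```c
#include <math.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Outcome of one voting round (a hypothetical structure for this sketch). */
typedef struct {
    bool     has_majority; /* true if more than half of the versions agree */
    double   value;        /* agreed value; meaningful only if has_majority */
    uint32_t dissenters;   /* bit i set if version i disagreed (or gave NaN) */
} vote_result;

/* Majority vote over the outputs of n independently written versions.
   Two outputs "agree" if they differ by at most tol, since separately
   coded floating-point calculations rarely match bit for bit.
   n is limited to 32 by the width of the dissenters mask. */
static vote_result nvp_vote(const double *out, size_t n, double tol)
{
    vote_result r = { false, 0.0, 0 };
    if (n == 0 || n > 32)
        return r;                             /* refuse to vote: no majority */
    for (size_t i = 0; i < n; i++) {
        size_t agree = 0;
        for (size_t j = 0; j < n; j++)
            if (fabs(out[i] - out[j]) <= tol) /* false for NaN, so NaN never agrees */
                agree++;
        if (2 * agree > n) {                  /* a strict majority matches out[i] */
            r.has_majority = true;
            r.value = out[i];
            for (size_t j = 0; j < n; j++)
                if (!(fabs(out[i] - out[j]) <= tol))
                    r.dissenters |= (uint32_t)1 << j;
            return r;
        }
    }
    return r;  /* no majority: the caller must drive the system to a safe state */
}
```

The no-majority path matters as much as the happy path: the system needs a defined safe state for when the versions can't agree.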

Sounds promising, doesn't it? But at least one study suggests otherwise.

John Knight and Nancy Leveson ran an experiment, published in 1986, in which 27 programmers developed 27 versions of a program from a common set of requirements. Each version was subjected to one million automated tests. Surprisingly, many failed in exactly the same way on the same input data.

Their conclusion (with many caveats): "For the particular problem that was programmed for this experiment, we conclude that the assumption of independence of errors that is fundamental to the analysis of N-version programming does not hold. Using a probabilistic model based on independence, our results indicate that the model has to be rejected at the 99% confidence level."

Their caveats are many, but the results are striking. The faults that were correlated between versions varied widely, but two themes were common: a lack of deep understanding of the finite precision of floating-point numbers, and a surprising weakness with geometry (the problem had to do with radar images from missiles).

These programs were small, on the order of a thousand lines of code. One would think that such a small code base wouldn't be hard to get right.

The subjects were not required to use any particular software engineering approach, and one assumes the usual "just code it up" attitude dominated.

Other researchers have also questioned the efficacy of N-version code. In addition to the results of the Knight/Leveson experiments, many feel that a fundamental flaw is that these systems of systems are usually built from a common set of requirements. As a problem grows in scope, it becomes very difficult to define the requirements perfectly. Perfect code built to imperfect requirements means every version will experience the same failure.
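A toy C program makes the point concrete. Three hypothetical versions each faithfully implement a spec that is wrong for a few inputs, and each also has a private bug of its own. A two-out-of-three vote masks every private bug but passes the spec error straight through, because all three versions agree on the same wrong answer. Everything here (the functions, the "saturate at 1980" spec mistake, the bug patterns) is invented purely for illustration.

```c
#include <stdbool.h>

/* What the system really needs: twice the input. */
static int truth(int x) { return 2 * x; }

/* The flawed written spec: it wrongly says to saturate at 1980, so a
   faithful implementation is wrong for every x > 990. spec() stands in
   for each team's own correct rendering of that requirement. */
static int spec(int x) { return x > 990 ? 1980 : 2 * x; }

/* Three "independent" versions: each follows the spec, plus one private bug. */
static int version_a(int x) { return x % 97 == 0 ? -1 : spec(x); }
static int version_b(int x) { return x % 89 == 0 ? -2 : spec(x); }
static int version_c(int x) { return x % 83 == 0 ? -3 : spec(x); }

/* Two-out-of-three voter; *ok is false when no two versions agree. */
static int vote3(int a, int b, int c, bool *ok)
{
    *ok = true;
    if (a == b || a == c) return a;
    if (b == c) return b;
    *ok = false;
    return 0;
}

/* Run inputs 1..n, counting wrong answers from version A alone and from the vote. */
static void run_trial(int n, int *wrong_a, int *wrong_voted, int *no_majority)
{
    *wrong_a = *wrong_voted = *no_majority = 0;
    for (int x = 1; x <= n; x++) {
        bool ok;
        int v = vote3(version_a(x), version_b(x), version_c(x), &ok);
        if (version_a(x) != truth(x)) (*wrong_a)++;
        if (!ok) (*no_majority)++;
        else if (v != truth(x)) (*wrong_voted)++;
    }
}
```

Over inputs 1 to 1,000, version A alone is wrong 20 times. The vote is wrong 10 times, and every one of those is the spec error; no amount of redundancy built from the same requirements removes them.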

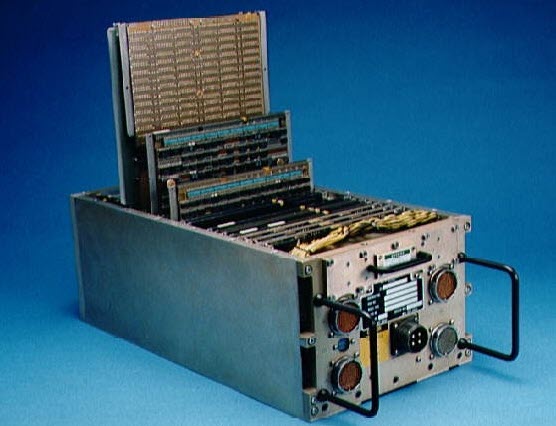

The Space Shuttle famously used N-version programming. Four computers ran identical code and watched each other for problems. A fifth had a completely different code base, built from different requirements. And more interestingly, those specs were much simpler than those for the four main machines. The fifth could only make the Shuttle do the bare minimum needed to get into orbit, maintain orbit, and land. That's a very appealing approach as simpler systems tend to be more correct.

One of the Shuttle's five IBM AP-101S computers
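The architecture can be caricatured in a few lines of C: four primaries running the same code are voted against each other, and a dissimilar backup is used when they lose their majority. This is a deliberately simplified, hypothetical sketch; it is not a description of how the Shuttle's flight software actually arbitrated between its computers, and `channel_out`, `select_command`, and the `healthy` flag are invented names.

```c
#include <stdbool.h>
#include <stddef.h>

#define N_PRIMARY 4

/* One output per channel, plus the channel's own self-check status
   (a hypothetical interface for this sketch). */
typedef struct {
    int  cmd;     /* e.g. an actuator command */
    bool healthy; /* false if the channel's self-tests failed */
} channel_out;

typedef enum { SRC_PRIMARY, SRC_BACKUP } cmd_source;

/* Use the primaries' command if a strict majority of healthy primaries
   agree on it (at least 3 of 4); otherwise fall back to the backup,
   whose code was written separately from simpler requirements. */
static int select_command(const channel_out prim[N_PRIMARY],
                          channel_out backup, cmd_source *src)
{
    for (size_t i = 0; i < N_PRIMARY; i++) {
        if (!prim[i].healthy)
            continue;
        size_t agree = 0;
        for (size_t j = 0; j < N_PRIMARY; j++)
            if (prim[j].healthy && prim[j].cmd == prim[i].cmd)
                agree++;
        if (2 * agree > N_PRIMARY) {
            *src = SRC_PRIMARY;
            return prim[i].cmd;
        }
    }
    *src = SRC_BACKUP;
    return backup.cmd;
}
```

Note the limit: if all four primaries share a bug and agree on the same wrong command, this voter happily uses it. The dissimilar backup helps only when a common-mode fault reveals itself, for instance by crashing the primaries or tripping their self-checks, or when a person decides to switch.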

Oh, and those requirements for the Shuttle? Capers Jones has published typical sizes of requirements documents, in pages. Extrapolating his figures to the Shuttle's 420,000 lines of code, a typical project would weigh in at around 4,000 pages, and that's if the requirements were 100% captured, which is almost unheard of. The Shuttle's software requirements document for the four main computers ran to 40,000 pages! The team spent a third of their schedule nailing those down.
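The contrast is easier to appreciate as code per page of requirements. Using only the figures quoted here (420,000 lines of code, roughly 4,000 pages typical, 40,000 pages actual):

$$
\frac{420{,}000\ \text{LOC}}{4{,}000\ \text{pages}} = 105\ \text{LOC/page},
\qquad
\frac{420{,}000\ \text{LOC}}{40{,}000\ \text{pages}} = 10.5\ \text{LOC/page}
$$

That's about one page of requirements for every ten lines of code, ten times the typical density.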

I do think N-version programming can be a boon to reliability. But if the specs aren't perfect, and if poor software engineering techniques are used, the benefits may fall short of what's hoped for.

Feel free to email me with comments.

