
You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email.

Editor's Notes

Tip for sending me email: My email filters are super aggressive and I no longer look at the spam mailbox. If you include the word "embedded" in the subject line your email will wend its weighty way to me.

Robert Dennard has passed away. He invented the DRAM and formulated what became known as Dennard Scaling, which showed that as transistor geometries shrink, power density stays roughly constant, so each new node brings gains in speed and power efficiency along with density. Dennard Scaling faded away at the 90 nm node, but was an important driver of the industry for three decades. Steve Leibson has a nice tribute to Robert Dennard here.

Quotes and Thoughts

A study of 4000 software projects found poor quality to be the number one cause of schedule problems: Jones, Capers. Assessment and Control of Software Risks. Englewood Cliffs, N.J.: Yourdon Press, 1994.

Tools and Tips

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

Jonathan Morton responded to the note in the last Muse that the Z80, after 47 years, is going away:

> A ~47 year run for a single Micro. Do any others do longer?

With the Z80 originally introduced in 1976, the obvious comparison is to its direct contemporary, the 6502 - which was first introduced the previous year, in 1975. In many ways, the 6502 was to the Motorola 6800 family what the Z80 was to the Intel 8080 family.

The first year's 6502 production run lacked a working ROR instruction, which was added to the 1976 model. Software workarounds for the missing instruction continued to be included (often as optional patches) in commercial and hobbyist software for some time afterwards, to cater to early adopters who were still using the 1975 model, which would have included owners of the Apple I.

The 6502 remains in production today. Like the Z80, it has long since moved to a CMOS manufacturing process and acquired a variety of backward-compatible enhancements. The current model is the W65C02S, manufactured on TSMC's 0.6 µm CMOS process. Officially specified to run at 14 MHz, it is often found by hobbyists to be capable of considerably more. Alternatively, because it is a fully static design, it can be run at very low speeds and reduced supply voltages if desired. It is possible to detect the execution of a "stop" instruction and gate off the clock signal for extra power savings.

Rumour has it that the 6502 remains a reasonably popular microprocessor for embedded applications that don't need a lot of computational horsepower, though often as a "hard core" rather than a discrete silicon product. For such applications, the W65C02S is useful for technical evaluation and prototyping. Very little external glue logic is required to interface to a JEDEC-standard SRAM and EEPROM; in extreme cases this can be reduced to a single 74HC00 device!

D. R. wrote:

Regarding the Z80 you said in the Embedded Muse 490: "A ~47 year run for a single Micro. Do any others do longer?"

I know you are not counting parts that cannot be bought new today when comparing the Z80's ~47 year longevity. But it is interesting (to me anyway) what the first microprocessor was: "The first chips that could be considered microprocessors were designed and manufactured in the late 1960s and early 1970s, including the MP944 used in the F-14's Central Air Data Computer.[1] Intel's 4004 of 1971 is widely regarded as the first commercial microprocessor.[2]"[3]

Also you said: "Bob found that an open-source version of the Z80 is coming out: https://www.eenewseurope.com/en/open-source-z80-to-replace-end-of-line-chip/"

It should be noted that the Z80 has been available as an Open-Source FPGA Core since Dec 12, 2014, almost ten years ago.[4]

References:

1. Laws, David (2018-09-20). "Who Invented the Microprocessor?". Computer History Museum. Retrieved 2024-01-19. https://computerhistory.org/blog/who-invented-the-microprocessor/

2. Augarten, Stan (1983). The Most Widely Used Computer on a Chip: The TMS1000 (Texas Instrument). New Haven and New York: Ticknor & Fields. ISBN 978-0-89919-195-9. Retrieved 2009-12-23. https://americanhistory.si.edu/smithsonian-chips

3. Microprocessor Chronology. https://en.wikipedia.org/wiki/Microprocessor_chronology

4. A-Z80 CPU, Open-Source FPGA Core in Verilog, License: LGPL. https://opencores.org/projects/a-z80

Steve Leibson wrote a nice history of the Z80.

Freebies and Discounts

James Grenning and Wingman Software are offering two pairs of seats for James' newly released self-paced Test-Driven Development for Embedded C/C++ training course. James is the author of Test-Driven Development for Embedded C and the founder of Wingman Software. His courses have trained thousands of embedded software developers in TDD and SOLID design principles around the world.

The introductory price is currently $500 per learner, and the course's value could be immeasurable to your career. Each prize consists of two seats so you and a colleague can learn together.

The course has over a dozen exercises, ninety short video presentations and demos, reference material, and linked content. The best way to determine if TDD can be valuable to you is to experience it. James' course is designed to guide you through an experience so you can decide if it can change your life. Find out more about the course at https://wingman-sw.com/training/self-paced-tdd.

James is also offering a consolation prize for all entries, $100 off the introductory price.

Enter via this link.

Software - The Root of All Evil

A reader who wishes to be anonymous wrote:

Recently, we've come into new corporate leadership in a large company. The new head of engineering has concluded, based on meetings with all engineering groups (mechanical, electrical, software, PM, manufacturing, etc.) that the software team is essentially the root of all evil in the company. Software is the reason that projects are delayed and run past schedule, and we "need to fix it". Now, we know that software/firmware is the bottom of the engineering pit here. Last to get requirements, but also first in line to catch all the blame. My question for the group here is, how common is this perception, and what have you done to combat this?

I'd appreciate readers' thoughts.

"The software team is the root of all evil" is sort of amusing when one considers that software is a huge part of what gives value to a product. Some years ago I read that the average car buyer bases 70% of the perceived value of a vehicle on the software. A modern EV has less "stuff" in it than a car with an engine, yet far more software than one would imagine.

Software is nearly always the choke point of a project. It's impossible to fully test the code until pretty much everything else is done, and that testing inevitably turns up problems.

When someone complained that "software is too expensive" Tom DeMarco replied "compared to what?" Imagine building any non-trivial embedded system without code - the costs stagger the imagination. When I hear this complaint I ask my interlocutor "what would it cost to make a machine that does Excel with no programmed components - just logic circuits?" That machine might be as big as the computer built by Deep Thought in the Hitchhiker's Guide to the Galaxy. The cost: well, it would make the tens of millions spent writing Excel look like a Blue Light Special.

Yet I feel there will always be this tension between software developers and management. The latter wants a product now. The former work under real constraints. And the inexorable pressures of capitalism mean that if we could write a million lines of code in a month, pretty soon management would need it in two weeks.

An Instrumentation Tool

Branko Premzel has an interesting, new (and free) tool:

I think it's pretty clear to you why code instrumentation is important. After an unsuccessful search for a code instrumentation solution suitable for hard-real-time control systems a few years ago, I started collecting ideas, experimenting and then developing a suitable solution in my spare time. It was a challenge for me to see how optimal a solution could be. It was only recently that it was ready for release and is now available on GitHub.

This new solution is useful in general, not just for the purposes for which I originally designed it. It is my contribution to the open source community.

The new toolkit is suitable for both large RTOS-based systems and small, resource-constrained systems. The toolkit is not a replacement for existing event-oriented solutions such as Segger SystemView or Tracealyzer, as it is based on a different concept. It provides minimally intrusive data logging and flexible data decoding. The code is optimized for 32-bit devices and executes very fast with very low stack usage. For example, only about 35 CPU cycles and 4 bytes of stack are required to log a simple event on a device with a Cortex-M4 core. By comparison, some other tools need nearly 200 CPU cycles and a maximum of about 150 to 510 bytes of stack (depending on logging mode and options) to log an event.

The solution is essentially a reentrant timestamped fprintf() function running on the host instead of the embedded system. There is no data encoding and no printf-style functions/strings in the embedded system. The memory footprint of the logging library is only 1 kB when all functions are used. The small number of logging functions (they are differentiated only by data size, not by data type) and the familiar printf syntax make the solution easy to use and learn. Any data type can be logged and printed (decoded on the host), including structures and bitfields. Print output can be redirected to any number of custom files, such as CSV or event log files. This allows the use of existing log and event viewers and graphing tools. The solution is ready to use for devices with an ARM Cortex-M core. Any debug probe, communication channel, or media can be used to transfer data to the host.
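To make the idea of moving the formatting to the host concrete, here is a minimal sketch in C. It is not the RTEdbg API: every name in it (log_event32, log_record_t, format_table, decode_record) is hypothetical, the record layout is an assumption, and unlike the real toolkit this sketch is not reentrant. It only illustrates the general technique of storing raw words plus a format ID on the target and applying the printf-style string later on the host.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdio.h>

/* Hypothetical names throughout; this is NOT the RTEdbg API. */

#define LOG_CAPACITY 64u            /* assumed size; must be a power of two */

typedef struct {
    uint32_t timestamp;             /* raw timer ticks */
    uint16_t format_id;             /* index into the host-side format table */
    uint16_t reserved;
    uint32_t data;                  /* raw 32-bit payload, stored unencoded */
} log_record_t;

static log_record_t log_buffer[LOG_CAPACITY];
static uint32_t log_head;           /* total number of records written */

/* Target side: store raw words only. No strings and no printf here.
 * A real implementation would reserve the slot atomically so the call
 * is reentrant; this sketch assumes a single context. */
static inline void log_event32(uint32_t now, uint16_t format_id, uint32_t data)
{
    log_record_t *r = &log_buffer[log_head & (LOG_CAPACITY - 1u)];
    r->timestamp = now;
    r->format_id = format_id;
    r->reserved = 0;
    r->data = data;
    log_head++;
}

/* Host side: the format strings live here, keyed by format_id. */
static const char *const format_table[] = {
    "motor speed = %u rpm",         /* id 0 */
    "adc raw = 0x%08X",             /* id 1 */
};

/* Host side: turn one raw record into a timestamped text line.
 * Returns the snprintf-style length, or a negative value on error. */
static int decode_record(const log_record_t *r, char *out, size_t out_size)
{
    size_t count = sizeof format_table / sizeof format_table[0];
    int n = snprintf(out, out_size, "[%lu] ", (unsigned long)r->timestamp);
    if (n < 0 || (size_t)n >= out_size)
        return n;
    if (r->format_id >= count)
        return n + snprintf(out + n, out_size - (size_t)n,
                            "unknown format id %u", (unsigned)r->format_id);
    return n + snprintf(out + n, out_size - (size_t)n,
                        format_table[r->format_id], (unsigned)r->data);
}
```

In this sketch the target would call something like log_event32(timer_ticks, 0, rpm), the buffer would be moved to the host over whatever channel is available, and the host would run decode_record() on each record. The target-side cost is a handful of stores, which is the property the description above emphasizes.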

Custom triggers, flexible on-the-fly filtering, and sorting of data into custom files during decoding help manage the flood of data from the embedded system. Built-in statistics allow quick identification of data and time extremes. The toolkit is ideal for reverse engineering poorly documented code and analyzing complex, difficult-to-reproduce problems. Its low stack requirements virtually eliminate the possibility of stack overflows when instrumenting code.

The new toolkit is intended for developers of various embedded systems from real-time control to IoT. See the RTEdbg toolkit presentation or go to github.com/RTEdbg/RTEdbg. The toolkit distribution file includes library, documentation, demo code for ARM Cortex-M, and setup for Keil MDK, IAR EWARM, MCUXpresso, and STM32CubeIDE. Comments on the new solution and documentation are welcome.

Failure of the Week

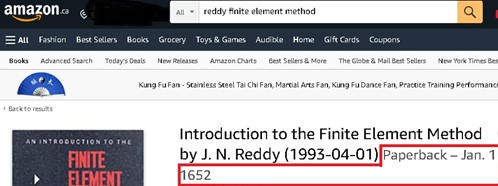

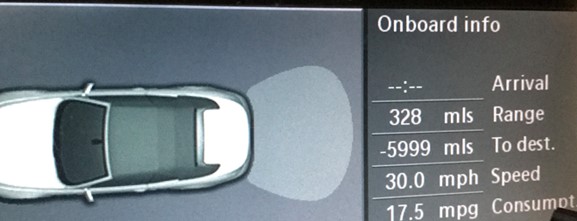

Greg Hansen sent this gem:

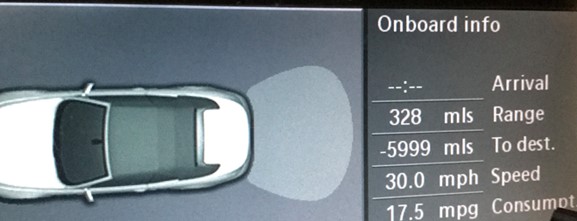

Ian McMill is in negative distance mode:

Have you submitted a Failure of the Week? I'm getting a ton of these and yours was added to the queue.

Jobs!

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter.

Please keep it to 100 words. There is no charge for a job ad.

Joke For The Week

These jokes are archived here.

I took this picture at the airport in Oslo. Not sure I'd care to fly on this jet:

About The Embedded Muse

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com.

The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster.