|

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email. |

| Contents |

|

| Editor's Notes |

Over 400 companies and more than 7000 engineers have benefited from my Better Firmware Faster seminar held on-site, at their companies. Want to crank up your productivity and decrease shipped bugs? Spend a day with me learning how to debug your development processes.

Attendees have blogged about the seminar, for example, here, here and here.

Jack's latest blog: On Discipline |

| Quotes and Thoughts |

Strategies for writing bug-free code: How could I have prevented this bug? How could I easily have prevented this bug? Steve Maguire |

| Tools and Tips |

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

Bill Knight suggested:

|

I sent in a limited review about the VNWA <https://www.sdr-kits.net/introducing-DG8SAQ-VNWA3> offered by SDR-Kits a few years ago. Seeing the review of the $50 VNA in this month's newsletter, I thought it might be useful to mention it again for those who might need a bit more. While it was really good at the time, the VNWA's hardware has since been refined, and the software is outstanding and has been continually improved. The Yahoo user group <VNWA@yahoogroups.com> is very active, with the author of the firmware/software as well as other members always ready to assist new users. The VNWA 3 covers a range from 1 kHz to 1.3 GHz and is powered by the PC's USB bus. It offers a dynamic range of 90 dB up to 500 MHz and better than 50 dB above 500 MHz. For its features, the price is very affordable at $436 to $589 depending upon options. |

Curtis Hendrix likes Hippomocks:

|

I've been using a neat unit testing mocking framework called Hippomocks (https://github.com/dascandy/hippomocks) to help unit test C code for Cortex M micros. It's a single header file that provides excellent C mocking support, in addition to C++ mocking (which I haven't used).

It's difficult to isolate code for unit testing with C's static linking, but Hippomocks makes it much easier. It does this by dynamically changing the function address at runtime to point to your fake or mocked function call. The downside is Hippomocks only works on Windows or Linux, so running unit tests on the target hardware is out.

I set up my firmware projects to build for both the target hardware and for Windows. I do most of the core firmware development using Test Driven Development by compiling the firmware into a Windows .lib, and tests are developed with MSTest. This is nice because I can develop and test the firmware without the target hardware. Granted, there's no replacement I've found for testing actual firmware on actual hardware, but I've found a drastic decrease in the amount of time spent with a debugger attached to the target hardware to hunt down logic errors.

Unit testing doesn't catch all the errors in the firmware, but it does significantly decrease the number of errors, especially when starting out.

A really simple example of how mocking works can be found here: https://github.com/dascandy/hippomocks/blob/master/HippoMocksTest/test_cfuncs.cpp

I really like Hippomocks because it makes isolating C code for unit testing extraordinarily easy. Functions can be replaced with simple stubs that do nothing or return a constant value, or they can be replaced entirely by providing a C++ lambda. |
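To make the idea of swapping a function for a constant-value stub concrete, here is a minimal sketch in plain C. It deliberately uses a different and simpler technique than Hippomocks: a function-pointer seam in the source code rather than patching the function address at runtime. All names (`read_adc`, `battery_millivolts`, the 3300 mV full-scale value) are hypothetical and chosen only for illustration.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical hardware access function type. */
typedef uint16_t (*read_adc_fn)(void);

/* On the target this would read a real ADC register; here it is a placeholder. */
static uint16_t read_adc_hw(void)
{
    return 0;
}

/* The seam: production code always calls through this pointer. */
static read_adc_fn read_adc = read_adc_hw;

/* Code under test: scale a 12-bit reading to millivolts (assumed 3300 mV full scale). */
uint32_t battery_millivolts(void)
{
    assert(read_adc != 0);
    return ((uint32_t)read_adc() * 3300u) / 4095u;
}

/* Test stub that returns a constant value. */
static uint16_t read_adc_stub_full_scale(void)
{
    return 4095;
}

/* Swap the stub in, run the code under test, then restore the real function. */
uint32_t battery_millivolts_with_stub(void)
{
    read_adc_fn saved = read_adc;
    read_adc = read_adc_stub_full_scale;
    uint32_t mv = battery_millivolts();
    read_adc = saved;
    return mv;
}
```

The cost of this approach is that the production code has to be written with the seam in place. A framework that redirects calls at runtime, as described above, avoids that change to the code under test.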

Timm Knape has a commenting tool:

|

I found it helpful to look at the dual of the problem. Instead of embedding comments into code I embed code into comments. That's not new: I learned about it ages ago at university with Knuth's Literate Programming.

But it was a little bit confusing for me: To understand a program you have to read the whole source code. I searched for a small tool where you can interrupt reading at any time and generate a program that has all the functionality you read about, but nothing more. As I am very impatient, I didn't find anything and wrote it myself.

Under https://itmm.github.io/pwg/ you can see the generated documentation of a simple password generator in C++. As a general format I used slides with notes because I believe that a slideful of code is the right amount to cope with at a time.

The tool for extracting code and HTML documentation from a markdown-like text file is freely available at https://github.com/itmm/hex. I have been using it personally for over a year now and have found it very powerful for writing compact embedded and other code. |

|

| Freebies and Discounts |

Mike Wisbiski won the miniDSO scope last month.

This month's giveaway is an Amazon Echo Show 5.

Enter via this link. |

| Convolutions, Redux |

In the last Muse I really blew it in an article on convolutions. Many people caught the mistake; Michael Tayler-Grint wrote:

|

It's possible that I'm simply confused, but I think there's a mistake in your section on convolutions. You say that the simple moving average can be calculated as $A_{N+1} = (X_{N+1} + N \cdot A_N)/(N+1)$, but this seems to be the equation for the cumulative moving average. The term $A_N$ has some contribution from the first term, $X_0$, and all subsequent terms up to $X_N$, so the result isn't the simple (boxcar) moving average.

The computationally less intensive way of calculating the simple moving average is $A_N = A_{N-1} + (X_N - X_{N-M})/M$, where $M$ is the filter length. You need to store the previous result as well as the last M samples but you do save some addition instructions, with the speed-up being roughly proportional to M.

I can't think of a generic shortcut for calculating a weighted moving average, but for certain sets of weights it feels like it should be possible. I think a geometric series should work with an equation similar to the simple moving average but haven't worked it through. |
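A minimal C sketch of the recursive boxcar update Michael describes, using a ring buffer for the last M samples. The filter length of 4 and all type and function names are hypothetical. The second function is the common exponentially weighted average, offered as one case where the weights form a geometric series; note that it has unbounded memory rather than a fixed window of M samples, so it is not exactly the filter he speculates about.

```c
#include <assert.h>
#include <stddef.h>

#define SMA_LEN 4   /* M, the filter length (hypothetical value) */

typedef struct {
    double samples[SMA_LEN]; /* last M samples, used as a ring buffer */
    size_t next;             /* index of the oldest sample */
    double average;          /* previous result, A[N-1] */
} sma_t;

/* Start with an all-zero history, so the first M outputs ramp up from zero. */
void sma_init(sma_t *f)
{
    assert(f != 0);
    for (size_t i = 0; i < SMA_LEN; i++) {
        f->samples[i] = 0.0;
    }
    f->next = 0;
    f->average = 0.0;
}

/* A[N] = A[N-1] + (X[N] - X[N-M]) / M */
double sma_update(sma_t *f, double x)
{
    double oldest = f->samples[f->next];  /* X[N-M] */
    f->samples[f->next] = x;              /* overwrite it with X[N] */
    f->next = (f->next + 1) % SMA_LEN;
    f->average += (x - oldest) / SMA_LEN;
    return f->average;
}

/* Exponentially weighted average: A[N] = A[N-1] + alpha * (X[N] - A[N-1]).
 * The weight on a sample that is k steps old is alpha * (1 - alpha)^k. */
double ema_update(double previous, double x, double alpha)
{
    return previous + alpha * (x - previous);
}
```

One caution with the recursive form in floating point: rounding errors accumulate in `average` over a long run, because each update builds on the previous result. Keeping an integer running sum of the samples and dividing on output sidesteps that.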

Eric recommended http://dspguide.com/ for more on convolutions.

Michael Covington wrote:

|

You mentioned convolutions... Selective averaging is important in astrophotography, because an underexposed digital image (the only kind we ever get) is full of random noise and may also contain high-amplitude one-time errors from airplanes or meteors passing by, or even cosmic rays. As you note, averaging N images reduces random noise to 1/sqrt(N) of its original level, and we often average hundreds of images. (I, being a wimp, usually work with sets of 10 to 20.) In addition, extreme outliers need to be completely rejected because they are completely erroneous (e.g., pixels that are very bright because of a passing airplane -- they should not contribute anything to the final image). An iterative kappa-sigma algorithm is often used, where everything farther out than K times the standard deviation is discarded and then a new standard deviation is computed and the whole thing is done again.

Here is an example of the result of averaging 15 images taken with an almost-off-the-shelf Nikon DSLR. It shows nebulae so faint that they are not well known to science. http://www.covingtoninnovations.com/michael/blog/1904/index.html#x190430

(For your joke section, I invite people to add to the list here, which you're welcome to copy and improve:

http://www.covingtoninnovations.com/michael/blog/1909/index.html#x190907A) |
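The iterative kappa-sigma rejection Michael describes can be sketched in C for a single pixel position across a stack of images. This is an illustration under stated assumptions, not the algorithm of any particular astrophotography package: it uses the population standard deviation, measures distance from the mean (some tools use the median), and the function and parameter names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Mean of the values that survive iterative kappa-sigma rejection.
 * v[]    : one pixel's value in each of the n images
 * keep[] : caller-provided scratch array of n flags (1 = still in use)
 * kappa  : rejection threshold in standard deviations; must be >= 1 so
 *          that at least one sample always survives a pass */
double kappa_sigma_mean(const double *v, unsigned char *keep, size_t n,
                        double kappa, int max_iterations)
{
    assert(v != 0 && keep != 0 && n > 0 && kappa >= 1.0);
    for (size_t i = 0; i < n; i++) {
        keep[i] = 1;
    }

    double mean = 0.0;
    for (int iter = 0; ; iter++) {
        /* Mean and variance of the samples still kept. */
        double sum = 0.0, sum_sq = 0.0;
        size_t count = 0;
        for (size_t i = 0; i < n; i++) {
            if (keep[i]) { sum += v[i]; count++; }
        }
        mean = sum / (double)count;
        for (size_t i = 0; i < n; i++) {
            if (keep[i]) { double d = v[i] - mean; sum_sq += d * d; }
        }
        double variance = sum_sq / (double)count;

        if (iter >= max_iterations) break;

        /* Discard anything farther than kappa standard deviations from
         * the mean. Comparing squared distances avoids calling sqrt(). */
        double limit = kappa * kappa * variance;
        size_t rejected = 0;
        for (size_t i = 0; i < n; i++) {
            double d = v[i] - mean;
            if (keep[i] && d * d > limit) { keep[i] = 0; rejected++; }
        }
        if (rejected == 0) break;  /* nothing left to discard */
    }
    return mean;
}
```

With small stacks the choice of kappa matters. A single bright outlier among 10 samples can never sit more than about 2.85 standard deviations from the mean, because the outlier itself inflates the standard deviation, so a threshold of 3 would not remove it on the first pass.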

Harold Kraus contributed:

|

Yeah, recognizing the convolution of the moving average (really most any digital filter) on a time sampled signal helps you realize that the high frequency components in the noise get aliased into your filtered "average". We have a commercial test rack that has a 200 Hz PWM signal driving servoed solenoid actuators and that current noise gets into most every instrument that naturally samples at integer milliseconds, so the PWM signal can be seen replicated in the data but at much lower frequency, on the order of 5-30 mHz; a simple rolling average on that, besides having terribly slow response, just moves the frequency around. |
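The effect Harold describes follows from the standard aliasing relation: a tone shows up in sampled data at its distance from the nearest integer multiple of the sample rate. A short C sketch, with hypothetical numbers in the usage note (the sample period and the PWM clock error are assumptions, not figures from his test rack):

```c
#include <assert.h>

/* Apparent frequency of a tone at f_signal_hz when sampled at f_sample_hz:
 * the distance to the nearest integer multiple of the sample rate. */
double alias_frequency_hz(double f_signal_hz, double f_sample_hz)
{
    assert(f_signal_hz >= 0.0 && f_sample_hz > 0.0);
    long k = (long)(f_signal_hz / f_sample_hz + 0.5);  /* nearest multiple */
    double diff = f_signal_hz - (double)k * f_sample_hz;
    return (diff < 0.0) ? -diff : diff;
}
```

For instance, if an instrument samples every 10 ms (100 Hz) and the nominally 200 Hz PWM actually runs 100 ppm fast at 200.02 Hz, `alias_frequency_hz(200.02, 100.0)` gives about 0.02 Hz, or 20 millihertz: a slow ripple far below the PWM frequency that a rolling average will not remove.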

|

| More on Ultra-Low Power Design |

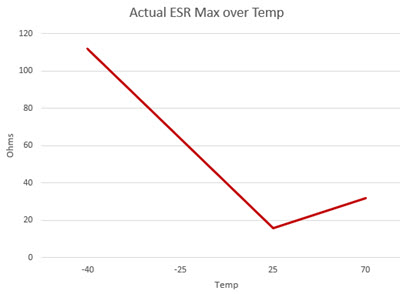

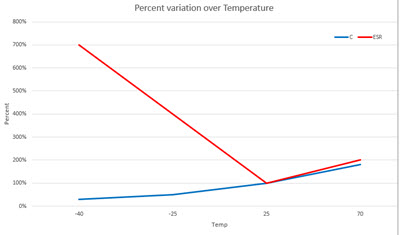

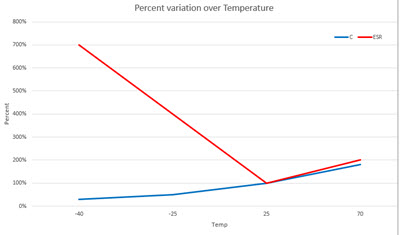

In the last Muse I noted some results from my research on building systems that can run for years on coin cells. Several readers had comments. Paul Carpenter, for instance, has some interesting data about supercapacitors. These devices provide farad-level capacitance in small packages. ESR, or equivalent series resistance, is the inherent resistance that is effectively in series with a perfect capacitor, and Paul found this varies a lot:

He notes that some supercaps freeze at -40C! He also mentioned that he saw a circuit that used a Schottky diode to ensure a battery inserted backwards wouldn't destroy the circuit. But it used a boost converter to overcome the diode's potentially 0.4V drop when forward biased. When the MCU slept it consumed so little current that the diode's forward drop was close to zero... so the voltage presented to the MCU exceeded its absolute max rating.

William Watson had some comments about low-power, noting sections of my report:

|

2 - On Typical Specifications

A major processor vendor came out with a CMOS version of their well-known processor, with truly wonderful power consumption figures. Frustratingly, when we got some into our lab, we couldn't come close at all to the "typical" current consumption listed. After lots of effort pushing on them, we were informed that they despaired of the range of current consumption due to variations of loads on the address and data buses, program-to-program differences, etc. As a result, the typical current listed was for the one consistent configuration: with the processor held in reset!

9 - Reverse Battery Protection

Adding a series diode for protection against a reversed battery means that you pay in lost voltage for protection all the time when the battery is correctly inserted, and none when the battery is reversed. Instead, you could use a reversed diode across the battery input. Then you'd pay with leakage current during normal operation, and risk shorting the battery out if someone installed it backwards. Looking at Digikey for SMT diodes with low leakage current (selecting all with 100 nA or less) then picking the cheapest without regard to other characteristics, I found this part: https://www.onsemi.com/pub/Collateral/BAS19LT1-D.PDF - 100 nA leakage at 100 Volts, 1.0 Vf at 100 mA, 1.25 at 150 mA. $0.0148 Q10,000

Your battery curves showed 2.8 V at 10 mA and 2.6 V at 30 mA when new, so 200 mV over 20 mA or 10 Ohms. That fits well with the (essentially) open circuit 2.9 Volts. You might be able to get 150 mA from the battery, dropping its output to 1.4 Volts. That's pretty close to the diode's Vf at 150 mA. Would -1.25 V fry your circuits? Maybe… so this "protection" might not really help.

Depending on the peak current consumption for your application, you might be able to insert a series resistor in line with the battery and reduce the peak current through the diode and across the rest of your circuit.

Of course, the leakage current max of 100 nA is at 25C. If you go up to 150C, the current goes up to 100 uA! Oops!

With an actual Low-Leakage diode, the MAX leakage current is only 80 nA at 150C.

https://www.diodes.com/assets/Datasheets/BAV116HWFQ.pdf

Vf stays relatively low even at 150 mA of forward current. About $0.075 Q3000.

Blindly doing a search for "low leakage diode" and accepting what Digikey turns up, I see this Schottky part: (Sorry - the link was deleted).

It has nice curves for reverse leakage at different temperatures, and a Vf of only 0.6V at 100 mA. Of course, it costs $0.26 Q1, $0.044 Q3000, so the battery holder you suggest might be a better deal anyway!

The FET solution that TI proposes may be better, with much less loss, for not much greater cost.

|
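William's battery arithmetic can be checked with a few lines of C. This is a sketch of a simple model only (an ideal voltage source in series with a fixed resistance); real coin cells are not this linear, and the function names are hypothetical. The numbers in the usage note are the ones from his letter.

```c
#include <assert.h>

/* Internal resistance estimated from two (voltage, current) points on a
 * discharge curve. */
double internal_resistance_ohms(double v1, double i1_amps,
                                double v2, double i2_amps)
{
    assert(i2_amps != i1_amps);
    return (v1 - v2) / (i2_amps - i1_amps);
}

/* Terminal voltage of an ideal source with a series resistance. */
double loaded_voltage(double v_open, double r_ohms, double i_amps)
{
    return v_open - r_ohms * i_amps;
}
```

`internal_resistance_ohms(2.8, 0.010, 2.6, 0.030)` gives about 10 ohms, and `loaded_voltage(2.9, 10.0, 0.150)` gives about 1.4 V, matching the figures above. The same model also reproduces the 2.8 V reading at 10 mA from a 2.9 V open-circuit voltage.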

Before one uses a reverse-biased diode across a battery, I'd check with UL to see if that violates their rules. |

| This Week's Cool Product |

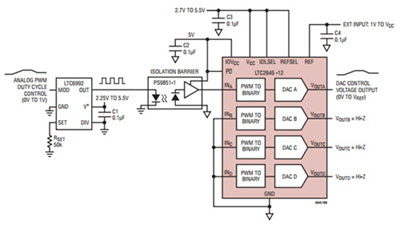

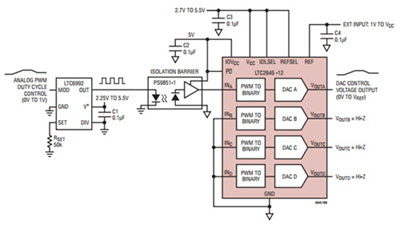

I came across this circuit more or less by accident and thought it pretty clever:

It's a way to transmit analog data over a single-wire (plus ground) digital link. The LTC6992 converts an analog input into a pulse-width modulated bit stream, which is reconstructed by the LTC2645. The latter digests PWM inputs into DAC outputs. Being digital, one could send the signal over potentially quite long distances without losing fidelity, using a protocol-free RS-485/422 link. Though the LTC2645 can output signals with 12 bits of resolution, the LTC6992 appears to not offer anywhere near the same precision, with errors of several percent possible. Still, this is easier than stringing a couple of MCUs together.
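To put the precision mismatch in numbers, here is a generic C sketch of mapping a measured duty cycle to a 12-bit code, plus a helper that expresses a percentage error in 12-bit steps. This illustrates the idea only; it is not taken from either part's datasheet, the function names are hypothetical, and the 2% figure in the usage note is an assumed stand-in for "several percent".

```c
#include <assert.h>
#include <stdint.h>

/* Map a measured PWM high time and period (same time units) to a 12-bit code. */
uint16_t duty_to_code12(uint32_t high_ticks, uint32_t period_ticks)
{
    assert(period_ticks > 0 && high_ticks <= period_ticks);
    /* Round to nearest; 64-bit math avoids overflow for large tick counts. */
    uint64_t scaled = (uint64_t)high_ticks * 4095u + period_ticks / 2u;
    return (uint16_t)(scaled / period_ticks);
}

/* Number of 12-bit steps spanned by an error given in percent of full scale. */
double error_in_lsb12(double error_percent)
{
    return error_percent / 100.0 * 4095.0;
}
```

A 50% duty cycle, `duty_to_code12(500, 1000)`, maps to code 2048. An error of 2% of full scale, `error_in_lsb12(2.0)`, spans roughly 82 of those steps, which is why the receiving end's 12 bits do not translate into 12 bits of end-to-end accuracy.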

Note: This section is about something I personally find cool, interesting or important and want to pass along to readers. It is not influenced by vendors. |

| Jobs! |

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter.

Please keep it to 100 words. There is no charge for a job ad. |

| Joke For The Week |

These jokes are archived here.

From Tom Razov:

A QA engineer walks into a bar. Orders a beer. Orders 0 beers. Orders 99999999999 beers. Orders a lizard. Orders -1 beers. Orders a ueicbksjdhd. First real customer walks in and asks where the bathroom is. The bar bursts into flames, killing everyone. |

| About The Embedded Muse |

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com.

The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster.

|