|

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email. |


| Editor's Notes |

Tip for sending me email: My email filters are super aggressive and I no longer look at the spam mailbox. If you include the phrase "embedded" in the subject line your email will wend its weighty way to me.

Sid Jones is looking for help with an interesting and unusual project:

|

I’m restoring some old Intel microcomputer kit.

I've got it back together, refreshed the 1702A EPROMs with a home-brew programmer, built a 20mA 100 baud to USB serial interface, but I'm still missing the original paper tape software.

Might it be possible for you to put a notice in your missive, requesting anybody who might have access to said paper tapes or any source code, or further info to get in contact, please?

|

You can contact Sid here: jonesthechip@logicmagic.co.uk

On a sad note, Don Lancaster passed away last year at 83. Steve Leibson has a nice article about him.

|

| Quotes and Thoughts |

From Krish Rao: "You can't walk away from risks you've created for others." -Nassim Nicholas Taleb. |

| Tools and Tips |

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past. |

| Engineers: Born or Made? |

Are engineers born or made? The piece in the last Muse engendered a lot of feedback. Charles Manning wrote:

|

The born or made question is interesting. I lean very much towards the "born" side, but I think the young engineer still needs some nurturing to develop. By "nurturing", I mean opportunities to develop - not necessarily being handed resources.

For example, my first "opportunities" came when I was about 5 and needed to fix kerosene lamps and fridges and sort out torch batteries etc that my less competent parents could not fathom (artist and lawyer... no hope). Without that our seaside vacations would not have had light and fresh food.

I started playing with crystal sets when I was about 6 - before I could read properly. My first crystal set did not work because I failed to read the text properly and did not understand the need to scratch the enamel coating off the wire to solder it. A few months later I was fiddling with bits of wire, batteries and bulbs and had an epiphany - the enamel was an insulator but could be removed. I went back to my crystal set and it worked!

I think modern educators are under the impression that any children can be dipped in the STEM bucket by making science "exciting". I think this is really a waste of time and money. For the person wired to become an engineer it is already exciting/interesting without the drama. It would be much better to expend the resources on those who show an interest and flair rather than a "no child left behind" policy.

Real STEM is extremely boring to many people, but is exciting to those that really have the aptitude. Why give those that are not going to make it the wrong impressions?

Watching paint dry might be the idiom of an extremely boring activity, but to an engineer it can be a fascinating experience.

I am still a few years away from retirement (almost 62). I still study and spend at least 10 hours a week just finding out new stuff. I can't see that ever stopping.

|

Marinna Martini sent this:

|

"When I hear people promoting STEM for this group of people or that, I shudder. One can learn the subjects, but being an engineer is much more than that. It starts inside."

With all due respect to Vlad Z in Muse#481, STEM promotion is needed for many groups for whom the opportunity to express that engineering creativity is denied, either by circumstance, poverty or societal norms.

It takes programs like the now common STEM promotion to get those people out of the closet (including out of the closet of their own minds). If we don't do that, so much talent we really need now will be lost. |

Jim Dahlberg has his suspicions:

|

"Born or Made?"

I don't know for sure, but I suspect made. I have had several people that I have just met, ask me if I am an engineer. There is something in our personality, presumably the way we talk, that is different than most people and some people can sense those differences.

|

Jan Woellhaf has a familiar story:

|

Vlad Z's response to an article in your last Muse could have been written by me. I have exactly the same history. I still remember my frustration that the cardboard box/straight pin/rubber band pinball "machines" I made at age 4 or 5 didn't work like I wanted them to.

My buddy Franklin made the mistake of letting me "repair" his wind-up alarm clock when I was 8 or 9. When the repair was complete, the clock's time was exactly correct twice a day and the hands could even still be set. The clock was missing a few parts, however, but I had an impressive collection of gears and intricate (we called such things "delicatessen") parts hanging from my wall.

We lived on the Denver University campus, and at one time or another, I explored every room, including the Chancellor's office one day when he wasn't there. The students at the amateur radio club, W0ANA (and my call sign is now WA0ANA in their honor), were especially kind to me and happy to answer my endless questions. When I was 12 my folks bought me a Heathkit communications receiver. I loved constructing it and learned about B+ from it the hard way. One of the guys at the ham shack gave me the parts to build a CW transmitter and I was soon heard on the air as KN0WRN.

Years later, at BYU, I bought my first slide rule. I still have it, although until today I think the last time I actually used it was probably 1975. That's when I got my first electronic "scientific" calculator, a TI SR-50, which I also still have.

I just used my slide rule to divide 481 by 12. I got a tiny bit over 4. Let's see ... that must be 40. If you've published one Muse per month for 481 months, you've been at it for 40 years! Is that possible? If so, you deserve some kind of award.

|

Dave Telling mirrors many of us:

|

Dittos to what Vlad Z said about engineers. His experience echoes mine and that of a number of engineers with whom I have worked over the years. On the other side of that coin, we had a recent EE grad come to work for us who had decided engineering would be a good income generator, but who had zero prior “engineering/tech” experience – no tech hobbies, no particular interest in electronics, and it showed in his inability to do even simple designs without intense supervision. I believe that he went to work for a local telco, doing some software work.

The rest of the engineering staff all had some level of personal involvement with either electronic or mechanical projects, and had the engineering “mindset” – the “what if we did…” attitude.

I absolutely agree that trying to force/entice people into a career path for which they have no inherent interest will result in less than optimum results.

|

Matt Boland recalls his childhood:

|

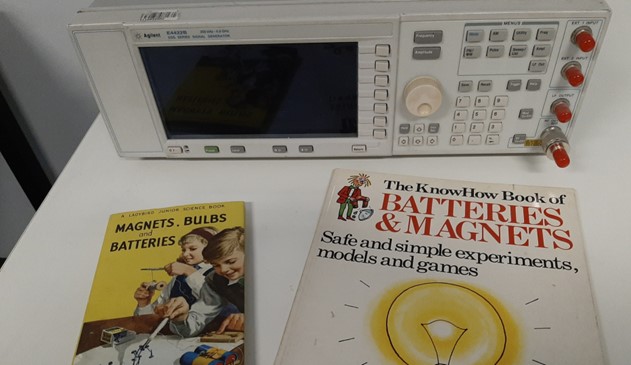

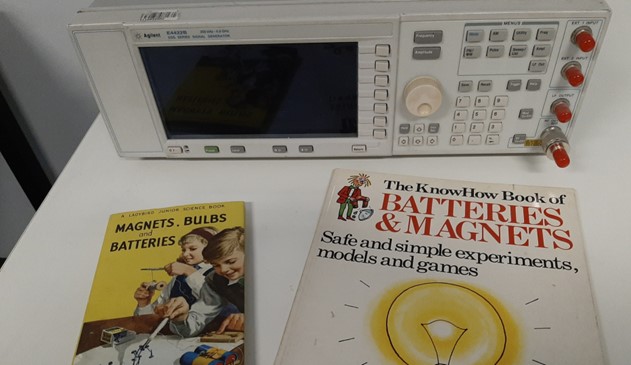

I just bought two of my favourite books from my childhood off eBay. The “Are Engineers born or made” thread in your last few newsletters prompted me to take a photo and send it in.

The Ladybird book was given to me by my Mum when I was five years old in 1972. It describes basic electric components, magnets, electromagnetism, electroplating and more. It came with me everywhere and I read it cover to cover hundreds of times.

A friend at school put me onto the Usborne book in grade 4 at school. That would have been 1976. I borrowed that book over and over and finally I saved up and bought a copy for myself. It had heaps of great stuff to build and obviously covered much of the same stuff as the Ladybird book.

I did most of the experiments from both books. Mum bought me the book as I always pulled every battery operated toy I had apart within hours of receiving it. I was always after the lightbulbs and motors so I could make my own cool stuff.

I would have to say that engineers are born. For me, I was definitely made better at engineering by caring people. By Mum, and by my teacher Mr Prindable in grade 7 who both listened to me and encouraged me.

The books are in front of my Agilent E4422B RF SigGen.

|

|

| Hogwarts School of Software Engineering |

In response to the total collapse of the economy after last week's exploitation of security holes in every electronic product on the market by Lord Voldemort, headmaster Severus Snape announced the renaming of Hogwarts to Hogwarts School of Software Engineering.

"There is evil in the world," Professor Snape snarled, "and it's worse than a pack of werewolves. We have learned that he-who-can't-be-named found an abandoned Dell notebook in the slag heaps of Azkaban prison and took an on-line C programming course. His army of malevolent Dementors have now mastered the black arts of the script kiddies."

Snape later noted that the Dementors (and their master) are able to work their evil ways only because of the gaping security holes left in electronic products by the engineers (and their bosses) who don't know a GPOS protection profile from the SKPP. And users are at least as culpable as so many never bother to change or even implement passwords. "The happy days of Harry Potter 1 through 7 were like the innocent eons of the Garden of Eden," he said. "When Adam and Eve were cast out they found a world of connected poorly-secured devices. Then the Internet of Things came about, and the Things were mostly useful as attack vectors. Think 50 billion connected devices, one he-who-can't-be-named, and a couple of million Dementors who neither need food nor bathroom breaks, all trained in the Dark Arts of tunneling through firewalls."

Hogwarts will continue to recruit eleven-year-olds with magical skills, but now the course material will contain rigorous instruction in highly-disciplined firmware engineering. Coursework will change to reflect this new focus. For instance, Defense Against the Dark Arts will now feature in-depth training in IPsec, deep packet inspection, and thwarting DDoS attacks.

But the school will be about much more than security.

"We're facing a software crisis," Snape muttered, "the need for software far outstrips the industry's ability to create it. The only possible solution is magic."

In the Transfiguration class young magicians will focus on refactoring legacy code from the creaky disaster of technical debt into robust, bug-free maintainable software by using their wands to issue the correct spells.

Study of Ancient Runes will expose students to the cryptic languages of yore that are still found in many systems, like Perl, APL and Lisp. The Potions class will cover design patterns, and Charms will show students how to consign crummy code forever to the Chamber of Secrets.

Snape concluded with: "When the first class graduates from the revamped Hogwarts we expect that no muggle will ever again be allowed to contend with the mysteries of software engineering."

|

| More on Testing |

The last Muse also included an article about testing. In it I mentioned there are tools that will construct automatic tests from the code. Alas, I neglected to mention that these tests will check only against the code, not against requirements. While the former is indeed useful, requirements should be the defining issue.

Harold Kraus made this clear:

|

Note that "a function is fully tested" is relative. In the DO-178C context you mentioned, what "fully tested" means depends on the assessed hazardous effects of error. Moreover, "fully tested" here also means the degree of coverage of correctness of outputs with respect to requirements only.

Yes, v(G) gives a "minimum number of tests one must run in order to insure" a degree of coverage, that particular degree of coverage being Branch Coverage.

Please note that with respect to source code, Branch Coverage is not exactly the Decision Coverage that DO-178C requires. We learned this only recently. Decision Coverage requires covering both true and false results of all Boolean expressions, not just those in control of structural branch statements [Rierson, 2013]:

- “Decision – A Boolean expression composed of conditions and zero or more Boolean operators. If a condition appears more than once in a decision, each occurrence is a distinct condition.”

- “Decision coverage – Every point of entry and exit in the program has been invoked at least once and every decision in the program has taken on all possible outcomes at least once.”

Note also that depending on the definition of Path Coverage, Path Coverage is not the same as Branch Coverage; some use Path Coverage and Branch Coverage synonymously, but a common definition of Path Coverage is that every combination of branches is followed.

Your three paths do accomplish Branch Coverage, but there is one more unique path that could be taken, 1-3-4-5-6-7. RTCA determined that exhaustive Path Coverage of this sort was excessive for even Level A, but I can imagine that if you ran only three out of four paths on arbitrary code, you might miss an erroneous output, especially with C shenanigans.

And a final word about automated test generation where the tool generates test cases based on the tool's analysis of the code instead of requirements. Automated test generation from source code is just as doomed to succeed as the manual kind.

|
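Harold's distinctions can be made concrete with a small sketch. The two functions below are hypothetical (neither comes from the previous Muse or from DO-178C), and how any particular coverage tool scores them is an assumption. `in_window` contains a decision but no structural branch statement, so a single test executes every line, yet decision coverage as quoted above still needs the expression to come out both true and false. `duty_percent` has two sequential `if` statements: three tests exercise both outcomes of each, but only the fourth path exposes the out-of-range result.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical: a decision with no if/while/for depending on it.
   One test runs every statement; decision coverage still wants
   the Boolean expression evaluated to both true and false. */
bool in_window(int value, int low, int high)
{
    bool ok = (value >= low) && (value <= high);
    return ok;
}

/* Hypothetical: the result is meant to stay at or below 100.
   Two sequential ifs give four paths through the function. */
int duty_percent(bool boost, bool warm)
{
    int duty = 50;
    if (boost)
        duty += 30;
    if (warm)
        duty += 30;
    return duty;
}

/* Three tests that take both outcomes of each if statement.
   All of them pass, so the defect goes unnoticed. */
void test_three_paths(void)
{
    assert(duty_percent(false, false) <= 100);
    assert(duty_percent(true,  false) <= 100);
    assert(duty_percent(false, true)  <= 100);
}

/* The fourth path: both flags set. This is the only case that
   shows the result leaving its intended range. */
bool fourth_path_is_out_of_range(void)
{
    return duty_percent(true, true) > 100;
}
```

Calling `test_three_paths()` from a test runner passes, while `fourth_path_is_out_of_range()` returns true. Neither set of tests says whether 100 is the right limit; that has to come from the requirements.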

Charles Manning commented:

|

Also, a comment on testing...

You might have seen the various discussions on branchless programming.

This is where code is rewritten with the goal of reducing branches (i.e., reducing or eliminating if then else and similar constructs).

The main reason to do this is to reduce branching costs on highly pipelined processors. A CPU pipeline has multiple instructions in overlapping stages of execution. If a branch occurs then typically the pipeline needs to be flushed and a lot of work is thrown away.

Considering there are CPUs with over 30 pipeline stages, there is some value in getting this right.

Various CPUs take steps like branch prediction to reduce the costs, but clearly a branch that is not there is better than a branch that is there. Hence the motivation for branchless processing.

For example we can replace

    if (a < b)
        greater = b;
    else
        greater = a;

with the branchless version:

    greater = (a < b) * b + (a >= b) * a;

It seems to me that would reduce the cyclomatic complexity too.

I do have some reservations about cyclomatic complexity though. I think that in complexity terms there is no real difference between these two examples, i.e., trivial selection cases that are exhaustive should not drive up complexity scores.

It would be interesting (well to me anyway) to see what others think.

|
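Charles's two versions can be checked against each other with a short sketch. The function names are made up for illustration. The branchless form relies on C's relational operators yielding an `int` of 0 or 1. Whether a compiler actually emits fewer branch instructions for it is an assumption that depends on the compiler, optimization level, and target, so inspect the generated code before relying on it.

```c
#include <assert.h>
#include <stdbool.h>

/* Branching version, as in the letter. */
int greater_branching(int a, int b)
{
    if (a < b)
        return b;
    else
        return a;
}

/* Branchless version: (a < b) and (a >= b) each evaluate to 0 or 1,
   and exactly one of them is 1, so exactly one operand is selected. */
int greater_branchless(int a, int b)
{
    return (a < b) * b + (a >= b) * a;
}

/* Compares both versions for every pair in [low, high].
   Returns true if they always agree. */
bool versions_agree(int low, int high)
{
    for (int a = low; a <= high; a++) {
        for (int b = low; b <= high; b++) {
            if (greater_branching(a, b) != greater_branchless(a, b))
                return false;
        }
    }
    return true;
}
```

The exhaustive comparison is practical here only because the range is small. It also shows the testing angle: the branchless function has a single path at source level, yet it still needs inputs on both sides of the comparison (and the equal case) to show that it selects the right operand.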

Lars Pötter wrote:

|

I agree that more testing and more measuring would be better, but wouldn't it always be so? I think you shipped an important disclaimer, as relying on tools for testing can go wrong.

Maybe we should tell software engineers that they do not need to do testing, as their managers will do it:

A colleague of mine used cyclomatic complexity to evaluate the quality of software that others created for him. He needed to only look at the functions with a high cyclomatic complexity rating to find the worst parts of the code. Those were also usually the spot where the bugs were hiding.

An external appraiser told us that he evaluates a code base by linting it. He starts with no rules disabled and then looks at the issues that are reported the most. In the beginning these are usually harmless things where the style guide deviated from the lint defaults. He then disables this category of issues and repeats. And this leads him to the real issues of the code base.

But I disagree on the tools to automatically create tests. Automatic tests can only find some kinds of issues, and a tool that creates thousands of tests can eliminate some classes of errors. But if this then leads to a 100% code coverage target, which in such scenarios always means only line coverage, and that in turn leads to the removal of dead code, then I get a very bad feeling. You might end up with a code base that is worse than before. It now spends a lot of time range checking values that have been range checked before, and even though the team is convinced that they now have the best software ever, they still run into bugs. Maybe even more than before, but definitely different issues than before, which might mean harder-to-find issues.

What those tools cannot do is think about what the result of the tests should be. What the best thing to do in an edge case is can be a different question. Whether some values or behaviors even make sense is something that the tool cannot decide.

For the best bang for the buck I would suggest doing static analysis (lint, cyclomatic complexity, ...) and then looking into the code where the static analysis is unhappy, and adding tests to the complex functions. Also add descriptions to the tests that explain the use case.

It is an often forgotten reality that tests break. When working with a code base, sooner or later tests will break. Automatically generated (stupid) tests break first, and they will probably just be removed.

A well-documented test, on the other hand, could be the equivalent of the senior engineer saying "Oh, if you change this, how will it work when ..." If one has a good answer to that, then the test can be changed; otherwise one might realize that the test is correct and the code needs more love.

Doing something without thinking can be better than not doing anything.

It can also be dangerous, and thinking and then doing is always better.

|
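Lars's suggestion to attach a description to each test might look like the following sketch. The function under test, its 10-bit input range, and the 3300 mV reference are all invented for illustration; the point is the comment that records the use case, so that whoever breaks the test later knows what question to answer before changing it.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical function under test: converts a raw 10-bit ADC
   reading to millivolts, assuming a 3300 mV reference. */
uint32_t adc_to_millivolts(uint16_t raw)
{
    if (raw > 1023u)
        raw = 1023u;                      /* saturate at full scale */
    return ((uint32_t)raw * 3300u) / 1023u;
}

/* Use case: a glitch on the bus can deliver a reading outside the
   10-bit range. Callers assume the result never exceeds 3300 mV,
   so an out-of-range reading must saturate rather than scale up.
   If you change the clamp, how will those callers behave? */
void test_out_of_range_reading_saturates(void)
{
    assert(adc_to_millivolts(1023u) == 3300u);
    assert(adc_to_millivolts(0xFFFFu) == 3300u);
}
```

A generated test could arrive at the same two assertions, but without the comment there is nothing to tell a future maintainer whether the saturation is a requirement or an accident of the current code.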

|

| Failure of the Week |

From Curt Bruns - a hot day in AZ:

And this from Anne Adamczyk:

Have you submitted a Failure of the Week? I'm getting a ton of these and yours was added to the queue. |

| Jobs! |

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter.

Please keep it to 100 words. There is no charge for a job ad.

|

| Joke For The Week |

These jokes are archived here.

Real programmers don't document. If it was hard to write, it should be hard to understand. |

| About The Embedded Muse |

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com.

The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster. |