
You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email.


| Editor's Notes |

Tip for sending me email: My email filters are super aggressive and I no longer look at the spam mailbox. If you include the phrase "embedded muse" in the subject line your email will wend its weighty way to me.


| Quotes and Thoughts |

Code chock full of #ifdefs is not 'portable code'. It is, at best, code that’s been ported a lot. There’s a big difference. (From here).

| Tools and Tips |

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

Tyler Herring sent this info about running ancient DOS code on a modern x86:

|

- In some cases even programs run from the DOS command line no longer function.

I ran into this issue about 6 years ago trying to run an old 16-bit program that was built with QBASIC as part of a legacy toolchain I was handed... The version of Windows on my computer would not even pretend to run it.

I was familiar with a program called DosBox, which basically emulates an x86 running DOS, because it has been a popular way to run many of the older video games I grew up on (Commander Keen, Wolfenstein 3D, etc.). So, just to see what would happen, I tried it out, and it was actually able to run our old 16-bit program.

I had old build files available to ensure that the output I generated matched what was actually expected. Once we later dug up the original source code and were able to run the program on new input files, we could unwind the secrets of what it was doing.

|

In the very early 80s, before C was an option for embedded work, I wrote Basic compilers for the Z80 and x86 (when the IBM PC came along). Though these versions could run programs under CP/M and DOS, they also generated ROMable code for embedded systems. Some of that code made it onto the space shuttle. There were a couple of unique features: multitasking was built-in (essentially compiled apps had an RTOS), and it ran just like an interpreter, except the RUN command did a very fast compile. But for some reason it hasn't worked under the DOS cmd window for years. I tried DosBox and the old code came to life, running seemingly perfectly. We did sell about 10k copies of that compiler. Here is an x86 executable and here's the manual. No guarantees after all these years!

Peter House has some great tool recommendations:

|

I have always used MS Word for its ability to easily create tables and control the style of a document using style sheets, and have been using Word since DOS version 1!

Lately, I cannot open some of my earlier Word documents*** using recent versions of Word, and this is a problem going forward. While Microsoft does offer some filters to allow Word to open previous versions, they are limited and not easy to use. Most of those earlier documents are fairly easy to open in Notepad++ to get the text out, but that is no longer possible with files from most versions of Word for Windows, especially since the introduction of Unicode.

I am now using .MD, or Markdown, files to create new documentation. Markdown is what its name implies: a simple text markup language, geared down so that documents are easy to read even when a viewer is not available. Headings, tables and bulleted lists are all simple, and more advanced features are possible using style sheets, although, for me, the inserted style tags reduce the native readability of the naked document. GitHub, a subsidiary of Microsoft since 2018, supports the MD format, and now nearly every GitHub project has at least a Readme.MD file in its root folder to provide basic notes about the project. Good projects have several of these Markdown files to describe the project in more granular detail. Some of the Markdown files on GitHub are great examples of how to use Markdown for project documentation; unfortunately, many others are not.

Notepad++ and VSCode provide support for creating, editing and viewing Markdown files. Microsoft's "PowerToys" accessory pack includes a viewer add-on for File Explorer that shows formatted Markdown in the Explorer preview pane.

*** This seems to be a trend in applications: several other applications cannot open their older files in newer versions. CorelDraw will no longer open files from versions prior to version 7, which caused me to stop using CorelDraw and transition completely to Inkscape, an open source program with support for open file formats. Fortunately, there are websites that convert old CorelDraw files to .svg (Scalable Vector Graphics) format. My thanks to the cloud!

|

When it comes to the trade press, Fabrizio Bernardini disagrees with me about Circuit Cellar:

|

Regarding your note on Circuit Cellar, and considering your international audience, I would like to mention Elektor, the widely read electronics magazine in Europe. For some time Elektor was tied to Circuit Cellar, but now they have separated again.

I subscribe to both and like both, but these days I find Elektor more oriented toward people who like to learn and experiment (even with FPGAs, SDR, and other new technologies), while Circuit Cellar is sometimes too oriented toward industry.

The ease with which you can now load your magazines onto an iPad makes browsing them, as well as taking notes on the same device, very convenient.

And let's not forget that younger generations are losing contact with the magazine format, which has a lot of benefits compared to casual browsing on the Internet. Keeping certain magazines alive is important for the cultural aspects of any discipline.

|


| Freebies and Discounts |

Kaiwan Billimoria kindly sent us three copies of his new book Linux Kernel Debugging for this month's contest. It's a massive tome, at 600+ pages, that anyone working on Linux internals would profit from.

Enter via this link.

| A Tool To Manage Defects in the Field |

The folks at Percepio have been “bug”ging me to look at their DevAlert tool. I wrote “bug”ging, as that’s what DevAlert is all about. Managing bugs.

Suppose you have thousands of devices deployed around the world and a defect occurs. You have a few options:

- Ignore the bug report as you’re only weeks from retiring.

- Have an army of support people dealing with the angry emails and phone calls. They may well create a blizzard of bug reports for engineering to slog through.

- Log bugs to the cloud.

Options 2 and 3 have a major problem: A bug report will generally not contain much debugging information. And, if there are thousands of deployed devices, your in-box and support people can be overwhelmed by the mountain of reports about the same bug. For any non-trivial problem you’ll likely get a slew of conflicting information from the customer (“when I press the red button when the moon is full, and the fans are on in the next room, then…”). Without engineering information about the problem, all you and your support team get are reports about symptoms. Wouldn't it be nice to have fingerprints as well? Maybe a snapshot of the system’s internal states?

If DevAlert is integrated into the IoT device, the developer can add code, sort of like very smart assert macros, that logs system data to a ring buffer. When an error occurs, that buffer is sent to the cloud. The buffer can be as long or as short as you like: maybe just the program counter, or perhaps the entire task control block. Engineers add these logging statements while writing the application, which isn't a bad idea anyway, as it eases debugging.
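To make the ring-buffer idea concrete, here is a minimal C++ sketch of a fixed-size log that overwrites its oldest entries and can be snapshotted when an error is detected. It is not Percepio's API or DevAlert's data format: the `LogRecord` layout (timestamp, event code, value) and the `RingLog` class are hypothetical, and actually sending the snapshot to the cloud is left out.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical record layout: not DevAlert's actual format.
struct LogRecord {
    std::uint32_t timestamp;  // e.g. a system tick count
    std::uint16_t event_id;   // application-defined event code
    std::uint32_t value;      // the system parameter being logged
};

// Fixed-capacity ring buffer. When full, each new record overwrites the oldest,
// so the buffer always holds the most recent history leading up to a fault.
template <std::size_t N>
class RingLog {
    static_assert(N > 0, "RingLog capacity must be non-zero");

public:
    void log(std::uint32_t timestamp, std::uint16_t event_id, std::uint32_t value) {
        buf_[head_] = LogRecord{timestamp, event_id, value};
        head_ = (head_ + 1) % N;  // advance and wrap the write index
        if (count_ < N) {
            ++count_;
        }
    }

    // Copy up to max_records of the most recent entries, oldest first, into a
    // caller-supplied array (no heap use). Returns the number copied. An error
    // handler could call this and hand the result to an uploader.
    std::size_t snapshot(LogRecord* out, std::size_t max_records) const {
        assert(out != nullptr || max_records == 0);
        const std::size_t n = count_ < max_records ? count_ : max_records;
        const std::size_t start = (head_ + N - n) % N;  // oldest record to copy
        for (std::size_t i = 0; i < n; ++i) {
            out[i] = buf_[(start + i) % N];
        }
        return n;
    }

    std::size_t size() const { return count_; }

private:
    std::array<LogRecord, N> buf_{};
    std::size_t head_ = 0;   // next slot to write
    std::size_t count_ = 0;  // records currently held, never more than N
};
```

Choosing N is the trade-off described above: a few small records cost little RAM, while capturing whole task control blocks costs a lot more.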

I think the real power of DevAlert is in the tools which manage all of that cloud data. Instead of presenting hundreds of reports about the same defect, the tool digests this data into a single event.
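One plausible way to fold many reports into a single event is to group them by a fingerprint of where and how the failure happened. Percepio's actual classification scheme isn't described here, so the fingerprint (program counter plus error code) and every name in this C++ sketch (`FaultReport`, `Issue`, `IssueTracker`) are illustrative assumptions.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <unordered_map>

// Hypothetical report as it might arrive from a deployed device.
struct FaultReport {
    std::string device_id;
    std::uint32_t program_counter;  // where the fault was detected
    std::uint16_t error_code;       // why (application-defined)
};

// One distinct defect, no matter how many devices reported it.
struct Issue {
    std::uint32_t program_counter;
    std::uint16_t error_code;
    std::string first_device;  // first device seen with this fingerprint
    std::size_t occurrences;
};

class IssueTracker {
public:
    // Returns true if the report opened a new issue, false if it was merged
    // into an existing one.
    bool add(const FaultReport& r) {
        assert(!r.device_id.empty());  // every report must say where it came from
        const std::uint64_t key = fingerprint(r.program_counter, r.error_code);
        auto result = issues_.emplace(
            key, Issue{r.program_counter, r.error_code, r.device_id, 0});
        ++result.first->second.occurrences;
        return result.second;
    }

    std::size_t issue_count() const { return issues_.size(); }

    std::size_t occurrences(std::uint32_t pc, std::uint16_t code) const {
        auto it = issues_.find(fingerprint(pc, code));
        return it == issues_.end() ? 0 : it->second.occurrences;
    }

private:
    // Pack the 32-bit PC and 16-bit code into one 48-bit key, so distinct
    // (pc, code) pairs always give distinct keys.
    static std::uint64_t fingerprint(std::uint32_t pc, std::uint16_t code) {
        return (static_cast<std::uint64_t>(pc) << 16) | code;
    }

    std::unordered_map<std::uint64_t, Issue> issues_;
};
```

A real fingerprint would probably need more context, such as the firmware version or a short call stack, so that two different bugs that happen to fault at the same address aren't merged.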

When the system is chugging along doing its thing there’s no need for any Internet traffic.

DevAlert can be used for more than bug tracking, as any anomaly can send debugging info to the cloud. An MPU exception or watchdog timeout that the code automatically recovers from should fire a burst of data to the DevAlert cloud, as those events, while maybe not creating symptoms, indicate some problem is lurking in the system. If it were me, I’d log every exception the system can handle, as well as every event the code was smart enough to mitigate.

I asked Percepio if DevAlert opens the device to new hacker attacks. It turns out DevAlert only transmits to the cloud and never receives anything, so the tool doesn't increase the device’s attack surface.

DevAlert is like the assert macro on steroids. See this article for more empirical data about the efficacy of assert.

The bottom line: DevAlert pushes data about problems to the cloud. Importantly, it characterizes each event so the developers are not overwhelmed with multiple reports of identical bugs. And it has a mechanism to include trace data.

As one who has had to fly around the world carrying heavy debugging tools, I find the idea of getting the info you need delivered to your desk very appealing. More DevAlert info is here, and some videos of it in action are here.

| On Subscription-Based Tools |

A number of people wrote regarding my spiel about subscription-based tools in the last issue. Ian Cull wrote:

|

"In the embedded space we often have to provide support for years and even decades"

Our company moved from PCs to Macs nearly 15 years ago, running our Windows tools under Parallels on the Mac; when we move to a new Mac, we copy the virtual machine and it continues to run exactly as it did, no matter how the computer itself might have changed. Even now, those tools continue to work on the M1 processor, with Parallels running Windows on Arm and that environment emulating the Intel processor. I expect it's possible to do the same on PCs (running software virtually).

|

Paul Crawford has run into this problem:

|

I just read the article "On Subscription-Based Tools" and it is a serious problem. Recently I had trouble activating a Lattice CPLD compiler because it used a then-free but time-limited license that had expired and is no longer offered. This really p-ssed me off, as I did not need support or new features, just the ability to slightly modify an existing legacy design for a legacy chip!

For some of my older CAD tools I have been using VMs to run them, so I have some on Windows 2000, some on XP, some on Win7, etc., largely independent of the actual machine's hardware (generally a Linux host). You can also fudge the start date on the VM if you have date-code issues like w2k 2-digit storage, etc., but that will not get around tools that *require* access to DRM servers that have since been shut down.

Just as some music fans found out to their cost and turned to pirated sources that did not expire! |

Vlad Z emailed:

|

In the embedded space we often have to provide support for years and even decades; the rail industry routinely requires a guarantee of 30 years of support. How one accomplishes that baffles me. Even if you store a PC loaded with the tools in a closet, odds are the capacitors will go bad long before those three decades elapse.

In one company I worked for, they had exactly that, a PC with Win2000. In another, the solution was much more rational: a VM with Win2000 and the development environment. The VM's snapshot was backed up periodically.

In a company where I was responsible for the backups and releases, in addition to the development, we backed up the toolset along with the sources upon a major release.

I had to deal with the need to rebuild and fix old releases at various times in my career. I do not understand how people can even contemplate subscription services for the tools for embedded system development.

|

Then there's this from Glenn Hamblin:

|

I really don't like the subscription model for software. I am one of those weirdos who archive their projects along with the tools that were used to generate them, and subscriptions totally break this model. I had paid $1000 for Eagle and used it for a lot of projects, but since Autodesk bought it and subscriptionized it I've turned to KiCad. Of course, at work I use Altium.

|

Peter House's experience mirrors my own regarding getting funding for tools:

|

Subscription-based software tools are great for the tool developers and the vulture capitalists, and extremely bad for embedded developers and their customers. The developers have always had a cost-centric business model, which makes it difficult to earn the large amounts of money that attract investors. I believe this will always be true. |

Tom Van Sistine wrote:

|

I recently built a Linux Mint system for work, and most things eventually worked. But I have an old HP scope that uses 30-year-old BenchLink software that installs from 3 floppy disks. I was able to get it running again under XP Home in VirtualBox. Thank goodness it is not subscription-based! I can now capture scope shots once again, something I had not been able to do under Windows 10 for five years.

An ISO image of the computer you would otherwise store in a closet might be an option, again provided the software is not subscription-based and doesn't require a newer license server; even that server could be a VM.

|


| C Is NOT Obsolete |

In the last issue readers expressed their opinions that, in general, C is here to stay. Kip Leitner wrote:

|

I've used both C and C++ in embedded systems without fanfare or flamewars. Those who complain about "code bloat" with C++ are probably electrical engineers first and programmers second. Nearly all C++ compilers these days allow disabling C++ features (such as RTTI, polymorphism, exception handling and multiple template inclusion), effectively turning C++ into a language with more syntactic sugar, better memory management, default parameters, constructors/destructors and a few other things to make it more modular. Numerous studies have shown C++ run times within 2-3% of C on decent compilers, with a similar code footprint.

Certain classes of processors, problems (such as sockets and other dynamical systems programming needs), and RAM budgets call for 'C'. That said, there are also certain embedded system designs where the nature of the problem clearly benefits from a well-executed Object-Oriented design with an encapsulation strategy to hide implementation details. This abstraction is absolutely essential if the design is going to be ported to new hardware. C++ supports the semantics of abstraction better than C. Period.

This terminology isn't just gobbledygook. I'm currently the 8th programmer on a 6-year-old real-time embedded system with a large installed industrial base. I've been doing this kind of work for more than 30 years. The 'C' code uses various proprietary code for lists, queues, maps and FIFOs, which makes each programmer responsible for learning all the unique terminology of the previous tribe of programmers. Boost and the STL support all this out of the gate in C++. There are somewhere between 500 and 1,000 functions, and hundreds of global variables used as "flags" everywhere. There is no OS, and 5 different semi-independent threads of interrupt-driven execution interoperate precariously based on all these flags.

C++ alone would not have improved the poor SW architecture, but enforcing a severely restricted C++ style, with calls like

    My_Data.fn1();
    My_Data.fn2();
    My_Data.fn3();

would sure have fixed a lot of problems associated with holding in one's mind the complex mental model necessary to run the system.

|
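As an illustration of the restricted style Kip describes, the hypothetical C++ class below hides three former global "flags" behind member functions, so the only way to change them is through calls like `motor.request_start()`. The names are invented, and protection against concurrent access from interrupt handlers (a critical section or atomics) is deliberately omitted to keep the sketch short.

```cpp
#include <cassert>

// Hypothetical example: three former global "flags", now reachable only
// through member functions. Uses no RTTI, exceptions, templates or heap.
// NOTE: if these methods are called from interrupt handlers, each one would
// need protection (e.g. a critical section); that is omitted here.
class MotorControl {
public:
    // Ask for a start; ignored while a fault is latched.
    void request_start() {
        if (!fault_) {
            start_requested_ = true;
        }
    }

    // Latch a fault: stop the motor and cancel any pending start.
    void report_fault() {
        fault_ = true;
        running_ = false;
        start_requested_ = false;
    }

    void clear_fault() { fault_ = false; }

    // Called periodically (e.g. from the main loop) to act on requests.
    void service() {
        if (start_requested_ && !fault_) {
            running_ = true;
            start_requested_ = false;
        }
        assert(!(running_ && fault_));  // invariant: never running while faulted
    }

    bool is_running() const { return running_; }
    bool has_fault() const { return fault_; }

private:
    bool start_requested_ = false;
    bool running_ = false;
    bool fault_ = false;
};
```

The payoff is the one Kip points to: the rules about which flag may change when are written down in one place instead of being scattered across hundreds of functions.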

Mateusz Juźwiak sent this link to a tweet by Mark Russinovich:

In it, Mr. Russinovich advocates getting rid of C/C++ in favor of Rust. I disagree, but am hearing a lot of chatter about the language... though I see few real embedded projects being done in it.

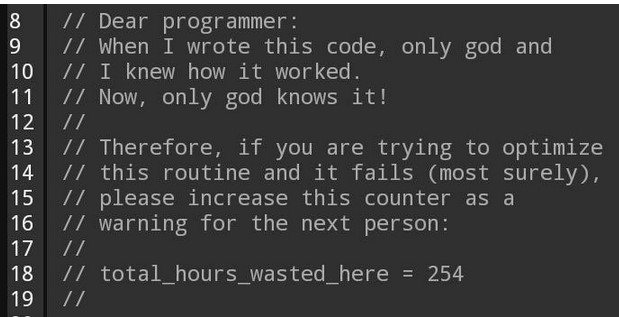

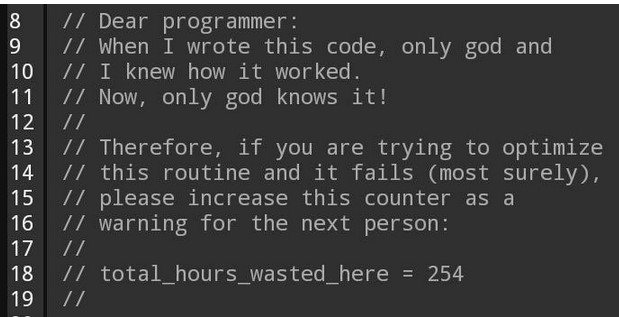

| Failure of the Week |

From Wayne Borglum:

Clyde Shappee sent this:

Have you submitted a Failure of the Week? I'm getting a ton of these and yours was added to the queue.

| Jobs! |

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter.

Please keep it to 100 words. There is no charge for a job ad.


| Joke For The Week |

These jokes are archived here.

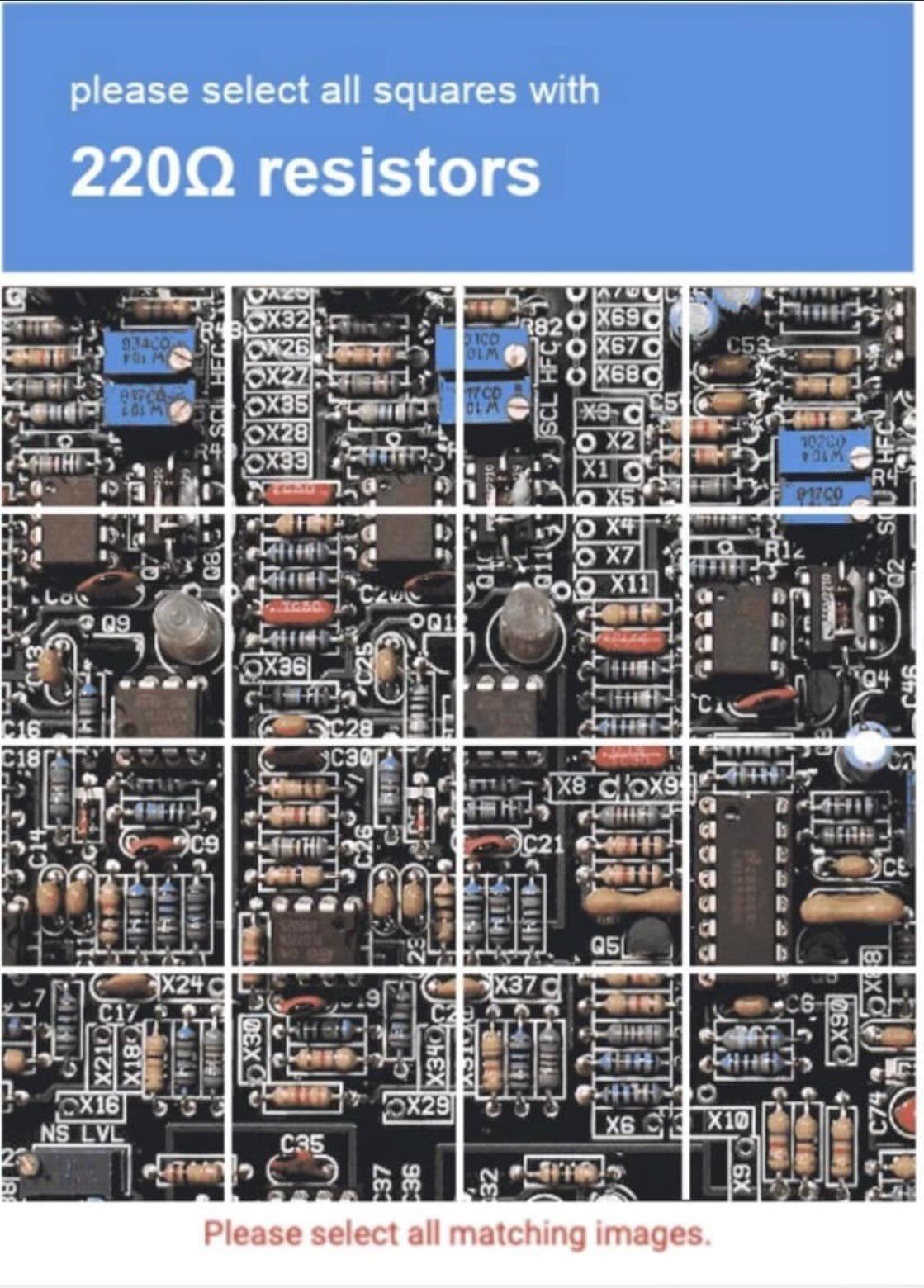

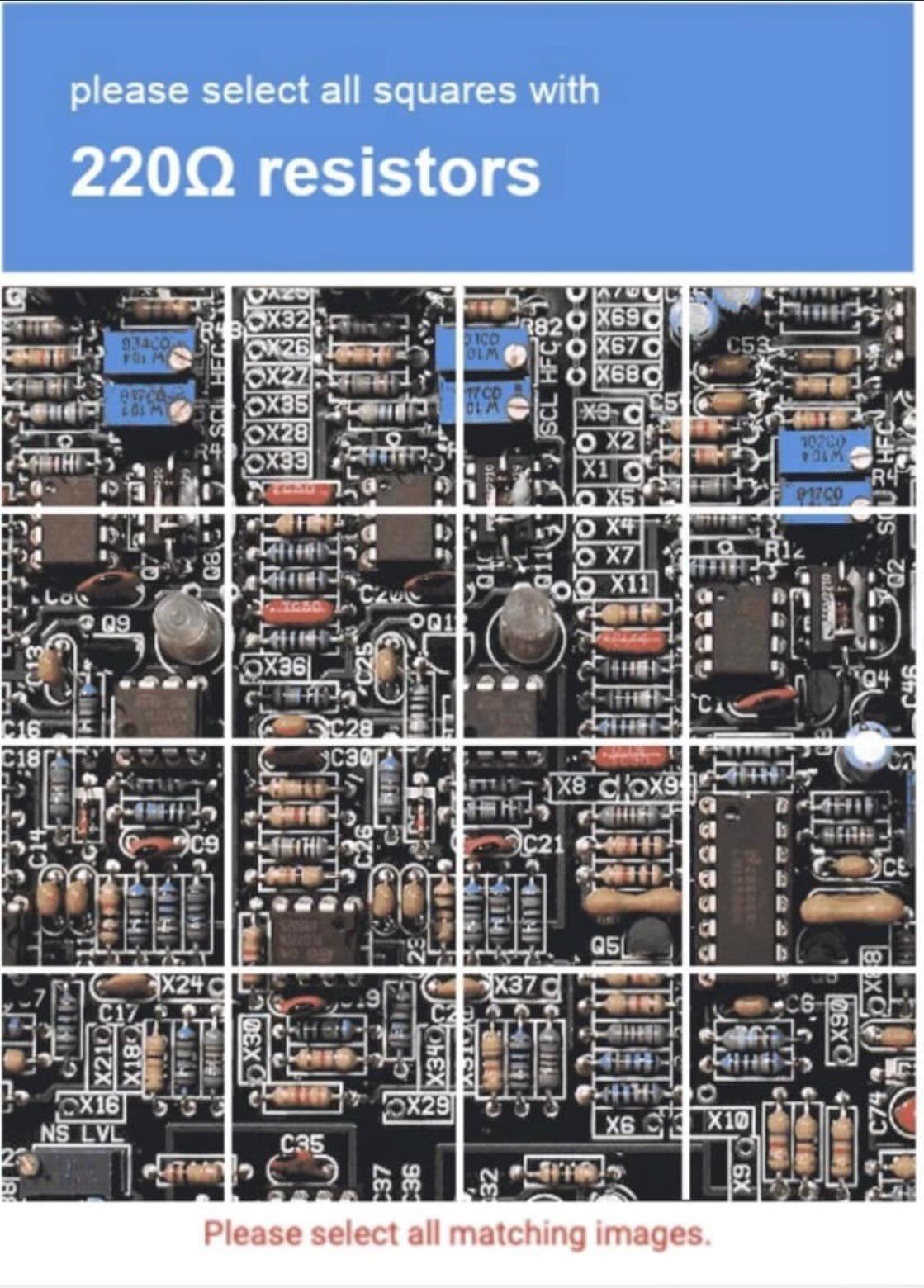

George Farmer sent this priceless captcha:


| About The Embedded Muse |

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com.

The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster.