|

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email. |


| Editor's Notes |

Tip for sending me email: My email filters are super aggressive and I no longer look at the spam mailbox. If you include the phrase "embedded muse" in the subject line your email will wend its weighty way to me. |

| Quotes and Thoughts |

Edsger Dijkstra's magnificent observation: "If you want more effective programmers, you will discover that they should not waste their time debugging; they should not introduce the bugs to start with." |

| Tools and Tips |

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

The folks at Memfault discuss Trice, an open source library that gives embedded developers printf-like debug capabilities with practically no overhead in memory or CPU usage.

Another printf-like debug capability is Eclipse's dynamic printf, which offloads the normal printf-heaviness onto the IDE. It's a nice concept and there's a good explanation here. |

| Freebies and Discounts |

This month's giveaway is a 0-30 volt 5A lab power supply.

Enter via this link. |

| Naming Conventions |

The Linux printk function has a number of logging levels, which include KERN_EMERG, KERN_ERR and others.

What, exactly, does EMERG mean? Emergency? Emerging? Emergent? The last sounds like part of the title of a horror movie.

Or ERR – perhaps that's error, but it could be to err, or erroneous. Maybe even erogenous (definitely my preference). Combine that with KERN (definition: "a part of the face of a type projecting beyond the body or shank") and the puerile possibilities are positively provocative.

Why is every index variable named i, j or k? Or, for nested loops, ii. Or my personal favorite, iii? The reason is that 60 years ago, when Fortran came out, variables starting with the letters i through n were, by default, integers. Few remember this, but most of us still mindlessly practice it.

It's interesting that some believe long names yield self-documenting code yet so many of us abbreviate to the point of obfuscation.

The code has to do two things: work, and express its intent to a future version of yourself or to some poor slob faced with maintaining it all years from now after you're long gone. If it fails to do either it's junk. I've met plenty of developers who say they really don't care what happens after they move on to another job or retire. But if we are professionals we must act professionally and do good work for the sake of doing good work.

Clarity is our goal. Names are a critical part of writing clear code. It's a good idea to type a few additional characters when they are needed to remove any chance of confusion.

Pack the maximum amount of information you can into a name. I had the dreary duty of digging through some code where no variable name exceeded three characters. They all started with "m". The mess was essentially unmaintainable, which seemed to be one of the author's primary goals.

We've known how to name things for 250 years. Carl Linnaeus taught scientists to start with the general and work towards the specific. Kingdom, Phylum, Class, Order, Family, Genus, Species. Since in the West we read left-to-right, seeing the Kingdom first gets us in the general arena, and as our eyes scan rightward more specificity ensues. So read_timer is a really lousy name. Better: timer_read. timer_write. timer_initialize. A real system probably has multiple timers, so use timer0_read, timer0_write. Or even better, timer_tick_read, timer_tick_write.

The Linnaean taxonomy has worked well for centuries, so why not use a proven approach?
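Here is a minimal sketch of general-to-specific naming in C. The module is hypothetical: the counter is a plain variable standing in for a hardware register, and the function bodies are placeholders. Only the naming pattern matters.

```c
#include <stdint.h>

/* Hypothetical tick-timer module. Every public name starts with the
 * general (timer), narrows to the instance (tick) and ends with the
 * action, so related functions sort and read together. */

static uint32_t timer_tick_count;   /* stand-in for a hardware counter */

void timer_tick_initialize(void)
{
    timer_tick_count = 0u;
}

void timer_tick_write(uint32_t ticks)
{
    timer_tick_count = ticks;
}

uint32_t timer_tick_read(void)
{
    return timer_tick_count;
}
```

A side benefit: an alphabetized symbol list or an editor's autocomplete now groups everything that touches the tick timer in one place.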

Avoid weak and non-specific verbs like "handle," "process" and "update." I have no idea what "ADC_Handle()" means. "ADC_Kalman_Filter()" conveys much more information.

I do think that short toss-away names are sometimes fine. A tight for loop can benefit from a single-letter index variable. But if the loop is more than a handful of lines of code, use a more expressive name.

Don't use acronyms and abbreviations. In "Some Studies of Word Abbreviation Behavior" (Journal of Experimental Psychology, 98(2):350-361), authors Hodge and Pennington ran experiments with abbreviations and found that a third of the abbreviations that were obvious to those doing the word-shortening were inscrutable to others. Thus, abbreviating is a form of encryption, which runs counter to our goal of clarity.

There are two exceptions to this rule. Industry-standard acronyms, like "USB", are fine. Also acceptable are any abbreviations or acronyms defined in a library somewhere - perhaps in a header file:

    /* Abbreviation Table
     * tot  == Total
     * calc == Calculation
     * val  == Value
     * mps  == Meters per second
     * pos  == Position
     */

Does a name have a physical parameter associated with it? If so, append the units. What does the variable "velocity" mean? Is it in feet/second, meters/second... or furlongs per fortnight? Much better is "velocity_mps", where mps was defined as meters/second in the header file.

One oh-so-common mistake is to track time in a variable with a name like "time." What does that mean? Is it in microseconds, milliseconds or clock ticks? Add a suffix to remove any possibility of confusion.

The Mars Climate Orbiter was lost due to a units mix-up. We can, and must, learn from that $320 million mistake.
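As a small sketch of unit suffixes at work, consider the two helpers below. The function names and the extra suffixes (ms, mm, hz) are assumptions made for illustration, and would need entries in the abbreviation table alongside mps.

```c
#include <stdint.h>

/* Suffixes assumed here (hypothetical additions to the abbreviation table):
 *   mps == Meters per second
 *   ms  == Milliseconds
 *   mm  == Millimeters
 *   hz  == Ticks per second
 */

/* (meters/second) * milliseconds = millimeters, so no scaling is needed.
 * The product is not checked for overflow in this sketch. */
uint32_t distance_traveled_mm(uint32_t velocity_mps, uint32_t time_elapsed_ms)
{
    return velocity_mps * time_elapsed_ms;
}

/* Convert milliseconds to timer ticks; the 64-bit intermediate avoids
 * overflow in the multiplication. Fractional ticks are truncated. */
uint32_t time_ms_to_ticks(uint32_t time_ms, uint32_t tick_rate_hz)
{
    return (uint32_t)(((uint64_t)time_ms * tick_rate_hz) / 1000u);
}
```

With the units in the names, a call such as `distance_traveled_mm(velocity_mps, timeout_ticks)` looks wrong at a glance, which is the whole point.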

Do you have naming rules? What are they? |

| Testing For Unexpected Errors - Part 2 |

In the last issue I wrote about testing for unexpected errors. Many readers replied.

Mat Bennion wrote:

|

"A reader posed an interesting question: in a safety-critical system how do you test for unexpected errors?"

"Anticipating and handling errors is one of the most difficult problems we face. What is your approach?"

In practical terms, as you point out, there should be no "unexpected" errors. So in aerospace we expect random bit flips and tin whiskers. You can systematically walk through your design asking "what if?" or "early / late / missing / wrong" for each signal or bus. Then we test the handlers the same as anything else, and if that means carefully shorting a memory line to check a "walking 1s" test or bombarding the unit with neutrons, so be it. Many exceptions can be tested in a debugger, e.g. on an ARM, just hit "pause" and tweak the program counter with a bad address to test your handler. As you point out, this only tests your software plus one trigger path, so you're still relying on ARM to have done a good job. If you don't want to rely on ARM, you can develop your own core 😊. Or ask manufacturers for their internal test data, which they can summarise under NDA.

You also mentioned booting: USB-driven relays are very cheap and can be used to power cycle a unit from a Python script – leave this running over the weekend and it's a great way to spot rare problems. |
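For readers who haven't met the "walking 1s" test Mat mentions, here is a minimal sketch of one common form of it, which exercises the data lines at a single address. The function name is my own invention, and the sketch assumes an 8-bit data bus.

```c
#include <stdint.h>

/* Walk a single 1 bit across an 8-bit data bus at one address.
 * Returns 0 if every pattern reads back correctly, otherwise the first
 * pattern that failed. The volatile qualifier forces a real write and a
 * real read-back for each pattern. */
uint8_t memory_data_bus_walking_ones_test(volatile uint8_t *address)
{
    uint8_t pattern;

    for (pattern = 1u; pattern != 0u; pattern = (uint8_t)(pattern << 1)) {
        *address = pattern;
        if (*address != pattern) {
            return pattern;   /* this data line is stuck or shorted */
        }
    }
    return 0u;
}
```

Shorting a data line, as Mat describes, should make this return the pattern for the affected bit. That is how you prove the test and its handler actually work.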

Mateusz Juzwiak had some good insights:

|

I've been working as a programmer and system designer for safety-critical systems for a few years now. My main focus is the mobile machinery industry, and most of our programs run on already safety-certified controllers; we use so-called Limited Variability Languages, like Structured Text or ladder diagrams. This allows us to focus on the application itself and almost completely forget about MCU-related issues like memory CRC checks, internal MCU watchdogs, brown-out detection, etc. One might say that it's peanuts compared to safety-related embedded systems. However, I think it allows us to focus better on good software design and on the procedural aspects of system development.

First of all - you answered a question about "unexpected error". There are several safety-related standards that mention error (failure) types, and none of them describes 'unexpected error'.

We have to deal with three types of failures:

- Random hardware failures, for example: sensor broken, relay stuck-at-closed/open, digital input stuck-at-value, clock failure etc.

- Systematic failures: bug in the code, incorrectly performed tests, incorrect commissioning

- Common cause failures: mostly related to environmental issues, like high temperature or flooding. I will not cover this here.

It's really important to understand the difference between the hardware failures and systematic failures, because there are completely different measures to counteract them.

For dealing with hardware failures, the generic process is as follows: define the failure modes of each of your components (e.g., a relay can be stuck open or stuck closed, a sensor can give an out-of-range value), determine the effect on your system, and define how you deal with that failure. You may use detection features (e.g., measuring the voltage after the relay to check that it's operating correctly, or measuring actuator current), add redundant hardware to duplicate the needed function, or just ignore the failure (that's also an option!). It's up to the system designer how to deal with those failures, but the decision should be defined and written down. This approach is called Failure Mode and Effect Analysis (FMEA). It comes in different forms (DFMEA, FMEDA, etc.), but the general idea is the same. Then, of course, some actions must be implemented in the software and hardware, like the correct reaction to an out-of-range sensor value.

This looks very complicated. However, performing such an analysis even for a small embedded system is possible and can be done even by a single developer. Just open Excel, write down the things you expect to fail or foresee could fail, and see which of them can be dangerous for your system or process. Then implement protective features.
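As an illustration of the relay detection measure described above, the sketch below compares the commanded relay state with the voltage measured after the contact. All names and the threshold are hypothetical and not taken from any real system; the threshold assumes a 12 V rail.

```c
#include <stdbool.h>
#include <stdint.h>

typedef enum {
    RELAY_FAULT_NONE,
    RELAY_FAULT_STUCK_OPEN,    /* commanded closed, but no voltage downstream */
    RELAY_FAULT_STUCK_CLOSED   /* commanded open, but voltage still present   */
} relay_fault_t;

/* Assumed threshold for "voltage present" on a 12 V rail. */
#define RELAY_VOLTAGE_PRESENT_THRESHOLD_MV 9000u

/* Compare what was commanded with what was measured after the contact. */
relay_fault_t relay_diagnose(bool relay_commanded_closed,
                             uint32_t relay_output_voltage_mv)
{
    bool voltage_present =
        relay_output_voltage_mv >= RELAY_VOLTAGE_PRESENT_THRESHOLD_MV;

    if (relay_commanded_closed && !voltage_present) {
        return RELAY_FAULT_STUCK_OPEN;
    }
    if (!relay_commanded_closed && voltage_present) {
        return RELAY_FAULT_STUCK_CLOSED;
    }
    return RELAY_FAULT_NONE;
}
```

Each enum value corresponds to one failure mode in the FMEA spreadsheet, so the reaction to each can be written down next to it. A real implementation would also allow for relay switching time before sampling the voltage.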

In this way it's possible to eliminate theoretically unexpected failures like that in the B737 MAX, where an invalid sensor value caused a disaster. But what if the reaction to a foreseen fault was defined incorrectly? What if someone didn't assume that two AOA sensors can fail at the same time?

This is an example of systematic failure.

So there was an error in the design or development process that was not caught, or no process was defined at all. Different safety standards handle systematic failures in different ways, but there is one universal answer for avoiding systematic failures: define the process, execute the process and improve your process. It applies to management activities, the specification phase and the code development process alike.

I'm providing a list of what should be defined for each serious project, and some questions that should be seriously answered before doing any programming. My recommendation is to write down the answers together with your team/boss, so that later in the project, when you have lost perspective, you can check them and get back on track.

- Specification/requirements documents - How do we create it, who is responsible for it? How do we introduce changes in the specification?

- Specification review process - do we have it or do we accept what we got?

- System/software architecture - who is responsible for it? Do we create it, or do we just write code with the software design in our heads? Does the architecture address all important system- and software-level issues, like: interfaces between software modules, the error reporting system, failure handling and reaction to failures, reaction to out-of-range values, adding new modules to the system, communication with the external world, handling configuration and passing configuration data to system modules, additional safety features like cross-checking of software results, and stack protection techniques (e.g., a canary)? About hardware: do we need an additional external watchdog, brown-out detection, etc.?

- Architecture review process - who and when, or do we trust our architect/tech lead?

- Coding standard, including the features of your programming language and system that you are and are not allowed to use, and the dos and don'ts for this specific project

- Code review process:

  - Who reviews the code, and when?

  - Code review templates, so we can be sure that everybody does the review in the same way. For bigger teams or more serious projects, code review protocols may be introduced to give formal confirmation that the code review was done according to requirements.

- Test plan:

  - What are we testing and how, and what not?

  - What are the standards for unit tests? Black box or white box unit tests?

  - Integration tests, including a test specification - do we have them?

  - Test review process - do we review tests before running them? If yes, there should be a code review process/templates defined.

- Release plan:

  - Do we release a single version of the software, or must our systems support multiple customers? How do we deal with it?

  - Who is responsible for releasing a new version of the software? How do we do that?

Note that all these things can be applied to the now-popular "Scrum" or other iterative development techniques, as well as to the traditional V model.

It might look strange that the answer to the question "how to expect unexpected failures?" is about preparing documentation and keeping to it. But this is how safety systems are developed and implemented in reality. That's also how safety standards treat systematic failures.

And one last word: for such development it's usually not necessary to have a professional safety-trained development team. If you want to improve your systems, it's fine to introduce just some of the mentioned features rather than all of them at once. |

Wouter van Ooijen contributed:

|

"A reader posed an interesting question: in a safety-critical system how do you test for unexpected errors?"

IMO there are two categories:

1- things that don't affect the computation system integrity

Here the basic approach should be to specify (and hence test) the required behaviour. All behaviour, not just the anticipated input values! This should eliminate all special cases, because they are not special. The sensor is disconnected or reports an out-of-bounds or otherwise unreasonable value? Specify what the computation system should do. This is NOT something to be left to the sensor driver to handle 'sensibly': what is sensible is a systems issue. Depending on the situation, clamping within the expected range, using the last value, or halting the system could be the correct response, but in another situation it might be exactly the wrong response. So don't leave this to the unit/module programmer to handle 'sensibly': specify, and test.

This is where an embedded system spec (especially one for a critical system) differs from a run-of-the-mill software app. There are no special cases; everything (including all weird errors) should be part of the normal operation. (This is one of the reasons I find exception mechanisms like C++ exceptions much less useful in embedded software than in desktop software. There are other reasons, like heap use.)
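One way to picture this is a sketch in which the out-of-range response is part of each sensor's specification, not a choice made inside the driver. All type and function names below are hypothetical illustrations, not part of any real system.

```c
#include <stdbool.h>
#include <stdint.h>

/* The three responses named above; which one applies is specified
 * per sensor at the system level. */
typedef enum {
    SENSOR_POLICY_CLAMP,       /* limit to the valid range          */
    SENSOR_POLICY_HOLD_LAST,   /* reuse the last in-range value     */
    SENSOR_POLICY_HALT         /* tell the caller to stop the system */
} sensor_policy_t;

typedef struct {
    int32_t         value_minimum;
    int32_t         value_maximum;
    sensor_policy_t out_of_range_policy;
    int32_t         value_last_valid;
} sensor_spec_t;

/* Returns true and writes the value to use, or returns false when the
 * specification says the system must halt. */
bool sensor_apply_spec(sensor_spec_t *spec, int32_t value_raw, int32_t *value_out)
{
    if (value_raw >= spec->value_minimum && value_raw <= spec->value_maximum) {
        spec->value_last_valid = value_raw;
        *value_out = value_raw;
        return true;
    }

    switch (spec->out_of_range_policy) {
    case SENSOR_POLICY_CLAMP:
        *value_out = (value_raw < spec->value_minimum) ? spec->value_minimum
                                                       : spec->value_maximum;
        return true;
    case SENSOR_POLICY_HOLD_LAST:
        *value_out = spec->value_last_valid;
        return true;
    case SENSOR_POLICY_HALT:
    default:
        return false;
    }
}
```

Because the policy is data, every branch is ordinary specified behaviour with an obvious test case.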

2- things that do affect the computation system integrity

How you can deal with these situations depends on how much of your computation system you can still trust. In situations I have met the best option was often to distrust everything and just sit still and be quiet, so other parts of the hardware can detect and handle the situation. This requires that

- those parts can detect that something has gone south from the absence of action from the computation system (all activities should require periodic activation, not just one 'enable' message)

- those parts can do something to mitigate the danger (remove gas, cut the ignition cord, apply brakes, shut the reactor down, etc.)

In other words: design the other components as passive safe (must go to a safe situation when the computation system is silent)
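A minimal sketch of the periodic-activation idea, seen from the side of the component that must fail safe, might look like this. The names and the 100 ms deadline are assumptions chosen for illustration.

```c
#include <stdbool.h>
#include <stdint.h>

/* Assumed deadline: the output stays enabled only while keep-alive
 * messages arrive at least this often. */
#define ACTUATOR_KEEPALIVE_TIMEOUT_MS 100u

typedef struct {
    uint32_t keepalive_last_time_ms;
    bool     output_enabled;
} actuator_guard_t;

/* Called whenever a valid keep-alive arrives from the computation system. */
void actuator_guard_keepalive(actuator_guard_t *guard, uint32_t time_now_ms)
{
    guard->keepalive_last_time_ms = time_now_ms;
    guard->output_enabled = true;
}

/* Called periodically from the guard's own time base. Unsigned
 * subtraction keeps the comparison correct across counter wraparound. */
void actuator_guard_poll(actuator_guard_t *guard, uint32_t time_now_ms)
{
    if ((time_now_ms - guard->keepalive_last_time_ms) > ACTUATOR_KEEPALIVE_TIMEOUT_MS) {
        guard->output_enabled = false;   /* silence means: go to the safe state */
    }
}
```

In this sketch a later keep-alive re-enables the output. A real design might instead latch the safe state until an explicit reset, and that is again a system-level decision to specify.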

There are some additional issues that can be handled ad-hoc, like

- ECC memory works best when the memory contents are periodically refreshed. If the HW doesn't do this autonomously, do it in SW when there is nothing better to do

- some HW degrades over time. Checking it periodically or at start-up might detect a problem before it becomes an issue.

- If you have RAM surrounded by unimplemented memory regions that can trap on read/write, arrange your stack and/or heap (yuck! no heap if you can avoid it!) to grow outwards toward that unimplemented memory, NOT inwards into each other.

If this still doesn't make you feel safe, give your system to a bunch of students for monkey-testing ;) |

Stephan Grünfelder likes static analysis:

|

Let me introduce myself as trainer and book author for testing embedded software in German speaking countries. I am replying to a question in your latest newsletter: "But how do you simulate that error at every possible divide in the code?"

That is what tools like Polyspace and Astrée are made for. With the help of abstract interpretation they can prove the absence of divisions by zero, overflows, and many other "unexpected errors". This is something quite different from testing: you can never use testing as proof that the code under test has no bugs of a certain kind. Analysis can do that to a certain extent.

The final question in your section on Testing of Unexpected Errors is "Anticipating and handling errors is one of the most difficult problems we face. What is your approach?"

I have worked for a German automotive supplier and learned a lesson from a US customer: "test your FMEA". The customer asked us to check the plausibility of our FMEA by executing test cases that demonstrate that the reasoning and the derivations truly hold in the field. This idea somehow made it into the relevant standard, ISO 26262, which requires combining analysis with test. Neither the analysis nor the test alone is enough to verify a safety-critical device. |

|

| Yet More on Design for Debugging |

In the last issue readers had comments about designing for debugging, which resulted in more interesting discussion.

J.G.Harston has some specific ideas on LEDs:

|

> 3. LEDs. Please please HW guys - give me at least one LED. You can find the space. When other stuff doesn't work or you are in the bootloader with no debug - a simple LED can make such a difference ...

I would follow that up with: make your LEDs *distinct*.

All too often equipment has multiple undifferentiated LEDs that assume you know what each LED means and have the eyesight of a cat. A year ago I had my head stuck through a ceiling tile fiddling with a router, speaking to the ISP on the phone.

Me: There's an LED lit.

Them: What colour is it?

Me: LED coloured.

Them: Which LED is lit?

Me: One of them.

FFS, do *NOT* indicate states with a single indicator that varies between sort-of-off-orangy-red and sort-of-off-reddy-orange. When you're prodding unknown equipment with the all-too-often non-existent labels, the only observable states are "on" and "off".

For example, the cable box at the other side of the room. One LED means the power is on and it's not operating. Two LEDs means the power is on, and it's actively sending a signal to the TV. From here I can't tell what colour they are, I don't care what colour they are, and I don't need to know what colour they are; they're either on or off, and there's either zero, one, or two of them on. I don't need to know which LED is on when only one is on, as there is no possible case for it to be anything other than "power on, no signal".

The opposite example: a few weeks ago my boiler stopped working. One of the LEDs was flashing, so I looked in the manual. The manual listed them X: this, Y: this, Z: this. After several minutes of frustrated prodding trying to rectify the Z error state I realised they were mounted Z,Y,X on the actual panel. |

Kevin Banks suggests logging to FRAM:

|

Having some sort of "persistent circular log" in your embedded devices is a common practice, but a few years ago I discovered someplace new to store them in - Ferroelectric Random Access Memory (FRAM). These chips are non-volatile (and don't require a battery), but they can be written to a lot more than typical FLASH memories without wearing out.

Two vendors of these that I am aware of are Fujitsu and Cypress.

As one quick example datasheet link - https://www.fujitsu.com/uk/Images/MB85RS1MT.pdf

These days when new boards are being designed, I lobby for one of these to be added to the design, so I can pull an "FRAM log" off the unit after the fact, and look for clues as to what recently happened. |
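A persistent circular log of the kind Kevin describes can be sketched in a few lines of C. Everything here is an assumption made for illustration: the record layout, the names, and a plain static struct standing in for the FRAM chip. On real hardware the struct accesses would become reads and writes over the FRAM's bus.

```c
#include <stdint.h>

#define FRAM_LOG_ENTRY_COUNT 8u   /* assumed log depth */

typedef struct {
    uint32_t timestamp_ms;
    uint16_t event_code;
    uint16_t event_data;
} fram_log_entry_t;

typedef struct {
    uint32_t         index_next;     /* slot the next entry goes into */
    uint32_t         count_written;  /* lifetime number of entries    */
    fram_log_entry_t entries[FRAM_LOG_ENTRY_COUNT];
} fram_log_t;

/* Stand-in for the FRAM device; zero-initialized like a blank log. */
static fram_log_t fram_log_storage;

/* Append an entry, overwriting the oldest once the log is full. */
void fram_log_append(uint32_t timestamp_ms, uint16_t event_code, uint16_t event_data)
{
    fram_log_entry_t entry = { timestamp_ms, event_code, event_data };

    fram_log_storage.entries[fram_log_storage.index_next] = entry;
    fram_log_storage.index_next =
        (fram_log_storage.index_next + 1u) % FRAM_LOG_ENTRY_COUNT;
    fram_log_storage.count_written++;
}

/* Read an entry by age (0 is the newest). Returns 0 on success or -1 if
 * no entry that old is stored. */
int fram_log_read_recent(uint32_t age, fram_log_entry_t *entry_out)
{
    uint32_t count_stored =
        (fram_log_storage.count_written < FRAM_LOG_ENTRY_COUNT)
            ? fram_log_storage.count_written
            : FRAM_LOG_ENTRY_COUNT;

    if (age >= count_stored) {
        return -1;
    }
    uint32_t index =
        (fram_log_storage.index_next + FRAM_LOG_ENTRY_COUNT - 1u - age)
        % FRAM_LOG_ENTRY_COUNT;
    *entry_out = fram_log_storage.entries[index];
    return 0;
}
```

The sketch ignores one thing a real design must handle: power can fail between writing an entry and updating the index, so production code usually adds a sequence number or checksum per entry.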

Bob Baylor addresses FPGAs:

|

Spare outputs, with or without LEDs, are indeed very useful. In a complicated FPGA design, we include a debug output port (at least 4 bits wide) in nearly every Verilog module of any serious complexity, along with a config (input) register that controls a multiplexer of key internal signals (there may be dozens) to the debug output port. This continues throughout the module hierarchy up to the top, where the LED (or just a header) is connected. Important: The debug code and muxes remain in the design even in production for two reasons:

1) you might need to debug it again

2) eliminating the debug bits will require a rebuild and the tools may change something that only worked with the debug signals present.

Yes, I know, #2 is kind of scary and crazy but why take the chance? Without the debug bits, you can't observe the internals of the design. BTW, we're talking about a design that lives in a 676 ball BGA, so what's the marginal cost of a few pins? |

A reader who wishes to be anonymous writes:

|

In the latest Muse, some readers commented on debug features they find useful. As an engineer who supports product users, I'd like to share my perspective.

My employer sells industrial controls and many of our devices have LEDs to provide quick user feedback if a device is running, shut down, or has an active alarm; this is very common in our industry. One specific product has a red LED and green LED on the PCB for internal debug. During normal operation, they flash with a heartbeat and status code... and it drives my customers nuts. At least once a month (more frequently when the product debuted), I get a call from a customer asking if the flashing red LED means the product is broken and how can they reset it.

Engineers and designers should keep in mind how their products will look to users. Today's debug feature is tomorrow's nuisance or security vulnerability (e.g., see this UART hack from DefCon: https://youtu.be/h5PRvBpLuJs?t=330). Debug features are very useful (especially to field engineers), but they should be removed, disabled, locked, or otherwise rendered safe in the shipping product. |

Phil Matthews longs even for available GPIOs:

|

LEDs! Luxury!

I plead with the PCB layout guy to bring unused I/O pins out to pads, so that I can at least put a scope probe on or even solder on my own LED. |

Matthew Eshleman wrote an interesting article about this:

|

| Failure of the Week |

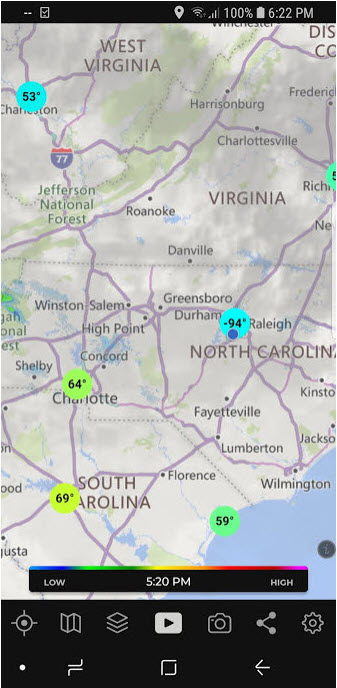

From Jack Morrison, who writes: "The icon shows the battery completely empty, the small popup says '255% available' (!), while the double-click popup window says '100% charged'. Then after a while the small popup says 'no battery detected' - yet I can unplug the power cord and the computer keeps going."

Steve Lund sent this bit of mischievousness:

Have you submitted a Failure of the Week? I'm getting a ton of these and yours was added to the queue. |

| This Week's Cool Product |

Arthur Conan Doyle serialized his Sherlock Holmes stories in the newspapers of the day. Stephen King serialized The Green Mile with new chapters published monthly.

Similarly, I've been getting an email every few weeks of what turns out to be chapters of a short book by Ralph Moore.

It's now available for free (registration required) here. In the last Muse I wrote about using MPUs to build reliable products. This book, Achieving Device Security, is about using MPU-aware RTOSes to build secure products. This is an important topic. I'm not sure that it's possible to build a non-trivial device that is secure without employing a memory protection unit or memory management unit to get hardware enforcement of security rules.

Recommended.

Note: This section is about something I personally find cool, interesting or important and want to pass along to readers. It is not influenced by vendors. |

| Jobs! |

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter.

Please keep it to 100 words. There is no charge for a job ad.

|

| Joke For The Week |

These jokes are archived here.

From Clive Souter:

I went to the Fibonacci conference. It was great! It was as good as the last two combined. |

| About The Embedded Muse |

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com.

The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster.

can take now to improve firmware quality and decrease development time. |