|

||||

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email. |

||||

| Contents | ||||

| Editor's Notes | ||||

|

Tip for sending me email: My email filters are super aggressive. If you include the phrase "embedded muse" in the subject line your email will wend its weighty way to me. Jack's latest blog: One of my favorite books: A Canticle for Leibowitz. Though 60 years old its focus on troubled times and moral issues is still relevant. |

||||

| Quotes and Thoughts | ||||

Hardware works best when it matters the least. |

||||

| Tools and Tips | ||||

|

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past. Tom Reaney likes vbuilder:

Tom included a sample flowchart which is much too big to include here, but is awfully impressive. Responding to Brian Cuthie's thoughts about watchdogs, Til Stork writes:

|

||||

| Freebies and Discounts | ||||

This month's giveaway is a BattLab-One current profiler from Bluebird-Labs. A review of this unit follows.

Enter via this link. |

||||

| The BattLab-One Current Profiler | ||||

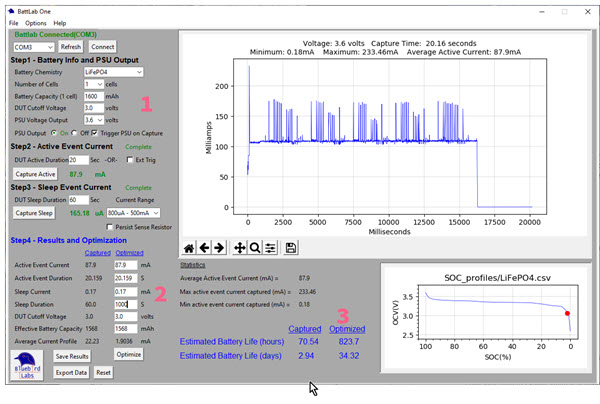

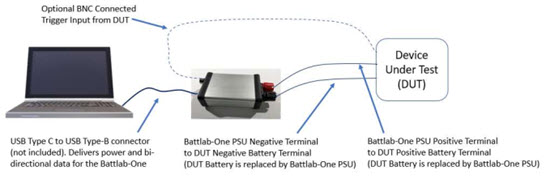

Battery-operated systems are the rage today, now that pretty amazing MCUs only sip at a stream of electrons. I've written extensively about optimizing these systems and have reviewed a number of tools to monitor power consumption. A new entrant is the BattLab-One. Bluebird-Labs sent me one to play with, and it is this month's giveaway.

The BattLab-One comes in a small but robust aluminum case. Connected to a PC via USB, it replaces the battery of a device under test (DUT). A very intuitive PC application drives the unit, though it is slow to load.

The user interface. I've added the red numbers to facilitate this discussion.

First, some specs:

As mentioned, the BattLab-One replaces the battery in your DUT:

The UI image above shows the application after I've captured some DUT data in both active and sleep modes. I added the red number "1", which marks the area where you set the battery parameters. I've selected a LiFePO4 cell; the application automatically populates the other entries like battery capacity and voltage, but these fields can be edited as needed.

I collected 20 seconds' worth of data, which for the DUT I used covers a wake and portions of a sleep period. The graph shows active peaks of about 175 mA and, at the end, a big drop as the DUT goes to sleep. Average active current is displayed (87.9 mA) and average sleep (165.18 µA).

There are two ways to capture data: press the "Capture Active" or "Capture Sleep" buttons, or configure your DUT to assert a triggering signal that the BattLab-One uses to start acquisition. The latter may mean instrumenting the code to drive the trigger. Or, one can tell the tool to acquire for a long time and get a representative overall view of the DUT's operation.

Sleep current is shown as an average value only (it shows up in the graph but is so much less than the active value that it's hard to discern much quantitative data).

But this is just the start. The BattLab-One's raison d'être is to play with different scenarios to optimize a DUT's energy consumption. At label "2" in the picture there are a number of parameters you can noodle with. Change some, and at label "3" the tallies will update. Perhaps a longer sleep time might be an option. In the picture I changed that from 60s to 1000s, and the "captured" battery life goes from 2.94 to 34.32 days. And that's pretty cool.

There are some nice features that the manual doesn't address well. One is the "Persist Sense Resistor" (an undescriptive name) option. If you're collecting sleep data you'll probably select the 10 µA to 800 µA range. That uses a 100 ohm sense resistor, which drops 0.1 V for every mA; if the DUT were to come out of sleep mode it's possible the burden voltage across the resistor would inhibit its operation. If "Persist Sense Resistor" is unchecked, the tool automatically jumps back to the high-current (800 µA to 500 mA) range. Checked, that 100 ohm resistor stays in play.

The curve at the bottom right shows the characteristics of the selected battery. The red dot indicates the point where it is effectively dead, but that can be changed in the parameters at label "1".

I do wish there were three, instead of two, current ranges. The low range is 10 µA to 800 µA, which is probably fine for most designs, but ultra-low power systems sometimes have sleep currents of under a µA. And it would be nice to see a graph of the sleep-mode current, though that isn't really necessary when using the tool in its usual "what-if" mode (item "2" in the picture) to see how to optimize a system's energy consumption. Bluebird-Labs tells me they will be adding the ability to capture and graph the sleep data.

Jetperch's Joulescope is my preferred energy monitor, but it's nearly 10x the cost of the BattLab-One, and it doesn't support "what-if" optimization doodling. At $99, or $79 as a bare board, the BattLab-One is a cost-effective tool for measuring and modeling a system's ampish behavior. Recommended. |
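The "what-if" tallies discussed in the review boil down to a duty-cycle calculation, which can be sketched in a few lines of Python. This is not the BattLab-One's actual algorithm, which the review doesn't describe. The 87.9 mA and 165.18 µA averages come from the review; the battery capacity and the active time per cycle are assumptions picked for illustration, so the printed lifetimes will not match the 2.94 and 34.32 day figures quoted above.

```python
def average_current_ma(active_ma, active_s, sleep_ma, sleep_s):
    """Time-weighted average current over one wake/sleep cycle, in mA."""
    total_s = active_s + sleep_s
    if total_s <= 0:
        raise ValueError("cycle must have a positive duration")
    return (active_ma * active_s + sleep_ma * sleep_s) / total_s


def battery_life_days(capacity_mah, avg_ma):
    """Idealized runtime in days: usable capacity divided by average draw."""
    return capacity_mah / avg_ma / 24.0


# Average currents quoted in the review.
ACTIVE_MA = 87.9
SLEEP_MA = 0.16518  # 165.18 uA

# Assumptions, NOT from the review: usable capacity and active time per cycle.
CAPACITY_MAH = 1500.0
ACTIVE_S = 20.0

for sleep_s in (60.0, 1000.0):
    avg = average_current_ma(ACTIVE_MA, ACTIVE_S, SLEEP_MA, sleep_s)
    days = battery_life_days(CAPACITY_MAH, avg)
    print(f"sleep {sleep_s:6.0f} s: avg {avg:7.3f} mA, life {days:6.2f} days")
```

The model ignores self-discharge, temperature, and the battery's cutoff point, all of which a real estimate would need. It does show why stretching the sleep interval pays off so handsomely: the average current is dominated by the fraction of time spent awake.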

||||

| A Look Back: The In-Circuit Emulator | ||||

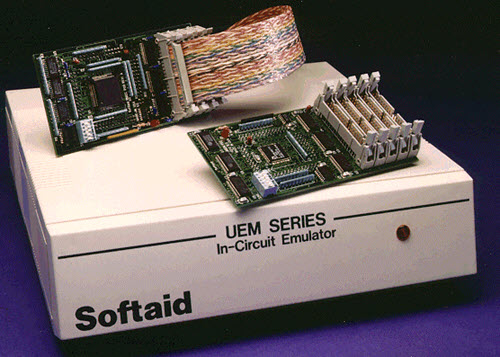

Several readers have written recently asking for some historical background on in-circuit emulators (ICE). These are mostly extinct debugging tools that dominated the embedded industry from the 70s till the end of the 90s. Today these have been replaced by JTAG debuggers (like SEGGER's J-Link and IAR's I-Jet).

The first ICE was Intel's MDS-800, aka "blue box", a physically imposing tool for developing 8080 code. Around 1975 my boss, in an unusual moment of lucidity, let me buy one for a staggering $20k ($100k in today's dollars). It gave us most of the resources emulators provided 25 years later with the exception of source-level debugging. That was not much of a problem as all embedded work was done in assembly language at the time.

Remember ISIS? No, not that one. ISIS was the MDS-800's operating system. The unit needed an OS as it did have mass storage: two 8" floppy disks storing around 80 KB each.

An ICE physically replaced the CPU/MCU in a target system. These tools were boxes that sported a large cable going to a plug; the user pulled the CPU chip from its socket and inserted the ICE plug in its place. A processor in the tool replicated the action of the target's CPU; substantial circuitry allowed the user to do all of the usual debugging activities like setting breakpoints, single-stepping, inspecting registers and memory, etc.

Unlike today's debuggers, ICEs contained RAM that could be mapped into the address space of the target. Flash memory didn't really show up till the end of the emulator era so it was common for firmware to reside in EPROM. It was tedious to change EPROM's contents: one had to place the chips under a UV light for tens of minutes and then "re-burn" the code into the devices. The emulator's RAM logically replaced the EPROM at the same addresses but allowed the developer to quickly download new code or patch changes.

Decent emulators included more sophisticated features like performance analyzers that watched what the code was doing in real time to find bottlenecks, trace logic to capture execution flows, and very sophisticated breakpoints. Many of these features are now available in modern JTAG-style debuggers.

While ICEs for CPUs generally ran user code on a processor identical to the one in the target board, MCUs posed special problems. With their own on-board memory it was often impossible to watch a bus to see what the core was doing. The semiconductor people understood this and made special versions of the chips available to ICE vendors. These "bond-out" parts had hundreds of pins to bring all bus activity out. Made in tiny volumes, they were a special curse to all involved. Expensive, yes. And often the silicon was a few revisions behind that which customers could buy, as there was no profit in keeping these parts up to date. Some were just sloppy. I remember working with a Toshiba bond-out part in a ceramic package where the top of the chips would just fall off! On the other hand, Intel was pretty good at keeping their bond-outs current.

But even CPUs had problems. Cache made bus monitoring impossible, so ICE vendors had to tell customers to leave cache off during development. Prefetchers would fetch instructions that might later be discarded; to make trace data sensible the source debugger had to simulate the CPU's behavior and filter out discarded instructions.

And then there were parts that should never have come to market. Zilog's Z280, for instance, was a nightmare of a chip that turned an ICE into a fabulously-complex Rube Goldberg of electronics (Heath Robinson to our British friends). This was Zilog's last hand-laid-out chip (Xacto-knife cutouts from Rubylith), which was eventually orphaned when they lost the layout!

I have to chuckle when developers today complain about a several-hundred-dollar debugger, for two reasons: we're paid a lot of money, and these tools greatly speed our work. And in the ICE days an emulator could cost tens of thousands of dollars. Plug it in backwards and expensive smoke poured out.

Pricing didn't kill the emulator: technology did. As clock rates rose it became impossible to propagate signals over a cable to a CPU socket. (I found those two college courses on electromagnetics baffling but designing and supporting emulators made the subject very real and very important). Imagine the travails of sending a bus over a 30 cm cable with today's multi-hundred MHz clocks!

An in-circuit emulator for a 186 processor. The little boards plug into a target's CPU socket. All of the bus signals are propagated to the big box over the ribbon cable, a James Clerk Maxwell nightmare as clock speeds soared.

But CPU sockets went away when everyone started soldering CPUs to the board. There were some clumsy workarounds; it was possible to clip onto a PLCC package and tri-state its outputs, but a butterfly flapping its wings in Brazil could make the connection unreliable. BGA processors made any sort of connection impossible.

I was doing a classified project with an oddball microprocessor and designed an emulator just for that, but slowly a light dawned that this could be a product. I re-engineered it for more mainstream 8 bitters and it sold well. With only 17 ICs (in the 80s ICs were pretty simple parts) and horribly-complex code it went for $600. All emulators at this point had just one or a few breakpoints as they used comparators to match a breakpoint address. I used a 64K x 1 RAM that watched the target's address bus. Writing a one into any location caused a breakpoint, so the emulator had 64K breakpoints.

I learned, though, that customer support was a huge issue since every target system was different: impedances and voltage levels varied and some target designs were not particularly robust. We designed a succession of much more powerful products that sold for ten times the price. Support was about the same for a $6000 product as for the $600 version.

One of the fun parts of being an ICE vendor was working with semiconductor vendors as they developed new processors. We'd get early silicon which invariably had a slew of flaws; finding those was challenging and interesting. Sometimes the silicon didn't exist and we'd get a very large board populated with lots of FPGAs programmed to act as the silicon (hopefully!) would.

The emulator business imploded at the beginning of this century, and few of those vendors exist in any form today. Applied Microsystems was the biggest, selling some $40m of these tools a year. They folded. Lauterbach probably made the best ICEs and they still sell some legacy (68k and 186) products. I sold my emulator business in 1997; it prospered for a while but suffered from a new owner who didn't understand the business.

Emulators came of age when transistors were expensive, so processors had no on-board debugging resources. We're very fortunate today that so many include so much debugging capability. The result: many vendors sell really great JTAG-style tools for a song. |
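The 64K x 1 breakpoint RAM mentioned above is easy to model in software. The Python sketch below is only an illustration of the idea, not the original hardware or firmware design: the class and function names are hypothetical, and it assumes a 16-bit address bus. One bit per address replaces a handful of address comparators, so every location can carry its own breakpoint.

```python
class BreakpointRam:
    """Software model of a 64K x 1 RAM that snoops a 16-bit address bus."""

    SIZE = 1 << 16  # one flag bit for each of the 65,536 addresses

    def __init__(self):
        # 8 KB of storage holds 64K single-bit flags, all initially clear.
        self._bits = bytearray(self.SIZE // 8)

    def set_bp(self, addr):
        """Write a one at addr: a breakpoint is now armed there."""
        addr &= 0xFFFF
        self._bits[addr >> 3] |= 1 << (addr & 7)

    def clear_bp(self, addr):
        """Write a zero at addr: the breakpoint is removed."""
        addr &= 0xFFFF
        self._bits[addr >> 3] &= ~(1 << (addr & 7)) & 0xFF

    def hit(self, addr):
        """The RAM's data output for this bus address: True means break."""
        addr &= 0xFFFF
        return bool(self._bits[addr >> 3] & (1 << (addr & 7)))


def run_until_break(bus_addresses, bp_ram):
    """Return the first bus address whose breakpoint bit is set, else None."""
    for addr in bus_addresses:
        if bp_ram.hit(addr):
            return addr
    return None


bp = BreakpointRam()
bp.set_bp(0x1234)
stopped_at = run_until_break([0x1232, 0x1233, 0x1234, 0x1235], bp)
print(hex(stopped_at) if stopped_at is not None else "no breakpoint hit")
```

In hardware the lookup costs nothing extra: the target's address lines drive the RAM's address inputs, and the single data bit that comes out is the break signal.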

||||

| Muse 408 Responses | ||||

Here are a couple of reader replies to the last Muse. Stuart Jobbins responded to Get it Wrong, Pay the Price:

Robbie Tonneberger added his story about becoming an engineer:

|

||||

| Failure of the Week | ||||

Dave Smart sent in this week's failure:

-196 degrees seems to be a theme; I have a lot of these ridiculous failures where that temperature is displayed. Any ideas why? Oh, and the coldest temperature ever recorded in nature on Earth is -129 degrees F, at the Soviet Antarctic research station, so that might be a sensible lower bound when deciding if a temperature is plausible. The lesson is an old one: check your results! |
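A minimal sketch of that "check your results" step, in Python, might look like the following. It assumes the reading is an outdoor air temperature in degrees Fahrenheit. The lower bound is the record low mentioned above; the upper bound is my own assumption (a commonly cited record high), and the function names are hypothetical.

```python
RECORD_LOW_F = -129.0   # record low quoted in the text
RECORD_HIGH_F = 134.0   # assumption: commonly cited record high, not from the text


def is_plausible_outdoor_temp_f(temp_f, margin_f=0.0):
    """True if temp_f lies within the recorded extremes, plus an optional margin.

    A NaN reading fails both comparisons and is therefore rejected too.
    """
    return (RECORD_LOW_F - margin_f) <= temp_f <= (RECORD_HIGH_F + margin_f)


def format_temp_f(temp_f):
    """Return display text, showing dashes rather than an absurd number."""
    if not is_plausible_outdoor_temp_f(temp_f):
        return "--"
    return f"{temp_f:.0f} F"


for reading in (72.4, -196.0, float("nan")):
    print(format_temp_f(reading))
```

Displaying dashes, or the last good value with a warning, is a design choice; the point is that a failed sensor read should never reach the user dressed up as data.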

||||

| This Week's Cool Product | ||||

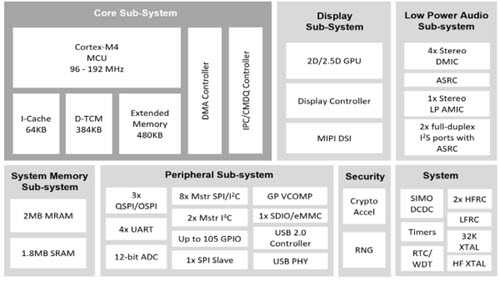

Ambiq has been pushing ultra-low power MCUs for some time. Their new Apollo 4 is claimed to require only 3 µA/MHz, which is about an order of magnitude less than other coulomb-averse micros. I assume this is a "typical" or "best case" number. Alas, the web site is pretty poor and neither datasheets nor pricing seems to be available. However, the part does include an FPU and a wide range of peripherals. Instead of flash it uses MRAM (Magnetoresistive Random-Access Memory). It will be available in a BGA and, intriguingly, a WLCSP package.

Note: This section is about something I personally find cool, interesting or important and want to pass along to readers. It is not influenced by vendors. |

||||

| Jobs! | ||||

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter. Please keep it to 100 words. There is no charge for a job ad. |

||||

| Joke For The Week | ||||

These jokes are archived here. Tim Hoerauf sent this topical joke: It may be confusing to some which holiday we're observing, Halloween or Christmas, because OCT(31) = DEC(25)! |

||||

| About The Embedded Muse | ||||

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster. |