You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email. |

||||

| Editor's Notes | ||||

|

Jack's latest blog: We Shoot for the Moon. |

||||

| Quotes and Thoughts | ||||

If the code isn't important enough to document, throw it away right now. Phil Koopman |

||||

| Tools and Tips | ||||

|

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past. Jacob Beningo wrote about frying his I2C tool, and gives good advice for isolating serial debug instruments. |

||||

| Freebies and Discounts | ||||

Tim Hoerauf won the second of the two Keysight DSOX1204G scopes last month. Enter via this link. |

||||

| Oscilloscope Color Temperature | ||||

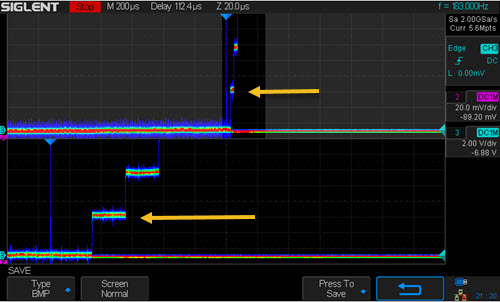

One intriguing feature found on some digital scopes is the so-called "color temperature." (A lousy name, if you ask me, as "color temperature" has a well-defined meaning in physics.) The scope sweeps rapidly, acquiring thousands of sweeps a second. With color temperature enabled, each pixel is colored to indicate the probability of that value. Red indicates the most common value; blue the least. The screenshot here is from a Siglent SDS2304X scope displaying the output of a DAC whose value is incrementing. The top portion (upper arrow) has the scope gain cranked way up; the bottom part is that same value zoomed in. Note that the most common value measured by the scope is red, but due to some system noise, there's yellow and blue above and below the nominal value.
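To make the idea concrete, here is a minimal Python sketch of how hit counts could be turned into colors. It is not how Siglent or any other vendor implements the feature; the function name `color_grade`, the three-color palette, and the linear scaling are all assumptions made for illustration.

```python
from collections import Counter

def color_grade(sweeps, levels=("blue", "yellow", "red")):
    """Color each (time_index, value) pixel by how often the sweeps hit it.

    Hypothetical helper: `sweeps` is a list of sweeps, each a list of
    quantized sample values. Rare pixels get levels[0], common ones levels[-1].
    """
    hits = Counter()
    for sweep in sweeps:
        for t, value in enumerate(sweep):
            hits[(t, value)] += 1

    peak = max(hits.values())
    graded = {}
    for pixel, count in hits.items():
        # Linear scaling of the hit count into a palette index (an assumption).
        idx = min(len(levels) - 1, count * len(levels) // peak)
        graded[pixel] = levels[idx]
    return graded

# A steady DAC level of 5 with a little noise above and below it.
sweeps = [[5]] * 8 + [[6]] * 4 + [[4]]
graded = color_grade(sweeps)
```

In this toy data the nominal value is hit most often and lands in the top color, while the noisy neighbors fall into the lower ones.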

Color temperature could be useful in monitoring noise, and I imagine would really dress up eye diagrams. Yes, we traditionally use intensity to gauge these, but the colors would be a bit more quantitative. I'd like to come up with a trick to use this feature for debugging firmware - but so far, can't. Suggestions? |

||||

| Metamorphic Software Testing | ||||

When testing code we're faced with two problems that can seem intractable. First, who or what is the oracle (the entity that determines if a test result is correct)? Second, how do we generate large quantities of tests? Fuzz testing can create lots of tests, but given that there's no oracle it is mostly useful for seeing if the code crashes, not whether a correct result occurred.

There are some tools that will build vast numbers of unit tests. LDRA's Unit, for instance, can build enormous sets of unit tests by examining the code. I've seen that tool generate 50,000 lines of test code from a 10KLOC code base. In many cases it can derive the oracle, though sometimes it's up to the user to supply the expected answers.

But what about situations like processing visual fields? Is the system properly identifying a pedestrian, no matter what the lighting might be, or if there's a raging snowstorm? Or, suppose you've written code that computes sin(x) to 1000 decimal places. How do you test that?

Enter metamorphic testing (MT), a twenty-year-old idea that eliminates the need for an oracle while building test cases more or less automatically. It's getting a lot of traction in applications like machine learning and vision processing, where data sets can be huge.

The March issue of the Communications of the ACM has an interesting article about using metamorphic testing to test LIDAR data. It cites an example of a vision system in a drone. The code worked, actually quite well. But if the sun was at just the right (wrong?) angle to the visual field the drone did silly things. Researchers fed actual images to the code, and then images corrupted by various random rotations of the sun angle.

MT relies on creating metamorphic relations (MRs). These are characteristics of the system that must hold true, and that can be exercised with many different inputs. For instance, for the sin(x) problem one could test for sin(x) = -sin(π + x), within the precision one is striving for.

In a vision system, feed an image to the vision code, then add snow/noise and feed it again; both runs should pick out the pedestrian. Randomly (and automatically) rotate and manipulate the added distortion and ensure the system still picks out the pedestrian.

Another example of MT: suppose you have a function that computes the standard deviation of many inputs. Randomly shuffle the order of the inputs and check for identical results.

Though I have not read any studies about this, I would think MT would be even more effective with the ample use of assert() macros.

Is MT useful for testing, say, control systems like we find so often in embedded systems? Sometimes. Here's an article about using it for testing a PID controller:

MT alone isn't enough; it checks the relations between test cases, but can't detect absolute errors. If a sine routine returns sin(30°) = 2.5 and sin(180° + 30°) = -2.5 this particular MT test will pass even though the answer is bogus. In the real world, one defines many MRs with the hope that flaws will pop up. Still, in some cases MT can be a valuable adjunct to a test regime. |
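The sine and standard-deviation examples above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the helper names (`check_sine_relations`, `bogus_sine`, `check_stdev_shuffle`), the tolerance, the input range, and the second relation sin²(x) + sin²(x + π/2) = 1 are mine, not taken from any article or tool mentioned here. `bogus_sine` is deliberately five times too large, so it reproduces the sin(30°) = 2.5 case.

```python
import math
import random
import statistics

def check_sine_relations(sine, trials=1000, tol=1e-9, seed=1):
    """Return the names of the metamorphic relations that `sine` violates."""
    rng = random.Random(seed)
    failed = set()
    for _ in range(trials):
        x = rng.uniform(-10.0, 10.0)  # random test input; no oracle needed
        # MR 1 (from the text): sin(x) = -sin(pi + x)
        if abs(sine(x) + sine(math.pi + x)) > tol:
            failed.add("antisymmetry")
        # MR 2 (added for illustration): sin(x)^2 + sin(x + pi/2)^2 = 1
        if abs(sine(x) ** 2 + sine(x + math.pi / 2) ** 2 - 1.0) > tol:
            failed.add("pythagorean")
    return failed

def bogus_sine(x):
    """Deliberately wrong sine: every result is five times too large."""
    return 5.0 * math.sin(x)

def check_stdev_shuffle(stdev, data, trials=100, tol=1e-9, seed=1):
    """MR: reordering the inputs must not change the standard deviation."""
    rng = random.Random(seed)
    expected = stdev(data)
    for _ in range(trials):
        shuffled = data[:]
        rng.shuffle(shuffled)
        if abs(stdev(shuffled) - expected) > tol:
            return False
    return True

good = check_sine_relations(math.sin)
bad = check_sine_relations(bogus_sine)
stdev_ok = check_stdev_shuffle(statistics.stdev, [1.0, 2.5, 3.0, 7.25, 9.0])
```

The bogus routine sails through the first relation and is caught only by the second, which is the argument for defining many MRs. The shuffle check compares within a small tolerance rather than demanding bit-identical results, since floating-point summation order can change the last few bits in some implementations.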

||||

| More on Software Warranties | ||||

The always thoughtful Nick P had some thoughts in response to the articles in Muse 370 and Muse 369:

|

||||

| This Week's Cool Product | ||||

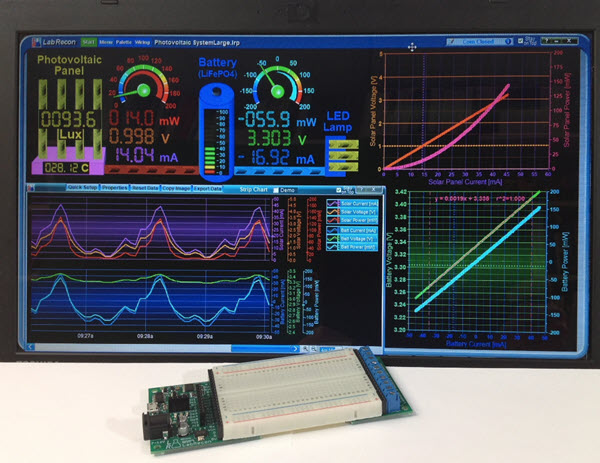

I'm having a little trouble getting my mind around this product as it's so vast, but am fascinated by the possibilities. From the developers:

"LabRecon allows one to build rich graphical interfaces for remote IoT (Internet of Things) or local measurement and control applications. A drag-and-drop panel builder and graphical 'Wiring' programming environment allows one to easily build an interface and create the operating logic for any project. This operating logic can be further expanded using the 'code link' interface to text based languages such as Python, Java, C#, Visual Basic, etc. A USB connected 'Breadboard Experimentor' circuit board or LabRecon chip provides the measurement and control link.

"One powerful feature is the 'Measurement Wizard', wherein one can choose from a built-in database of over 500 commercially available sensors to automatically configure sensor configurations. The wizard will further present circuits with component values for voltage and current measurements.

"The 'Breadboard Experimentor' includes an on-board LabRecon chip, which provides many I/O options including 8 12-bit analog, frequency and digital inputs. Outputs comprise PWM, servo, frequency and stepper motor signals. Pins can also be configured to support 24-bit ADCs, 12 or 16-bit DACs and port expanders.

"LabRecon also comprises a server to allow access of the GUI that one builds by computers or mobile devices. Furthermore, emails and text messages can be sent periodically or upon events. The server also includes a MQTT broker to allow MQTT clients to share data with the software."

If you go to the LabRecon site you'll be blown away by the resources provided. They sent me a board; I hope to play with it soon, and will report my findings.

Note: This section is about something I personally find cool, interesting or important and want to pass along to readers. It is not influenced by vendors. |

||||

| Jobs! | ||||

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter. Please keep it to 100 words. There is no charge for a job ad. |

||||

| Joke For The Week | ||||

Note: These jokes are archived here.

In the last Muse I ran the lyrics to The Gates. In the same spirit here's White Collar Holler by Nigel Russell. The only version I've heard performed was done by Stan Rogers, a cappella, and is a lot of fun.

Well, I rise up every morning at a quarter to eight

And it's Ho, boys, can't you code it, and program it right

Then it's code in the data, give the keyboard a punch

And it's Ho, boys, can't you code it, and program it right

Then it's home again, eat again, watch some TV

And it's Ho, boys, can't you code it, and program it right

Someday I'm gonna give up all the buttons and things |

||||

| About The Embedded Muse | ||||

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster. |