
You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email.


Editor's Notes

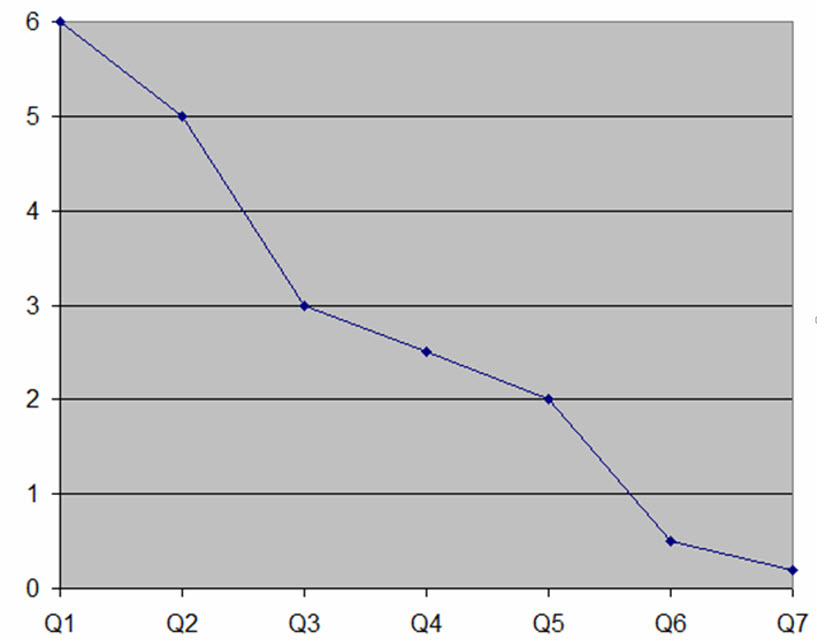

The average firmware team ships about 10 bugs per thousand lines of code (KLOC). That's unacceptable, especially as program sizes skyrocket. We can, and must, do better. This graph shows data from one of my clients who improved their defect rate by an order of magnitude (the vertical axis is bugs/KLOC) over seven quarters using techniques from my Better Firmware Faster seminar. See how you can bring this seminar into your company.

Quotes and Thoughts

"Wisdom is always wont to arrive late, and to be a little approximate on first possession." Attributed to Francis Spufford.

Tools and Tips

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

In Muse 350 I wrote about some who speculate that deeply-embedded engineers are a vanishing breed. Frequent correspondent Charles Manning responded, telling me that one of his Cortex-M systems has to respond to interrupts in 4 µs. It had to be coded in assembly language, with careful cycle counting. You're not going to see Python programmers doing that!

But I got to wondering: what's the fastest interrupt you've had to respond to? Did you have any cool tricks to do so?

In the early 1970s we built a system using an 8008 that had to handle a fast input. I forget how fast, but it strained the CPU's 0.8 MHz capabilities. The solution was, after processing the interrupt, to jump to a HALT instruction just ahead of the ISR. We designed the hardware to jam a NOP instruction on the bus when the interrupt occurred, which resumed execution in the handler. (The 8008 responded to an interrupt with a normal fetch cycle, but it asserted an interrupt-acknowledge signal. Normally an interrupt caused the hardware to jam a one-byte call instruction, but that was too slow for this application.)

Diego Serafin responded to my thoughts on using I2C as a debug port:

Ned Freed, replying to last issue's article about power supplies, pointed out that Murata's OKI-78SR-E series of switching regulators are drop-in replacements for the venerable 78xx linear regulators. They offer efficiencies of 84% or better, though their $7 single-unit price is an order of magnitude higher than that of the older linear parts.

Daniel Wisehart wrote:

Freebies and Discounts

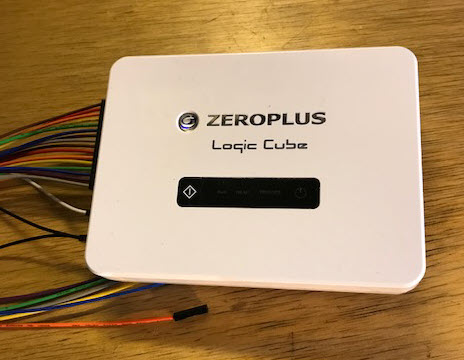

This month we're giving away the Zeroplus Logic Cube logic analyzer that I reviewed here. This is a top-of-the-line model that sells for $2149. Also included is their two-channel digital oscilloscope option, reviewed later in this issue.

Enter via this link.

On MISRA C

The automotive industry is afraid of software. A bug could cost hundreds of millions of dollars or more. Yet software is at the very essence of cars: consumers find that software-enabled goodies represent 65-75% of the value of their vehicles. In an effort to tame the code, many years ago MISRA (the Motor Industry Software Reliability Association) released the MISRA-C coding standard. It has evolved, with the latest version released in 2012. It comprises about 140 rules for the safer use of the C language.

Unlike most software standards, MISRA accompanies each rule with a justification. Some of these discussions are quite long, spanning several pages, with many examples of compliant and non-compliant code.

An example: Directive 4.6 addresses a fundamental weakness of C. The sizes of the base types (int, float, etc.) are not fixed by the language; they depend on the CPU, the compiler, and the wind direction. 4.6 reads: "typedefs that indicate size and signedness should be used in place of the basic numerical types." For C99 the types in <stdint.h> should be used. C90 has no <stdint.h>, so you must write the typedefs yourself; examples include:

typedef unsigned int uint32_t;
typedef float float32_t;

(The first is only correct on a target where int is 32 bits.) MISRA doesn't dictate the names used; one could use uint_32 instead of uint32_t. But the point is that the sign and length of the variable should be part of the type definition. (Some exceptions are noted in the standard.) I don't agree with all of the MISRA rules, but I think this one is spot-on. Think how much easier it would be to port code to a new CPU and compiler whose base types differ from the original's!

Rule 16.5 is: "A default label shall appear as either the first or the last switch label of a switch statement." This is a lot like rule 2.1, in that something that can't happen, well, too often does. Optimism is nice in daily life, but a wise engineer figures that unexpected bad stuff is likely to happen. And with the default in a standardized location, it's easier to find when scanning the code.
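To make Directive 4.6 and Rule 16.5 concrete, here is a minimal C sketch. It assumes a C11 compiler (for _Static_assert) with <stdint.h> available, and the names checksum16, MotorMode and duty_for_mode, along with the 75% duty value, are hypothetical, invented for illustration rather than taken from the MISRA document. It is not a claim of full MISRA compliance.

```c
#include <limits.h>
#include <stdint.h>

/* Directive 4.6: use types whose names state size and signedness.
   Exact-width types have no padding bits, so this compile-time check
   holds on any conforming target. A hand-written C90 typedef such as
   "typedef unsigned int uint32_t;" deserves the same check, because
   it is only right where int is 32 bits. */
_Static_assert(sizeof(uint32_t) * CHAR_BIT == 32, "uint32_t must be 32 bits");

/* Hypothetical helper: 16-bit additive checksum over a byte buffer. */
uint16_t checksum16(const uint8_t *data, uint32_t len)
{
    uint16_t sum = 0U;
    uint32_t i;

    for (i = 0U; i < len; i++) {
        /* The operands are promoted before the add; casting the result
           back to uint16_t wraps it modulo 65536 on any target, whether
           int is 16 or 32 bits. */
        sum = (uint16_t)(sum + data[i]);
    }
    return sum;
}

/* Hypothetical operating modes. */
typedef enum { MOTOR_IDLE = 0, MOTOR_RUN = 1, MOTOR_FAULT = 2 } MotorMode;

/* Map a raw mode byte (which could be corrupted) to a PWM duty cycle
   in percent. Per Rule 16.5 the default label is last, and it handles
   the "can't happen" case by choosing the safe output. */
uint8_t duty_for_mode(uint8_t raw_mode)
{
    uint8_t duty;

    switch (raw_mode) {
    case MOTOR_IDLE:
        duty = 0U;
        break;
    case MOTOR_RUN:
        duty = 75U;
        break;
    case MOTOR_FAULT:
        duty = 0U;
        break;
    default:
        /* Unexpected value: fail safe rather than trust it. */
        duty = 0U;
        break;
    }
    return duty;
}
```

For example, duty_for_mode(MOTOR_RUN) returns 75, while a corrupted byte such as 200 lands in the default branch and returns 0.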
Rule 15.1 suggests avoiding the use of goto. The Barr Group is soliciting input about this keyword for its coding standard. I believe goto should be allowed, though every use must be carefully audited. We do allow setjmp and longjmp, which certainly break the model of structured programming (as does break). Sometimes goto is an elegant way to clean up what would otherwise be a convoluted effort to follow the dictates of structured programming. Gotos are like hand grenades: dangerous, but occasionally useful.

Some of the rules and directives leave me scratching my head. Directive 4.1 says "Run-time failures shall be minimized." What does that mean? How can one measure it? What does "minimize" mean, quantitatively? This rule almost leaves one thinking that it's an advisory against the use of C, which places the burden of dealing with runtime problems entirely in the programmer's lap. The guidelines suggest adding code to deal with specific problems and using static analyzers, both great ideas. But I can't see how one can claim conformance with the directive without making some sort of formal argument, like a safety case. And that's quite an expensive proposition.

I believe MISRA is incomplete, as it doesn't address stylistic issues such as the placement of braces, indentation, and the like. Every organization has its own take on these; sometimes they constitute the catechism of a religious war between developers. Fight wars over design issues, not style. Clarity is our goal; when inconsistent styles are used, one's eyes get tripped up by odd structures. There's also a lot to be said for incorporating the CERT C coding standard.

You can get a PDF of MISRA from here for £15. MISRA is the best-known firmware standard, but few organizations make religious use of it. At just £15 I think all of us should at least read the standard and adopt its best practices.
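As an illustration of goto as a cleanup tool, here is a minimal C sketch of the familiar pattern in which goto jumps only forward, to labels that release resources in reverse order of acquisition, giving the function a single exit point. The function reverse_copy and the STATUS_* codes are hypothetical, and the two heap buffers are contrived purely to create something that needs cleaning up. Note that the sketch deliberately departs from advisory Rule 15.1, which is exactly the trade-off discussed above, and MISRA separately restricts malloc and free.

```c
#include <stddef.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical status codes for this sketch. */
#define STATUS_OK          (0)
#define STATUS_BAD_ARG     (-1)
#define STATUS_NO_MEMORY   (-2)

/* Copy the reverse of src into dst (capacity dst_len bytes).
   Every failure path jumps forward to the cleanup label that frees
   exactly what has been acquired so far. */
int reverse_copy(const char *src, char *dst, size_t dst_len)
{
    int status = STATUS_OK;
    char *work = NULL;
    char *rev = NULL;
    size_t n = 0U;

    if ((src == NULL) || (dst == NULL)) {
        status = STATUS_BAD_ARG;
        goto done;                 /* nothing acquired yet */
    }
    n = strlen(src);
    if ((n + 1U) > dst_len) {
        status = STATUS_BAD_ARG;
        goto done;                 /* result would not fit */
    }

    work = malloc(n + 1U);         /* first resource */
    if (work == NULL) {
        status = STATUS_NO_MEMORY;
        goto done;
    }
    memcpy(work, src, n + 1U);

    rev = malloc(n + 1U);          /* second resource */
    if (rev == NULL) {
        status = STATUS_NO_MEMORY;
        goto free_work;            /* release only the first */
    }
    for (size_t i = 0U; i < n; i++) {
        rev[i] = work[n - 1U - i];
    }
    rev[n] = '\0';
    memcpy(dst, rev, n + 1U);

    free(rev);                     /* release in reverse order */
free_work:
    free(work);
done:
    return status;
}
```

Calling reverse_copy("abc", buf, sizeof buf) with an 8-byte buf leaves "cba" in buf and returns STATUS_OK; a source string too long for the destination returns STATUS_BAD_ARG before anything is allocated. The structured alternative, with nested ifs or repeated free calls on each error path, is exactly the kind of convolution the goto avoids.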

Review: The Perfectionists

I like to keep The Embedded Muse tightly focused on embedded issues, but I recently read a new book that most engineers would enjoy. It's The Perfectionists by Simon Winchester. His other books are excellent, and if you have a Y chromosome, a chapter in his The Professor and the Madman will cause you to grit your teeth in sympathetic agony.

The theme of his latest (2018) book is the human quest for ever more precision in our manufactured goods. It starts with a personal story from his youth, when his machinist dad brought a set of gauge blocks home and baffled the young Simon, who could not pry two of them apart. The surfaces were so flat, so perfect, that they stuck together; but then dad, in a fit of showmanship, easily slid them apart. Later he addresses the seemingly boring subject of such blocks in fascinating detail.

We often conflate precision and accuracy; a prologue nicely refines the difference.

The heading of each chapter lists a tolerance. For chapter 1 the tolerance is 0.1. The engineer in me bristles a bit in that no units are given, but later one learns that, in this case, it's 0.1 inch, the tolerance James Watt achieved in fitting his steam engine's piston to its cylinder. Many histories of steam neglect or downplay the role of John Wilkinson, who had invented a more precise way to bore cannon barrels. Watt's engines leaked steam profusely until he partnered with Wilkinson to construct cylinders where the steam stayed on the proper side of the piston. Winchester shows how those two inventors were synergistic and needed each other.

Each succeeding chapter has a smaller tolerance heading, and the story told therein is about more precise work. Precision is the god of the story. Yet poor tolerances are sometimes the best choice: if one can use a 5% resistor instead of a 1% part, the product will be cheaper.

Gunsmithing was once an art practiced by skilled artisans who made each component fit with exquisite precision.
But parts were not interchangeable between guns, as that precision had little accuracy; it didn't match a standardized norm. Winchester shows how interchangeable parts came about, with the unexpected repercussion that those craftsmen weren't interested in working on an assembly line. There was no skill in pulling parts from a bin and fastening them in place.

Ford had a similar problem. While Rolls-Royce was famed for handcrafting vehicles of great beauty and reliability, it did so at enormous cost, as workers had to file and fit each component. (Their cars never "broke down." They "failed to proceed.") Ford wanted cheap cars for the masses, in quantity, and the only way to achieve that was by making parts of great precision that fit without any handwork. But Ford found he couldn't keep workers who had no patience for repetitive endeavors. While Ford was praised for doubling wages to $5/day, part of the reason was to keep bored employees from walking off the line.

Many phrases we use today came from the quest for precision. "Lock, stock and barrel" was about making those gun parts interchangeable. "Cutting edge", "drill down" and other colorful idioms fell out of ever-improving manufacturing methods.

How many of us remember the BSW screw standard? Joseph Whitworth was one of the first to standardize screw sizes, so one didn't need a custom nut for each fastener. His British Standard Whitworth screw sizes still turn up from time to time. Thankfully, not too often on this side of the Atlantic, as we already must keep inventories of both metric and inch sizes on hand, in both UNC and UNF thread pitches.

Frank Whittle, one of the fathers of the jet engine, had a breathtakingly simple idea: 2000 HP with one moving part made with great precision. But look at the complexity of a modern 787 powerplant! Then there's the Hubble Space Telescope, whose mirror was made to an exquisite level of inaccurate precision.

Winchester's writing is beyond elegant.
An example: "Then came the computer, then the personal computer, then the smartphone, then the preciously unimaginable tools of today - and with this helter-skelter technological evolution came a time of translation, a time when the leading edge of precision passed itself out into the beyond, moving as if through an invisible gateway, from the purely mechanical and physical world and into an immobile and silent universe, one where electrons and protons and neutrons have replaced iron and oil and bearings and lubricants and trunnions and the paradigm-altering ideas of interchangeable parts, and where, though the components might well glow with fierce lights or send out intense waves of heat, nothing moved one piece against the other in mechanical fashion, no machine required that measured exactness be an essential attribute of every component piece."

Yes, in some places the book is almost Melville-like in its wordsmithing (though most of his sentences are shorter than this example). And, yes, in this section he writes about the mind-numbing precision of our field, building ICs, where parts are merely a handful of atomic diameters across, and photolithography using 193 nm light can, astonishingly, yield 10 nm features. What the IC industry has wrought simply beggars the imagination.

The final chapter pays homage to imprecision: handcrafted products and the arts. I found that a bit strained. The book is about engineering manufactured objects, so I wish it had more illustrations of those devices. The pictures tend more towards portraits; I'd rather see the artifacts than the people. But you know what they say about us: an extroverted engineer looks at your shoes when he's talking to you, not his.

Amazon Prime and One-Click shopping mean boxes of books arrive here constantly. Some disappoint; others are wonderful. The Perfectionists is one of the best books I've read this year.

Review of Zeroplus's DSO Add-on

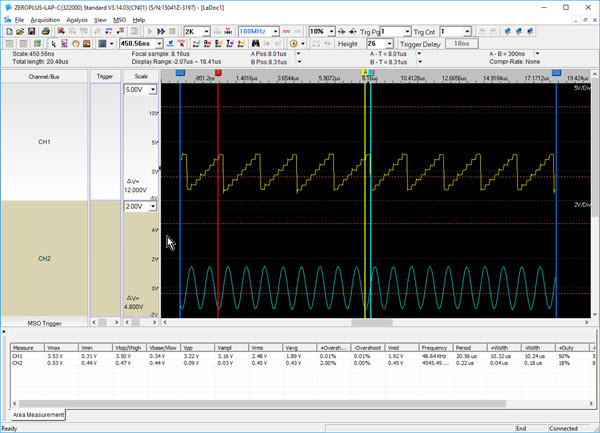

In the last issue I reviewed Zeroplus's USB-connected logic analyzer (LA). It's a very nice unit. Since then, they sent me an add-on: a digital oscilloscope. To my knowledge this is a nearly unique device. The scope takes two analog inputs, digitizes them, and feeds the data to the logic analyzer, which displays the result using the same IDE that controls the LA. It's an unusual and interesting approach.

I couldn't resist the impulse to take this apart. Inside are a pair of Analog Devices AD9283-100 eight-bit, 100 Msps ADCs. Interestingly, there's also an SN74HC4020 14-bit counter on the board. I thought it might divide down the 100 MHz oscillator for lower sample rates, but the counter's maximum clock frequency is only 22 MHz. I didn't have time to scope around and tease out the puzzle.

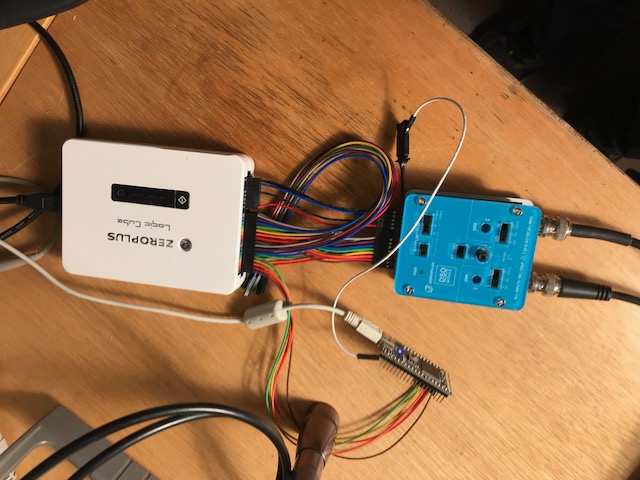

Sample rates up to 100 Msps are supported, and the unit has a rated analog bandwidth of 20 MHz. Record lengths of 8k, 16k and 32k samples per channel can be selected. The unit's input impedance is 1 MΩ/25 pF. The probe's impedance specification is available only in Chinese. Using the 25 pF figure, though, the input presents a capacitive reactance of 318 ohms at 20 MHz, which could significantly load a circuit. A 74AUC08 AND gate, for instance, can't come close to driving that load. This is an oft-misunderstood issue with all probes. Tektronix sells their nice TPP1000 passive probe, which has only 3.9 pF of tip capacitance (2k ohms at 20 MHz)... but it's $1020. Feeling flush? Keysight's N2803A scope probe, with an astonishing 32 fF of tip capacitance, is a blue-light special at only $29,041.

The two supplied probes each have a switch to select X1 or X10. Using a signal generator and bench scope, I found that on the X10 setting their -3 dB point was 26 MHz.

Most USB scopes are controlled entirely from the PC-hosted UI. This DSO is different: though the UI displays the analog signals, switches on the unit set the operating modes. The sample rate is set via two slide switches. A pot controls the trigger level. The ground reference is set by two pots, which are recessed, so you need a small screwdriver to change them. And each channel can be set to 1, 2 or 5 volts/division (or a tenth of that with the probe set to X1). The slide switches are recessed a bit too much, and take a decent fingernail to change. The DSO includes a square-wave output for compensating the probes.

Together, the DSO and LA form a mixed-signal scope. That is, the user interface (reviewed in Muse 351) shows both analog and digital waveforms at the same time. As the following picture shows, a cable connects the DSO to the Logic Cube LA. Two cables are provided. One supports a single DSO channel, leaving 23 LA channels available on the more expensive 32-channel LAs, or 7 digital channels on 16-channel models. The other supports two analog and 16 digital channels (no digital channels on the 16-channel models).
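The probe-loading figures in this review follow from the capacitive reactance formula, Xc = 1/(2πfC). The C sketch below reproduces that arithmetic and adds the common rise-time rule of thumb, tr ≈ 0.35/BW, to relate the bandwidth numbers to edge speeds. Both are simplifications assumed for illustration: the probe is modeled as a pure capacitance (no tip resistance or inductance), and the 0.35 factor assumes a roughly single-pole response. The function names are hypothetical.

```c
/* Magnitude of a capacitor's reactance, in ohms:
   Xc = 1 / (2 * pi * f * C). Treats the probe tip as an ideal
   capacitance, so results are first-order estimates only. */
double cap_reactance_ohms(double freq_hz, double cap_farads)
{
    const double pi = 3.14159265358979323846;

    return 1.0 / (2.0 * pi * freq_hz * cap_farads);
}

/* Approximate 10%-90% rise time, in seconds, of a system with a
   roughly single-pole response and the given -3 dB bandwidth. */
double rise_time_s(double bandwidth_hz)
{
    return 0.35 / bandwidth_hz;
}
```

At 20 MHz, cap_reactance_ohms(20e6, 25e-12) comes to about 318 ohms and cap_reactance_ohms(20e6, 3.9e-12) to about 2.04k ohms, matching the figures quoted; the Keysight probe's 32 fF gives roughly 249k ohms. By the same rule of thumb, a 20 MHz front end has a rise time of about 17.5 ns, and the 26 MHz probe roughly 13.5 ns.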

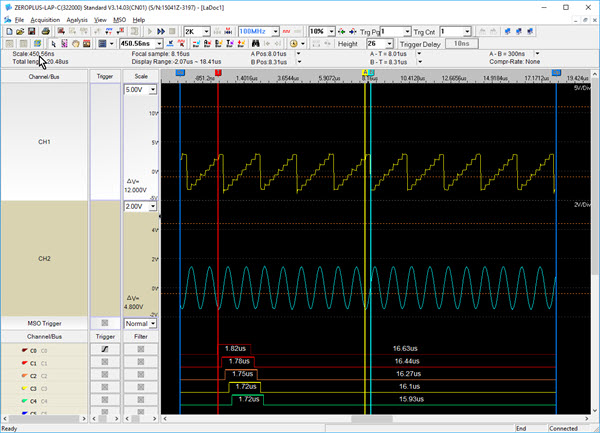

DSO connected to the LA. The little board on the bottom is a Cortex-M3 eval board that is outputting digital signals to the LA.

Triggering is set via the small knob on the DSO. I found I had to turn it slowly, as no trigger level is displayed on the UI, and it was easy to over- or undershoot the sweet spot. A pulldown menu in the user interface enables the DSO. In the following picture I've enabled two analog channels. You can see the displayed analog and digital signals. Triggering can be on either analog or digital signals.

The UI.

The unit keeps a lot of statistics about the analog channels. When enabled, they overlay the digital signals, but the vertical scroll bar brings the digital traces back into view.

The statistics are in the lower window.

Though the unit is rated at 20 MHz bandwidth, I found good performance to 10 MHz, with increasing distortion of the signal as the frequency went up. Above 15 MHz a sine wave is pretty distorted. And triggering on a sine wave gets a bit squirrelly above about 4 MHz: the signal jumps back and forth on the screen a bit with each acquisition.

All in all, this is a reasonable USB scope if you already have the LA. At $130 it's a better deal than most other USB scopes, as many won't go much above 5 MHz. Few will act as both LA and DSO, an important consideration when working on deeply embedded systems with analog and digital signals racing around. Pros will prefer a scope with better performance: it's hard to imagine doing much serious embedded work with less than 100 MHz of bandwidth. But the Logic Cube is an excellent logic analyzer, and if you have one and don't have a scope, this is an inexpensive way to gather analog signals.

Zeroplus tells me they have a deal going now: if you buy a second LAP-C logic analyzer, and tell them what you're using it for, they'll send a free DSO module.

Jobs!

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter. Please keep it to 100 words. There is no charge for a job ad.

Joke For The Week

Note: These jokes are archived at www.ganssle.com/jokes.htm.

ENGINEER IDENTIFICATION TEST

You walk into a room and notice that a picture is hanging crooked. You...

A. Straighten it.
B. Ignore it.
C. Buy a CAD system and spend the next six months designing a solar-powered, self-adjusting picture frame while often stating aloud your belief that the inventor of the nail was a total moron.

An engineer will answer "C" but partial credit can be given to anybody who writes "It depends" in the margin of the test or simply blames the whole stupid thing on "Marketing."

Advertise With Us

Advertise in The Embedded Muse! Over 27,000 embedded developers get this twice-monthly publication.

About The Embedded Muse

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster.