
You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com.


Editor's Notes

IBM data shows that as projects grow in size, individual programmer productivity goes down. By a lot. This is the worst possible outcome: big projects have more code, done less efficiently. Fortunately there are ways to cheat this discouraging fact, which is part of what my one-day Better Firmware Faster seminar is all about: giving your team the tools they need to operate at a measurably world-class level, producing code with far fewer bugs in less time. It's fast-paced, fun, and uniquely covers the issues faced by embedded developers. Information here shows how your team can benefit by having this seminar presented at your facility.

I'm now on Twitter. Follow @jack_ganssle.


Quotes and Thoughts

"A quality culture does not arise by chance ... Culture will not be changed by remote instructions or by documents. It responds only to leadership and example. Managers will need to develop an understanding of what culture is and what affects it, and to attend to their own behaviour as well as to what they say, so as to lead cultural improvement." Felix Redmill, sent in by Peter House


Tools and Tips

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

I've been using Zamzar (where do these names come from?) lately. It's a free on-line file conversion service that converts between various video, audio, image, eBook and other formats. You can give it a file or the URL of an on-line file. They claim to support more than 1,200 file types.


Freebies and Discounts

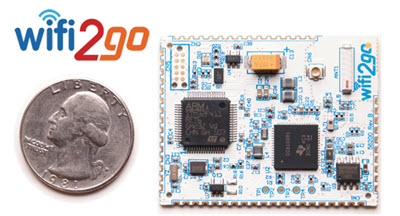

Thanks to the generosity of ImageCraft, this month we're giving away one of their Wifi2go starter kits. This includes the board shown, an ST-LINK/V2 and a set of development tools. Enter via this link.


More on Generating Unique Error Codes

Alex Ribero had a comment about last issue's idea for generating unique error codes:


Goesintos Woes

It can be hard to get software right. But it's easy to employ best practices we've known for decades. When I started programming almost half a century ago, we were admonished to "check your goesintos and goesoutas." That is, don't assume data passed between functions is correct. More fundamentally, always assume that data from a source outside your application is suspect. We all know this. Do we practice it? The number of exploits predicated on hosing a program with bogus data suggests that too few engineers routinely scrutinize incoming data. Chris Lee sent this email from Toyota:

I'm an enormous fan of design-by-contract (DbC), in which one salts functions with checks for preconditions (incoming data), postconditions (returned data) and invariants (things that shouldn't change). The Eiffel language supports DbC, as does Ada since its 2012 revision. DbC is somewhat like assert() on steroids, though contracts can respond more gracefully than assert(), which prints an error message and aborts. But with or without DbC, in today's hostile world we simply have to check incoming data. Not doing so amounts to professional malpractice.
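C has no built-in contracts, but a minimal sketch of the idea is easy to build. It assumes hypothetical REQUIRE() and ENSURE() macros (not from any particular library) that report the violated condition and return an error code rather than aborting the way assert() does. The example function is also hypothetical: it converts a raw 12-bit ADC reading, treated as suspect because it comes from outside the code, into millivolts against an assumed 3.3 V reference. Invariant checks are omitted for brevity.

```c
#include <stdint.h>
#include <stdio.h>

/* Assumed 12-bit ADC and 3300 mV reference; adjust for real hardware. */
#define ADC_MAX_COUNTS 4095u
#define VREF_MV        3300u

typedef enum {
    CONTRACT_OK   = 0,
    CONTRACT_PRE  = 1,   /* a precondition (goesinto) was violated */
    CONTRACT_POST = 2    /* a postcondition (goesouta) was violated */
} contract_status;

/* Hypothetical handler: count and report the violation. A shipping
   system might log to flash or drive outputs to a safe state instead. */
static unsigned contract_violations = 0u;

static void contract_failed(const char *kind, const char *expr,
                            const char *file, int line)
{
    contract_violations++;
    fprintf(stderr, "%s violated: %s (%s:%d)\n", kind, expr, file, line);
}

/* Hypothetical contract macros: on failure, report and return an error
   code from the enclosing function (which must return contract_status). */
#define REQUIRE(cond)                                                   \
    do {                                                                \
        if (!(cond)) {                                                  \
            contract_failed("precondition", #cond, __FILE__, __LINE__); \
            return CONTRACT_PRE;                                        \
        }                                                               \
    } while (0)

#define ENSURE(cond)                                                     \
    do {                                                                 \
        if (!(cond)) {                                                   \
            contract_failed("postcondition", #cond, __FILE__, __LINE__); \
            return CONTRACT_POST;                                        \
        }                                                                \
    } while (0)

/* Convert a raw ADC reading, which came from outside this code and is
   therefore suspect, to millivolts. */
contract_status adc_to_millivolts(uint16_t raw, uint32_t *mv_out)
{
    REQUIRE(mv_out != NULL);
    REQUIRE(raw <= ADC_MAX_COUNTS);      /* reject impossible readings */

    uint32_t mv = ((uint32_t)raw * VREF_MV) / ADC_MAX_COUNTS;

    ENSURE(mv <= VREF_MV);               /* result must be in range */
    *mv_out = mv;
    return CONTRACT_OK;
}
```

Returning an error code instead of halting lets the caller pick the recovery, such as discarding the sample, retrying, or entering a safe state, which is one way contracts can behave more reasonably than a bare assert().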


Software Malpractice?

I just used the word "malpractice," and I feel that we engineers need to think about it. According to Wikipedia's article on the subject, 'In the law of torts, malpractice is an "instance of negligence or incompetence on the part of a professional".' I believe engineering is a professional activity, so "malpractice" is probably a useful concept for us.

Noted software researcher Capers Jones wrote: "The definition of professional malpractice is something that causes harm which a trained practitioner should know is harmful and therefore avoid using it." He even assigns metrics to activities that constitute malpractice. One example is removing fewer than 85% of the bugs before shipping. (This strikes me as a little slippery: if one wrote a program so good that it had only a single bug, and that bug shipped, then 0% of the bugs were removed. Yet that might be some of the best code ever written.)

I suspect a doctor or other professional standing trial for malpractice generally mounts a defense predicated on at least a little hand-waving. No doubt the issues are cloudy. But if you accept Jones' argument, we engineers could be held accountable to a quantitative standard.

Taking Jones' definition apart, "harm" doesn't necessarily mean physical injury. His data comes from working as an expert witness in contract litigation, where a product wasn't delivered or was defective. The harm in those cases is presumably economic. In the embedded world a lot of our products can cause injury. Or perhaps a security flaw leaks private data. There are a lot of "harms" that firmware can inflict.

"Malpractice" shrieks "litigation," but I think the term is useful from another standpoint. When a developer uses an approach "which a trained practitioner should know is harmful," doesn't this imply an ethical lapse? Licensed Professional Engineers are held to a very high standard, and we should hold ourselves to the same one.
If you know doing X is generally a bad idea, what does it say about us when we do X anyway? I used the phrase "ethical lapse" because I think that, as professionals, when we do our jobs poorly, when we employ practices we know are substandard, we're not living up to our own principles. Noted theologian and ethicist Stanley Hauerwas thinks that what we do says something about who we are. That's true of how we treat people, as well as how we go about our daily work.

Jones lists a number of activities which he says constitute malpractice. I disagree with some of them, and would add others. Others no doubt would come up with very different lists. If one of the most respected computer scientists, Edsger Dijkstra, wrote "Go To Statement Considered Harmful," and malpractice encompasses approaches "which a trained practitioner should know is harmful," does that mean any use of goto is wrong? I'd say no. Undisciplined use of it surely is.

Malpractice may indeed be too strong a word. But, especially as software becomes ever more complex, and ever more able to inflict harm, we have an ethical responsibility to employ best practices. Playing on Hauerwas' thinking, using substandard (or worse) engineering approaches says something unflattering about us. Real life is cruel; we're subject to the whims of bosses and customers. But I do think we have a serious responsibility to be knowledgeable about generally-accepted best practices, and to make every effort to employ them.
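Jones' 85% figure is usually discussed as defect removal efficiency (DRE). Here is a minimal C sketch, assuming DRE is computed as defects removed before release divided by that count plus defects reported after release; the field-reporting window is left to the caller, and the function names are hypothetical. It also reproduces the single-bug corner case described earlier.

```c
#include <stdbool.h>
#include <stdint.h>

/* Jones' malpractice threshold as cited in the text: remove at least
   85% of defects before shipping. */
#define DRE_THRESHOLD_PCT 85.0

/* Defect removal efficiency in percent. Assumed definition: defects
   removed before release divided by all known defects (those plus
   defects reported after release, over a field window the team picks). */
static double defect_removal_efficiency(uint32_t removed_before_release,
                                        uint32_t found_after_release)
{
    uint64_t total = (uint64_t)removed_before_release + found_after_release;
    if (total == 0u) {
        return 100.0;  /* assumption: no known defects counts as 100% */
    }
    return 100.0 * (double)removed_before_release / (double)total;
}

static bool meets_dre_threshold(uint32_t removed_before_release,
                                uint32_t found_after_release)
{
    return defect_removal_efficiency(removed_before_release,
                                     found_after_release) >= DRE_THRESHOLD_PCT;
}
```

With no bugs removed and one shipped, the metric reports 0% and fails the threshold, even though the product may be excellent. A bare percentage says nothing about how few defects there were to begin with.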


Jobs!

Let me know if you’re hiring embedded engineers. No recruiters, please, and I reserve the right to edit ads to fit the format and intent of this newsletter. Please keep it to 100 words. There is no charge for a job ad.


Joke For The Week

Note: These jokes are archived at www.ganssle.com/jokes.htm.

Schrödinger's cat walks into a bar... and doesn't.

The tachyon orders a beer. A tachyon walks into a bar.

Heisenberg is driving with Schrödinger in the car when a policeman pulls them over for speeding. "Do you know how fast you were going, sir?" the cop asks. "No," replies Heisenberg, "but I can tell you exactly where I am!" "All right, wiseguy, pop the trunk!" Heisenberg complies. "Do you know you have a dead cat in your trunk?" the cop asks. To which Schrödinger replies, "I do now!"


Advertise With Us

Advertise in The Embedded Muse! Over 25,000 embedded developers get this twice-monthly publication.


About The Embedded Muse

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster.