|

||||

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email. |

||||

| Editor's Notes | ||||

|

I'm told Embedded World, held this past February in Nürnberg, was a Coronavirused shell of its former glory. The 2021 affair will be held in March. Hundreds of exhibitors have already signed up. And the Embedded Systems Conference in Silicon Valley is on in January. I admire the optimism of the promoters and exhibitors, but wonder if the conference world will be reshaped by the pandemic. In many disciplines the future is reasonably clear: history curricula change slowly, and in English literature grammar is nearly immutable. Electronics is different; it has always been hard to guess what we'll see a couple of years hence, and we engineers have grown comfortable with this uncertainty. But today even the near future, a month or three out, seems awfully murky. I hope things improve enough to encourage travel and that these important conferences are big successes. What are your thoughts about the future of in-person conferences? Will you be comfortable attending these, and will your company be willing to pay for the travel? Jack's latest blog: The GP-8E, my first computer |

||||

| Quotes and Thoughts | ||||

All programmers are optimists. Fred Brooks |

||||

| Tools and Tips | ||||

|

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past. Like many hobbyists, as a teenager I learned to design circuits. College augmented those practical lessons with theoretical rigor. But only when employed as an EE did I learn how to design circuits that worked and were reliable. One mentor helped me with that as he critiqued each design decision, sometimes to an infuriating degree. I don't see much in writing about this, but Jason Sachs' recent article on managing tolerance is very worthwhile. |

||||

| Freebies and Discounts | ||||

Enter via this link. |

||||

| Know Your Numbers | ||||

Do you know how many defects will be injected into your next product during development? Or how many of those your team will fix before releasing it to the customer? If not… why not?

Capers Jones has written: "All software managers and quality assurance personnel should be familiar with these measurements because they have the largest impact on software quality, cost, and schedule of any known measures." Jones is one of the world's authorities on software engineering and software metrics. He often makes it clear that teams that don't track defect metrics are working inefficiently at best. He suggests we track "defect potentials" and "defect removal efficiency."

His article "Software Benchmarking" in the October 1995 issue of IEEE Computer defines the first term: "The defect potential of a software application is the total quantity of errors found in requirements, design, source code, user manuals, and bad fixes or secondary defects inserted as an accidental byproduct of repairing other defects." In other words, defect potential is the total of all mistakes injected into the product during development. Jones says the defect potential typically ranges between two and ten per function point, and for most organizations is about the number of function points raised to the 1.25 power.

Function points are only rarely used in the embedded industry; we think in terms of lines of code (LOC). Though the LOC metric is hardly ideal, we do our analysis with the metrics we have. Referring once again to Jones' work, his November 1995 article "Backfiring: Converting Lines of Code to Function Points" in IEEE Computer claims one function point equals, on average, about 128 lines of C.

"Defect removal efficiency" tells us what percentage of those flaws will be removed prior to shipping. The US average is an appalling 85%. But in private correspondence Jones provided me with figures that suggest embedded projects are by far the best of the lot, with an average defect removal efficiency of 95%, if truly huge (1m function points) projects are excluded.

Jones claims only 5% of software organizations know their numbers. Yet in the many hundreds of embedded companies I've worked with, only two or three track these metrics. Since defects are a huge cause of late projects it seems reasonable to track them. And companies that don't track defects can never know if they are best in class… or the very worst, which, in a glass-half-full way, suggests lots of opportunities to improve. What's your take? What metrics does your company track? |
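To make the arithmetic concrete, here is a rough Python sketch that chains the figures quoted above: about 128 lines of C per function point, a defect potential of roughly function points raised to the 1.25 power, and a defect removal efficiency of 85% or 95%. The function names and the 128,000-line example project are made up for illustration, and the inputs are Jones' broad averages, so treat the results as order-of-magnitude estimates rather than predictions.

```python
# Rule-of-thumb defect estimates from the Capers Jones averages quoted above.
# All names here are illustrative; only the constants come from the text.

LOC_PER_FUNCTION_POINT_C = 128     # average "backfiring" ratio for C
DEFECT_POTENTIAL_EXPONENT = 1.25   # defect potential ~ function_points ** 1.25


def function_points(lines_of_c):
    """Approximate function points from a count of C source lines."""
    return lines_of_c / LOC_PER_FUNCTION_POINT_C


def defect_potential(fp):
    """Approximate total defects injected during development."""
    return fp ** DEFECT_POTENTIAL_EXPONENT


def shipped_defects(potential, removal_efficiency):
    """Defects left in the product after removing the given fraction."""
    return potential * (1.0 - removal_efficiency)


if __name__ == "__main__":
    fp = function_points(128_000)          # hypothetical 128 KLOC project
    potential = defect_potential(fp)
    print(f"function points:  {fp:.0f}")
    print(f"defect potential: {potential:.0f}")
    for dre in (0.85, 0.95):
        print(f"shipped at {dre:.0%} removal: {shipped_defects(potential, dre):.0f}")
```

For the hypothetical 128,000-line project this works out to 1,000 function points and a defect potential of about 5,600. At 85% removal efficiency roughly 840 defects ship; at 95% that drops to about 280.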

||||

| Zone Triggering | ||||

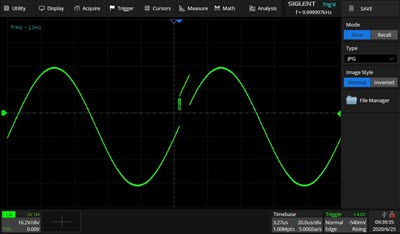

A common complaint heard from tools vendors is that customers don't know how to use some of the important features. From a user perspective, you've got dozens of instruments, each accompanied by a 500-page manual - who has the time to read all of that material? But some of those overlooked features can save a lot of time. Today some oscilloscopes have a very cool feature called "zone triggering" that can occasionally be a lifesaver. You define a region of the screen that will start an acquisition if a signal strays into it. For instance, here's a 10 kHz sine wave with a 6 µs pulse that's added to it once a second:

Normally this signal would be almost impossible to capture as it occurs so infrequently, and happens with a voltage level that's within the normal sine wave. Here I've defined a little square zone trigger visible as a small box on the leading edge of the pulse. Anything that wanders into that box triggers the scope. Nothing else does. The scope I used is a Siglent SDS5034X, though I know Tektronix and others support this feature on some of their instruments. The box is created using iPhone-like pinch-and-squeeze fingers on the touchscreen, though it's possible to set this up parametrically. This scope supports a pair of zone triggers; each can cause a trigger if a signal wanders into the zone, or if it is found outside that region. Or, the two can be combined with an AND operation for more nuanced acquisitions. A common use is to find bus contention, where conflicting signals drive the voltage level to some value between a zero and a one. Another is locating a short noise burst on an analog waveform. |
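For readers who think in code, the idea can be modeled in a few lines of Python. This is only a simplified software sketch, not how the Siglent or any other scope implements the feature. The `Zone` class, its field names, the 0.5 V pulse height and the 50 µs pulse position are all assumptions chosen for illustration. Each sweep is a list of (time, voltage) points, a zone is a rectangle in those coordinates, and multiple zones are ANDed together.

```python
import math
from dataclasses import dataclass


@dataclass
class Zone:
    """A rectangular region of the screen (hypothetical model)."""
    t_min: float                  # seconds from the start of the sweep
    t_max: float
    v_min: float                  # volts
    v_max: float
    trigger_inside: bool = True   # False: trigger only if the trace stays out

    def satisfied(self, trace):
        entered = any(
            self.t_min <= t <= self.t_max and self.v_min <= v <= self.v_max
            for t, v in trace
        )
        return entered if self.trigger_inside else not entered


def zone_trigger(trace, zones):
    """AND the zones together: every zone's condition must hold."""
    return all(zone.satisfied(trace) for zone in zones)


def sine_sweep(with_pulse, freq_hz=10e3, sample_s=0.1e-6, span_s=100e-6):
    """One sweep of a 1 V sine, optionally with a 6 us, 0.5 V pulse at 50 us."""
    trace = []
    for i in range(int(round(span_s / sample_s))):
        t = i * sample_s
        v = math.sin(2 * math.pi * freq_hz * t)
        if with_pulse and 50e-6 <= t < 56e-6:
            v += 0.5              # assumed pulse amplitude
        trace.append((t, v))
    return trace


# A small box on the leading edge of the pulse.
box = Zone(t_min=50.5e-6, t_max=52e-6, v_min=0.3, v_max=0.6)

print(zone_trigger(sine_sweep(with_pulse=True), [box]))    # pulse enters the box
print(zone_trigger(sine_sweep(with_pulse=False), [box]))   # clean sweep stays out
```

The pulse never exceeds the sine wave's own 1 V peak, so a plain level trigger could not single it out. The box works because it combines a voltage window with a position in time where the clean sine is never found.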

||||

| Software Element Out of Context | ||||

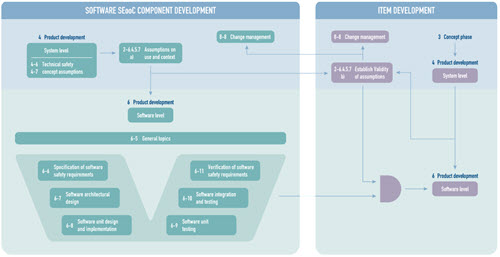

The notion of a "Software Element Out of Context" is gaining in importance in safety-critical code, and I've been getting an increasing amount of email from readers wanting to learn more. I asked Dave Hughes of HCC Embedded if he could provide some background, which he graciously did, as follows:

All of computing history has been about creating building blocks and constantly improving and reconstructing them. This is equally true at both a software and hardware level; think about how you put together a PC or mobile phone, or how you build the software in them. It is a continuous process of improvement and in most cases the building blocks are built without an exact knowledge of the end-product. They just have a general use-case, of which simple examples might be a microcontroller in hardware or an RTOS in software. A great description of components like this is "elements out of context" - they were designed to a specification and the integrator must ensure that these elements do what is required. You can think of standard software libraries in the same way: as something designed without the complete context of its final usage being defined.

When we get to safety things change. Building a product that has safety considerations is completely different to building standard products. A complete assessment of the risks associated with the device must be done and development methods used that fully mitigate those risks. However, safety products need to use proven technology, be it a microcontroller in an air bag or a piece of networking software for relaying sensor information. For this reason, we need a methodology for ensuring that the appropriate level of risk mitigation can be done for these elements.

In the automotive industry the ISO 26262 standard developed the concept of the Safety Element out of Context (SEooC) – taking general purpose components and integrating them with the development of a road vehicle. The standard covers the whole process of how you validate the element and gives several options for how you achieve this. The two main methods are to use proven-in-use arguments, which are really only suitable for hardware components (software does not wear out), or to provide a full set of ISO 26262 development artefacts for the element, ready to support its integration.

The key to the whole process is the two sets of assumptions that are made. Firstly, there are the assumptions that the target system makes of the SEooC, and secondly the assumptions the SEooC makes of any system into which it is integrated. If these are a perfect match, then you can integrate the element and validate it. Normally there will be gaps, particularly if it is software (since any change requires retesting). If changes are required, then you go through a tailoring process. Normally it is the SEooC that needs tailoring, and if it has been developed within a safety development process with full traceability then this can be achieved relatively efficiently.

Although this is an automotive standard, I see it as a methodology that should be mappable to any other industry, particularly for software, where the process is more comprehensive and portable than in any other standard I have seen. Although software safety standards are heavily verticalized (ISO 26262 for automotive, IEC 62304 for medical devices, DO-178C for aerospace, to name a few of very many), safety software in all industries has the same basic requirements in terms of specification, design, implementation, test and, most importantly, traceability. It is traceability that gives you the ability to modify with confidence when there are slightly different assumptions made by the target system.

For a more detailed description of how SEooCs can be used for software please try our white paper that can be downloaded from: www.hcc-embedded.com/seooc |
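The two-way matching of assumptions described above can be pictured as a pair of set differences. The Python sketch below is purely illustrative and is not taken from ISO 26262 or from HCC's process. It assumes each side's assumptions can be written as simple labels, and the function name and the example labels are invented. Anything the system expects that the element does not offer, or vice versa, is a gap that would feed the tailoring process.

```python
def integration_gaps(system_expects, element_offers,
                     element_expects, system_offers):
    """Return the unmatched assumptions in each direction (hypothetical model).

    Each argument is a set of assumption labels. An empty result in both
    directions corresponds to the "perfect match" case.
    """
    return {
        "unmet_by_element": sorted(system_expects - element_offers),
        "unmet_by_system": sorted(element_expects - system_offers),
    }


# Invented example: a networking component integrated into a target system.
gaps = integration_gaps(
    system_expects={"CRC on every frame", "bounded worst-case latency"},
    element_offers={"CRC on every frame"},
    element_expects={"static memory allocation", "periodic tick"},
    system_offers={"static memory allocation", "periodic tick"},
)

for direction, items in gaps.items():
    print(direction, items)
```

In this made-up case the system's side is satisfied but the element lacks one property the system expects, which matches the observation that it is normally the SEooC that needs tailoring.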

||||

| Failure of the Week | ||||

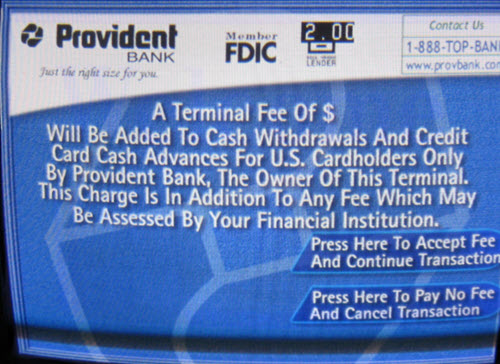

An ATM in Baltimore:

|

||||

| Jobs! | ||||

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter. Please keep it to 100 words. There is no charge for a job ad. |

||||

| Joke For The Week | ||||

These jokes are archived here. The Definition of an Upgrade: Take old bugs out, put new ones in. |

||||

| About The Embedded Muse | ||||

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster. |