|

||||

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email. |

||||

| Contents | ||||

| Editor's Notes | ||||

|

Over 400 companies and more than 7000 engineers have benefited from my Better Firmware Faster seminar, held on-site at their companies. Want to crank up your productivity and decrease shipped bugs? Spend a day with me learning how to debug your development processes. Attendees have blogged about the seminar, for example, here, and here.

A couple of recent articles bring up some interesting ethical issues of tech in cars. Bob Paddock sent a link to this article where a dealer sold a second-hand Tesla to a new owner... and Tesla disabled the "full self-driving mode" feature via an over-the-air update because the new owner didn't "pay" for the feature, even though the original owner did.

Then there's Bryon Moyer's article, where he describes a technology that allows automakers to verify that repairs use only OEM parts. Potentially, replacing something with an unauthorized widget could brick the car. |

||||

| Quotes and Thoughts | ||||

Ways to detect or prevent software errors should be designed in from the beginning. If written first, error-handling routines will get the most exercise. Many (and perhaps most) errors in operational software lie in the error-handling routines themselves. Nancy Leveson |

||||

| Tools and Tips | ||||

|

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past. William Grauer sent this:

Yigal Ben-Zeev likes VSC:

|

||||

| Freebies and Discounts | ||||

This month's giveaway is a FRDM-KL25Z eval board based on the Cortex-M0+ microcontroller. The board is supported by mbed.org, which means you can do development without an IDE; the web site has an on-line compiler. The board appears as a disk drive on your PC. Compile the code with mbed's on-line tool, copy the resulting file to the board, and the code runs. I wouldn't build a real product with mbed, but it's great for playing around and building experimental code.

Enter via this link. |

||||

| On Management, Redux | ||||

In the last issue I took management to task for often not understanding the realities behind engineering and engineers. Now I'd like to discuss some truths about management that many engineers don't grok.

Dilbert consistently depicts managers as clueless morons untethered to the real world. I've run into some of those. Yet most are decent folks who are trying to do a good job, caught between the CEO's needs and quite different realities on the ground. Unfortunately, mid-level bosses are often promoted from technical work without being given any sort of management training.

In engineering it works, or it doesn't. If it doesn't, it's usually possible to figure out why. Management is much different. If you perturb an electronic system five times the same way, you'll generally get five identical responses. Yet if you do the same to a person, you'll get five different reactions. The science we prize just doesn't work with people. Challenging? You bet. In many ways great management is more difficult than engineering.

When things are working well, the boss is fending off a blizzard of requests for changes and features from his or her boss, sales, marketing, and more. Or at least filtering these to a reasonable few. Those people have very real needs, too, but those are often divorced from the company's requirements. How often have you heard a salesperson say "I can't sell this piece of crap without X, Y and Z"? Sometimes this is really "I can't sell." It's easy to demand goodies, but hard to figure out real needs. Your boss is caught between the team and these shrill calls.

Then there are arbitrary and capricious schedules. "It's gotta be done by March 1!" How annoying! What reality does this stem from? To us these mandates are insane, driven by no technical basis. Those in charge must be morons!

In the engineering group I ran in the 70s, schedules always came down from on high. This was a chronically undercapitalized company, and I'll never forget an effort to go public. One auditor, shaking his head in dismay, told me "I've seen every financial trick this outfit has used. But never all in one company." Years later the president told me that for 69 consecutive weeks there wasn't enough money on Thursday night to meet Friday's payroll. Can you imagine the stress? Product simply had to get out the door.

Once I gave my boss a schedule and he said we needed to shave it by a third. I protested; he replied that if we missed his deadline I'd have to let three of my people go. What would you do? I shamefacedly agreed. I don't remember what happened, other than there was no layoff.

Money is the grease of business. Without it the company goes under. My point is that management is often under pressure we don't see. I believe that in many companies there is, and always will be, an essential disconnect between management and engineering. The boss is being squeezed by forces that have nothing to do with our work. Investors want shareholder equity. The bank wants profits, today. Even if, in an ideal world, we could deliver a perfect product with a million lines of code in a month, the competition would soon follow. Management would then want to trim that to two weeks.

What's the answer to this disconnect? I have no idea, but one element is regular open and frank discussions. And mutual respect between all participants. Management is hard, and we need to recognize those trials just as the boss has to acknowledge our real issues. |

||||

| Working Overtime | ||||

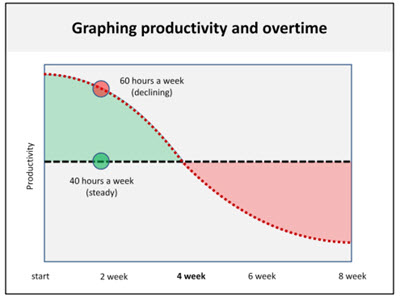

Though we all have a gut sense that working too many hours is counterproductive, a short paper by John Nevison called Overtime Hours: The Rule of Fifty cites data from four studies conducted over a half century to show how productivity drops as overtime increases.

The studies' results vary quite a bit. One shows that at 50 hours/week workers do about 37 hours of work, dropping to just over 30 once the workweek increases to 55. The "best" results, if you can call them that, came from a 1997 survey that showed wielding the whip can yield increasing productivity, edging up to about 52 useful hours for a 70-hour week. But they all show a nearly impenetrable barrier of around 50 productive hours or less, regardless of time shackled to the desk.

Unsurprisingly, the data shows a sharp drop in results for those working excessive OT for weeks on end, averaging around a 20% drop after 12 weeks of servitude. That means, as the author concludes, the Rule of Fifty is a best-case estimate.

The 2005 Circadian Technologies Shiftware Practices survey showed that productivity can decrease by as much as 25% for a 60-hour workweek, which jibes pretty well with Nevison's data. Circadian's results also demonstrate that turnover is nearly three times higher among workers putting in a lot of OT, and absenteeism is twice the national average. I'm not sure what that result means, since it's awfully hard for an absent worker to be putting in overtime.

Fred Brooks claims that the average software engineer devotes about 55% of his week to project work. The rest goes to overhead activities, responding to HR, meetings about the health insurance plan, and supporting other activities.

The German government claims that the nominal workweek in Germany is 37.5 hours because "The original reason for introducing this system was to combat rush-hour traffic congestion, but among the more direct gains are an improvement in employee morale, greater productivity, significant decreases in absenteeism, greater flexibility for women who juggle the demands of work, home and children, and the increased sense of individual dignity that the employees get from having a greater say in organizing their own time." The last phrase may be true but seems awfully hard to measure. However, their conclusions about morale, absenteeism and productivity parallel the survey results quoted above.

One of eXtreme Programming's practices is to never work two weeks of overtime in a row. This graph, from "Laws of Productivity," shows that short sprints can be effective. After a few weeks the benefits disappear:

Here are some more data:

What's your take? When does overtime become counterproductive? |

||||

| Cortex M4F Trig Performance v. Custom Algorithm | ||||

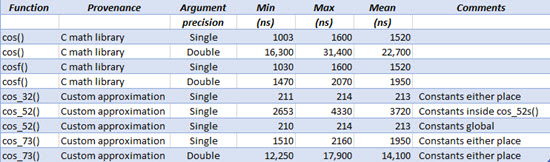

But how does the Cortex M4F's performance compare to writing custom versions of the cos() function? (The sin() function will be almost the same as cos() because sine is just a 90-degree shift of cosine.)

The M4F has a speedy single-precision floating point unit (FPU), but that FPU has no ability to compute trig functions, so the compiler's math library uses some sort of approximation. There are many approximations that compute trig functions. I chose three from the book Computer Approximations, by John Hart. These have different accuracies; if you can stand less accuracy the trig function will be computed faster. More information about these approximations is here. The approximation code is here.

My experimental setup is:

Here are the results:

A few observations:

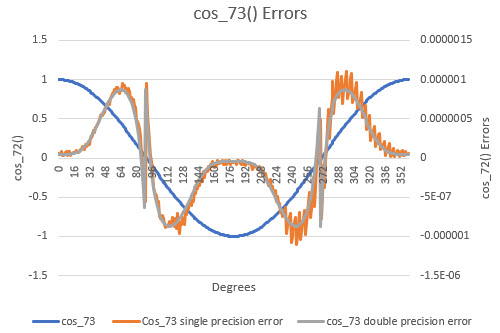

An alternative to cos_32() is a lookup table, given a few hundred entries in the table. That would scream. Only a handful of instructions would be needed, and at these clock rates the CPU is executing instructions in a matter of a few tens of ns or less (depending on wait states, etc.).

To ensure cos_73()'s accuracy wouldn't be degraded by the resolution of a float, I coded it using doubles for all constants and calculations. Changing all of those to floats gave almost an order of magnitude improvement in speed. But how much accuracy was lost? Running that algorithm in Visual Studio against the double-precision cos() from the math library gives this:

"Cos_73 single precision error" is the error when all of the variables and constants in the algorithm are floats. "Cos_73 double precision error" reflects errors when everything is declared double. Other than some quantization noise there's not a lot of difference, which means most of the inaccuracy stems from the algorithm itself. The book I referenced, "Computer Approximations", has many higher-precision options, some of which are implemented in C in the code mentioned earlier. These errors are a combination of the errors implicit in the approximation algorithm, along with the effect of using floats rather than doubles. The worst error was 0.0003%, with an average error of 5.8E-05%. |

||||

| This Week's Cool Product | ||||

EMA Design Automation has a new free guide titled "The Hitchhiker's Guide to PCB Design." It's a 100-page document with a lot of good advice on designing printed-circuit boards. If you're a Hitchhiker's fan, well, there are a lot of fun references to Adams' wonderful series.

Note: This section is about something I personally find cool, interesting or important and want to pass along to readers. It is not influenced by vendors. |

||||

| Jobs! | ||||

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter. Please keep it to 100 words. There is no charge for a job ad. | ||||

| Joke For The Week | ||||

These jokes are archived here.

If it ain't broke, fix it till it is broken. |

||||

| About The Embedded Muse | ||||

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster. |