|

|||||||||||||||

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. To subscribe or unsubscribe go here or drop Jack an email. |

|||||||||||||||

| Contents | |||||||||||||||

| Editor's Notes | |||||||||||||||

|

As the year winds its way to a close, I wish everyone the best of the holidays, great family time, and a Happy New Year. Latest blog: R vs D - the truth (especially about scheduling). |

|||||||||||||||

| Quotes and Thoughts | |||||||||||||||

The most important single aspect of software development is to be clear about what you are trying to build. - Bjarne Stroustrup |

|||||||||||||||

| Tools and Tips | |||||||||||||||

|

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

Embedded Software Development for Safety-Critical Systems by Chris Hobbs is a book only a standards zealot could enjoy. I did. One interesting point: the author notes that some systems have a natural "down" time; for instance, a plane lands, a car is turned off, and bedside medical devices might not be on all of the time. So why not periodically reboot these devices when they are idle? A prophylactic reboot will free leaked memory and perhaps cure a number of lurking problems. This idea mirrors a design pattern some developers employ when they periodically reprogram peripheral setup registers in case those were corrupted by an ESD event.

He doesn't say much about potential perils, but what about peripherals? Do the GPIO lines float to some undesired state for a time during reboot? Are comm glitches produced? Are you sure the system can recover from a reboot?

The idea of taking action to prevent a future problem is appealing. But I'd go further and, instead of a restart, let the watchdog timer time out. Then the CPU will get hit with a hard reset, which could cure some hardware problems as well (e.g., a flipped bit due to a cosmic ray hit). But, again, one must guard against the problems a reboot could create. There's more on this subject from a Muse reader here.

On another topic, an article in IEEE Spectrum paints a grim picture of the semiconductor market's future, especially in the West. I was struck by this quote: "I hate IoT," said Hirsch. "I think IoT sucks. You have these stupid tiny chips, that cost a buck or so, going into a smart building [and they] can last 30 years. One of the things [that drives] the semiconductor industry is turnover. Why do I want to sell a chip that lasts 30 years and costs practically nothing? What I want is to sell a disposable chip that goes in the garbage almost as soon as I sell it."

Wow. I figure these "stupid tiny chips, that cost a buck or so," that today offer a remarkable amount of performance, are instead the harbinger of an exciting future!

Kim Pedersen sent a link to a nice website that converts C gibberish to English. It's fun to try. |
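Returning to the prophylactic reboot discussed earlier in this section, here is a minimal sketch of the "let the watchdog time out" variant. It is an illustration under stated assumptions, not code from the book or from any vendor library: `wdt_kick()`, `outputs_to_safe_state()`, `watchdog_service()` and the once-a-day threshold are all hypothetical names and values, and the two hooks are host-side stand-ins you would replace with your MCU's watchdog refresh and whatever parks your GPIO and comm links.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical policy: force a reset once uptime exceeds one day. */
#define REBOOT_AFTER_S (24u * 60u * 60u)

/* Host-side stand-ins so the sketch compiles anywhere. The counters only
 * exist to make the behavior observable. */
static unsigned kicks;
static unsigned safe_calls;

static void wdt_kick(void)              { kicks++; }      /* replace with the MCU's watchdog refresh */
static void outputs_to_safe_state(void) { safe_calls++; } /* park GPIO, quiesce comms, save state */

/* Call once per pass through the main loop.
 * Returns true if the watchdog was kicked, false if it is being starved
 * on purpose so that the hardware watchdog resets the CPU. */
bool watchdog_service(uint32_t uptime_s, bool system_idle)
{
    if (system_idle && uptime_s >= REBOOT_AFTER_S) {
        /* Address the perils first: put outputs somewhere harmless before
         * the reset hits, then simply stop kicking. */
        outputs_to_safe_state();
        return false;
    }
    wdt_kick();
    return true;
}
```

The design choice is that the reset is never requested directly; the code just withholds the kick when the system is both idle and "old enough," so the same hardware path that handles a crash also handles the planned reboot.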

|||||||||||||||

| Freebies and Discounts | |||||||||||||||

This month's giveaway is a battered and beaten "historical artifact." It's a Philco oscilloscope from 1946. The manual, including schematic, is here. I picked it up on eBay a few years ago, and while it's kind of cool, I have no real use for the thing. It powers up and displays a distorted waveform, usually, but is pretty much good for nothing other than as a desk ornament. I wrote about this here. (The thing is so old I'd be afraid to leave it plugged in while unattended.)

Enter via this link. |

|||||||||||||||

| Are Watchdogs Necessary? | |||||||||||||||

Are watchdog timers crutches for lousy developers? In a number of recent emails some readers claim that great embedded products don't need a watchdog. Correspondents reason that watchdogs are the last line of defense against software crashes, so write great code and your system will be crash-proof.

If only.

Software is unique in that it's probably the only human endeavor - and certainly the only engineering field - where it's at least theoretically possible to achieve perfection. Software is unmarred by the gritty realities of poor castings, cyclic loadings and counterfeit parts that mechanical engineers must deal with. It doesn't suffer from EE nightmares like lightning strikes and poor solder joints.

But software isn't something that comes down from on high. It's designed and built by imperfect humans, who craft their code from often-misinterpreted and vague requirements, and interface the software to other complex systems whose behavior is usually poorly specified. Complexity grows exponentially; Robert Glass figures for every 25% increase in the problem's difficulty the code doubles in size. A many-million line program can assume a number of states whose size no human can grasp. Perfection, given these challenges, will be elusive at best.

And how can one prove their code is perfect? (The SPARK people can, using formal methods.)

The review board that studied the software-induced $500 million Ariane 5 failure had a number of conclusions. One was that the organization had a culture that assumed software cannot fail. A half century of experience has taught us quite the opposite.

Software doesn't run in isolation. It's merely a component of a system. Watchdogs are not "software safeties." They're system safeties, designed to bring the product back to life in the event of any transient event that corrupts operation, like cosmic rays.

Xilinx, Intel, Altera and many others have studied these high energy particles and have concluded that our systems are subject to random single event upsets (SEUs) due to these intruders from outer space. Currently cosmic ray SEUs are thought to be relatively rare. One processor datasheet suggests we can expect a single error per thousand years per chip. That sounds pretty safe till you multiply that rate by the millions, or hundreds of millions, of processors shipped per year. If these parts drove airplanes (at high altitudes where cosmic rays are more intense) without mitigation, aluminum would be raining out of the sky.

Systems and software operate in a hostile world peppered with threats and imperfections that few engineers can completely anticipate or defend against. A watchdog timer, which requires insignificant resources, is cheap and effective insurance. It's the fuse EEs have routinely employed for a hundred years, and it's one that automatically resets.

I've written a report about using watchdogs here. What do you think? Do your products use a watchdog? |
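For a sense of scale on the SEU figure quoted above, here is the arithmetic as a tiny C sketch. The function name and the fleet size of 100 million are illustrative assumptions; the one-upset-per-thousand-years-per-chip rate is the datasheet number cited in the text, and the calculation counts only a single year's shipments.

```c
#include <assert.h>

/* Expected upsets per year across a whole fleet, given how many chips are
 * in the field and the mean number of years between upsets for one chip.
 * Hypothetical helper for illustration only. */
double fleet_upsets_per_year(double chips, double years_between_upsets_per_chip)
{
    assert(years_between_upsets_per_chip > 0.0);
    return chips / years_between_upsets_per_chip;
}
```

With 100 million chips in the field, `fleet_upsets_per_year(100e6, 1000.0)` works out to 100,000 upsets a year, or roughly 274 a day somewhere in the fleet.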

|||||||||||||||

| Auditing Firmware Teams | |||||||||||||||

From time to time companies have me come in to examine their firmware engineering practices. That involves poking around, interviewing team members, reviewing documents, and conferring with managers. In case you want to audit your own team, here are my most common findings, in no particular order:

Adapted from The Economics of Software Quality, Capers Jones

Here's another take on this. It's Joel Spolsky's software team quality test:

|

|||||||||||||||

| No printf()? No Problem | |||||||||||||||

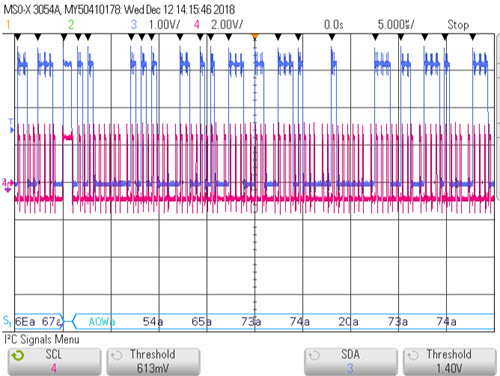

One of the challenges we face in debugging many embedded systems is that there's often no functioning printf(). But given a couple of unused GPIO bits and a protocol analyzer, it's easy to do a poor-person's printf(). "Protocol analyzer" includes many modern digital scopes, though sometimes this is an optional feature. I wouldn't buy a scope without it, as today's world is all about serial comm.

I chose to use the GPIO bits to simulate I2C as it's a very simple interface, is not timing dependent (RS-232's timing is critical and hard to get right in bit-banging code), and is supported by most protocol analyzers. The trick is to bit-bang the GPIO to simulate I2C's SCL (clock) and SDA (data) signals. The bit-banging code is here - feel free to use or abuse it in any way. Since I2C is a synchronous protocol, timing isn't important as long as SDA and SCL are sequenced appropriately.

Connect a scope channel to the SDA signal and another to SCL. You'll have to tell the scope which channel is gathering which signal. In the following picture SCL goes to channel 4 and SDA to channel 3:

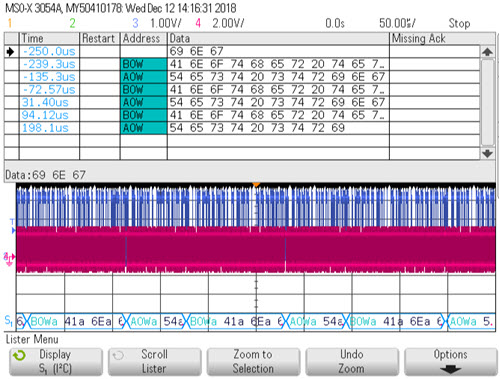

First, note the red and blue waveforms, which are those two signals. They're pretty indecipherable to mere mortals. But across the bottom the protocol analyzer parses them into hex characters. The "a" after each number is a Keysight convention that indicates the I2C ack is low. Siglent uses the same convention. Or, enable the "lister" function, which creates a table of acquired data:

Some protocol analyzers will decode the hex into ASCII, but none of those in the scopes I have will do so. Saleae's logic analyzers support I2C and let you set the radix to ASCII. Of course, you can trigger the scope from any signal to sync the decoding to some event. Or, the scope can trigger on a specific pattern sent to the I2C bus. The bottom line is that you can call the I2C code to send any string to the protocol analyzer.

I'm so glad so many of our conventional debugging tools (JTAG, etc.) are so powerful. But sometimes you need a different angle to get data from a system. Some approaches are a bit crazy; back around 1972 we used a shortwave radio to debug real-time minicomputer code. Tuned to the right frequency, the radio's tone told us which loops were executing! I suspect the FCC would not be too pleased about this today. |
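For readers who want to see the shape of the technique before looking at the linked code, here is a minimal sketch of bit-banging a string out as I2C writes. It is not the code linked above. Everything named here is an assumption made for illustration: `set_scl()`, `set_sda()`, `gpio_print()`, the `trace` buffer and the 7-bit address 0x50 are hypothetical. The pin functions are host-side stand-ins that log each pin change so the sequence can be checked on a PC; on a target you would replace them with writes to your port registers. Since nothing is listening on the bus, the sketch drives SDA low itself on the ninth clock so the analyzer sees an ACK.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Host-side stand-ins for two spare GPIO pins. Every pin change is logged
 * as (SCL << 1) | SDA so the generated sequence can be inspected. */
#define TRACE_MAX 4096
static uint8_t trace[TRACE_MAX];
static size_t trace_len;
static uint8_t scl = 1, sda = 1;   /* an idle I2C bus has both lines high */

static void record(void)
{
    if (trace_len < TRACE_MAX)
        trace[trace_len++] = (uint8_t)((scl << 1) | sda);
}
static void set_scl(int level) { scl = level ? 1 : 0; record(); }
static void set_sda(int level) { sda = level ? 1 : 0; record(); }

/* START condition: SDA falls while SCL is high. */
static void i2c_start(void)
{
    set_sda(1); set_scl(1); set_sda(0); set_scl(0);
}

/* STOP condition: SDA rises while SCL is high. */
static void i2c_stop(void)
{
    set_sda(0); set_scl(1); set_sda(1);
}

/* Shift out one byte, MSB first. SDA changes only while SCL is low. */
static void i2c_write_byte(uint8_t byte)
{
    for (int bit = 7; bit >= 0; bit--) {
        set_sda((byte >> bit) & 1);
        set_scl(1);
        set_scl(0);
    }
    /* Ninth clock: no slave is present, so fake the ACK by driving SDA low. */
    set_sda(0); set_scl(1); set_scl(0);
}

#define DEBUG_I2C_ADDR 0x50u       /* hypothetical 7-bit address */

/* Send a string as one I2C write transaction for the analyzer to decode. */
void gpio_print(const char *s)
{
    assert(s != NULL);
    i2c_start();
    i2c_write_byte((uint8_t)(DEBUG_I2C_ADDR << 1));   /* address + write bit (0) */
    while (*s != '\0')
        i2c_write_byte((uint8_t)*s++);
    i2c_stop();
}
```

A call such as `gpio_print("boot ok")` then shows up on the analyzer as an address byte followed by one decoded byte per character. The sketch has no delays; on a fast part you may need to pad the clock edges so they stay within the analyzer's sample rate.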

|||||||||||||||

| This Week's Cool Product | |||||||||||||||

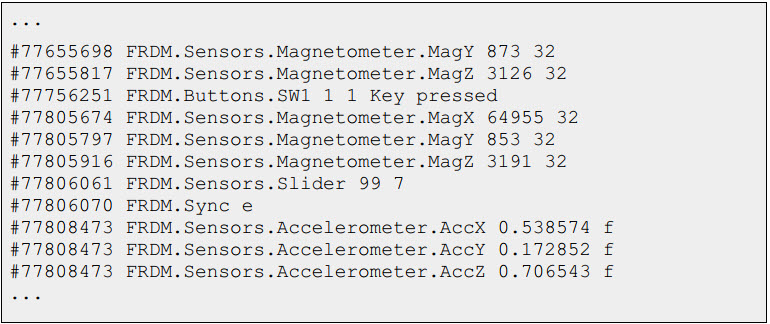

vcdMaker is a free/open-source tool that translates random log files into .vcd format (commonly used by Verilog folks in their simulations). It's easiest to explain in terms of a sample application using the Freescale FRDM evaluation board, which is loaded with sensors. A small program reads the sensors and outputs their values in no particular order to a log file. For instance:

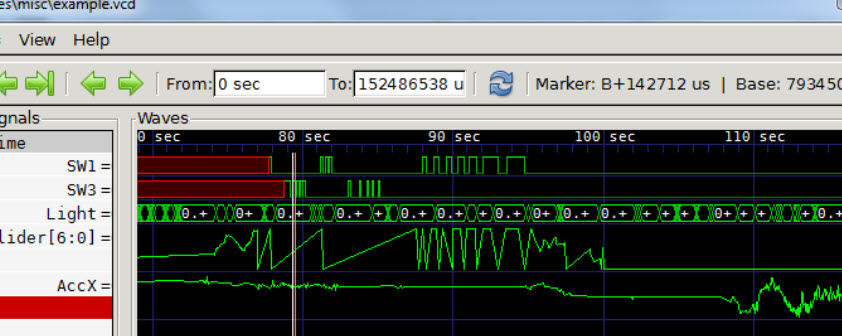

(This could be a huge log of thousands of collected datapoints.) You supply vcdMaker with an XML file that tells it how to parse the log. The XML specifies field names, value formats (float, decimal, binary, etc.) and more. vcdMaker uses the XML information to create a .vcd file. Then it's easy to use a .vcd viewer to display the converted log file in a useful way. For instance:

Note that the log, which is populated with randomly-interspersed sensor readings, is now more usefully shown with each sensor's data ordered in a logical fashion. (.vcd viewers can be expensive, but there are open-source versions, such as GTKWave, which produced the graph above.)

Note: This section is about something I personally find cool, interesting or important and want to pass along to readers. It is not influenced by vendors. |
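To make the target format concrete, here is a small sketch of what turning interleaved log records into a .vcd file involves. It does not use vcdMaker or its XML format, which are not reproduced here; it only illustrates the general idea with a hand-written converter. The `struct sample` layout, the sensor names `accel_x` and `mag_x`, and the one-character identifier codes are hypothetical, and the records are assumed to arrive in time order.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* One already-parsed log record (hypothetical layout). */
struct sample {
    unsigned long t_us;      /* timestamp in microseconds */
    const char *sensor;      /* sensor name as it appears in the log */
    double value;
};

/* Hypothetical signal table: sensor name -> VCD identifier code. */
static const struct { const char *name; char code; } signals[] = {
    { "accel_x", 'a' },
    { "mag_x",   'm' },
};
#define NSIG (sizeof signals / sizeof signals[0])

/* Write a minimal VCD file. Returns the number of value changes written;
 * records naming an unknown sensor are skipped. */
size_t write_vcd(FILE *out, const struct sample *s, size_t n)
{
    assert(out != NULL && s != NULL);

    /* Header: timescale, one scope, one real-valued variable per sensor. */
    fputs("$timescale 1 us $end\n$scope module log $end\n", out);
    for (size_t k = 0; k < NSIG; k++)
        fprintf(out, "$var real 64 %c %s $end\n", signals[k].code, signals[k].name);
    fputs("$upscope $end\n$enddefinitions $end\n", out);

    size_t written = 0;
    int have_time = 0;
    unsigned long last_t = 0;
    for (size_t i = 0; i < n; i++) {
        for (size_t k = 0; k < NSIG; k++) {
            if (strcmp(s[i].sensor, signals[k].name) != 0)
                continue;
            /* Emit a "#time" line only when the timestamp changes. */
            if (!have_time || s[i].t_us != last_t) {
                fprintf(out, "#%lu\n", s[i].t_us);
                last_t = s[i].t_us;
                have_time = 1;
            }
            /* Real value change: 'r', the value, a space, the id code. */
            fprintf(out, "r%.16g %c\n", s[i].value, signals[k].code);
            written++;
            break;
        }
    }
    return written;
}
```

The point of the format is visible in the output: the interleaved records are regrouped under per-timestamp markers and tagged by signal, which is what lets a viewer draw one trace per sensor.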

|||||||||||||||

| Jobs! | |||||||||||||||

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter. Please keep it to 100 words. There is no charge for a job ad. |

|||||||||||||||

| Joke For The Week | |||||||||||||||

Note: These jokes are archived here. You might be an engineer if...

|

|||||||||||||||

| Advertise With Us | |||||||||||||||

Advertise in The Embedded Muse! Over 28,000 embedded developers get this twice-monthly publication. |

|||||||||||||||

| About The Embedded Muse | |||||||||||||||

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster. |