|

|

||||||||||

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. |

||||||||||

| Contents | ||||||||||

| Editor's Notes | ||||||||||

|

After over 40 years in this field I've learned that "shortcuts make for long delays" (an aphorism attributed to J.R.R. Tolkien). The data is stark: doing software right means fewer bugs and earlier deliveries. Adopt best practices and your code will be better and cheaper. This is the entire thesis of the quality movement, which revolutionized manufacturing but has somehow largely missed software engineering. Studies have even shown that safety-critical code need be no more expensive than the usual stuff if the right processes are followed.

This is what my one-day Better Firmware Faster seminar is all about: giving your team the tools they need to operate at a measurably world-class level, producing code with far fewer bugs in less time. It's fast-paced, fun, and uniquely covers the issues faced by embedded developers. Information here shows how your team can benefit by having this seminar presented at your facility.

Embedded Systems Conference San Jose - I'll be there December 6 and 7, giving a few talks, checking out the expo hall, and hopefully meeting Muse readers. More info here.

Discounted Better Firmware Faster on-site seminars in Europe: I'll be at the Embedded World show in Nuremberg February 27 to March 1. Since I will already be there, saving the cost and time required to travel, I will be offering on-site versions of this class in Europe for a reduced price shortly before and after the show. If interested, drop me an email.

I hope readers in the USA have a great Thanksgiving weekend. As one gets older, family and tradition become more important. We spend Thanksgiving at my brother's house in Virginia with a big gathering of siblings, sons, daughters, parents, nieces and nephews, with the occasional boy/girlfriend and acquaintance or three tossed in. Part of the tradition is to play Alice's Restaurant, Arlo Guthrie's wonderful song about events that occurred on a Thanksgiving over 50 years ago, on the long drive there. |

||||||||||

| Quotes and Thoughts | ||||||||||

"The vast majority of accidents in which software was involved can be traced to requirements flaws and, more specifically, to incompleteness in the specified and implemented software behavior - that is, incomplete or wrong assumptions about the operation of the controlled system or required operation of the computer and unhandled controlled-system states and environmental conditions. Although coding errors often get the most attention, they have more of an effect on reliability and other qualities than on safety." Nancy Leveson. |

||||||||||

| Tools and Tips | ||||||||||

|

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

Many readers sent this link, which explores 21 different microcontrollers, pondering the strengths and weaknesses of each. Some vendors will not be pleased...

In the last issue I ran a chart listing the size of programs vs. the number of pages of requirements. Scott Nowell, of Validated Software (they provide certification packages to show various products comply with standards like DO-178C) sent me the mapping between size and requirements for Micrium's uC/OS-II. This is interesting since that RTOS can be used in systems that must be certified for avionics use (under the stringent DO-178 umbrella), so is representative of very carefully designed code.

The RTOS has 4125 lines of code. The high-level requirements occupy 45 pages; low-level requirements an additional 392. If one were to print out the code at 50 lines/page, the listing would run to about 83 pages, a stack of paper roughly a fifth the size of the 437 pages of requirements.

To clarify "high-level" and "low-level," DO-178C defines:

How do your products compare? The highest level of DO-178C is extremely demanding, and not appropriate for what most of us build. But it does represent a level of quality we should aspire to. |

||||||||||

| Freebies and Discounts | ||||||||||

Win a nifty ee701 differential preamp for a scope! Thanks to ee-quipment for donating the unit. A review is here. One lucky Muse reader will win this at the end of November, 2017.

Enter via this link. |

||||||||||

| Minimizing Optimism | ||||||||||

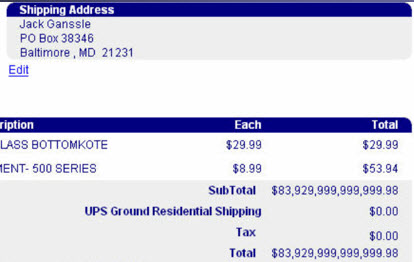

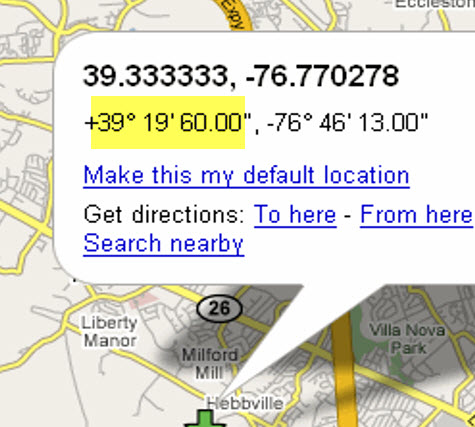

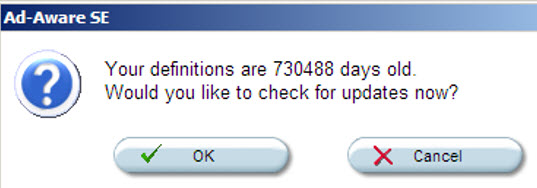

In Muse 317 I ranted a bit about software people who are too optimistic - they expect things to work, and all too often don't check corner conditions and the like. I recently came across a 2012 report by NASA's Inspector General about why too many of their missions fail, run late, or are over budget. The report claims there are four factors that contribute to these problems. The first is managers who are overly optimistic, who assume things will be just dandy. Alas, bitter experience proves otherwise.

It's the same in writing software. Expect everything to go wrong. Consider what sorts of errors may occur and take some sort of thoughtful remediation action, or at least fail gracefully.

I was ordering parts for my sailboat. A quart of paint, a few filters for the engine. This popped up:

It didn't seem right to me. But at least they didn't charge tax. This is optimistic programming (at best)... or professional malpractice.

When I learned to program we were repeatedly told to "check your goesintas and goesoutas." But half a century later we often ignore that bit of wisdom. Ironically, those distant days were the era of scarce resources, like memory and CPU cycles. (When I went to college there was one computer, a $10 million Univac 1108 mainframe. It had just one million words of memory.) These resources are generally much more plentiful today. The cost to add sanity checks is low.

Is the above example an aberration, a one-off, never-to-be-repeated mistake? Nope. I've got tons of examples. Here are a few:

More:

A Nasdaq above 16,000,000? I wish I had shorted the market. More:

The highest temperature recorded in nature on Earth is 134° F (56.7° C) at Greenland Ranch, Death Valley, California. A wise developer might use that fact to come up with a reasonable bound for possible values. More:

119° with snow on the ground? More:

The lowest temperature ever recorded in nature on Earth is -129° F (-89.2° C), at the Soviet Vostok Station in Antarctica. That might be a reasonable lower bound. More:

More:

Really? Since that's 2001 years ago I'm surprised to find the message in English instead of Aramaic.
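The record temperatures cited above suggest one way to bound a reading before displaying it. Here is a minimal sketch in C. The function and macro names are made up for illustration, and treating the world records as hard limits is an assumption; a real product would pick bounds to suit its own sensor and location.

```c
#include <stdbool.h>

/* Plausibility limits in degrees Fahrenheit, taken from the record
 * extremes cited in the text. Using them as hard limits is an
 * assumption made for this sketch. */
#define TEMP_F_MIN (-129.0)
#define TEMP_F_MAX (134.0)

/* Hypothetical helper: returns true only if the reading lies within
 * the plausible outdoor range. */
bool temp_f_is_plausible(double temp_f)
{
    /* A NaN compares false against everything, so it is rejected too. */
    return (temp_f >= TEMP_F_MIN) && (temp_f <= TEMP_F_MAX);
}
```

A caller would test each reading and show something like "--" instead of a value that fails the check. Note that a range check alone would not flag 119° with snow on the ground, since that number is inside the record range; catching it would need a cross-check against other data.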

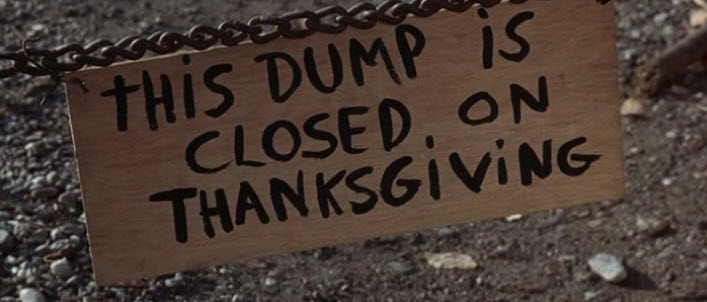

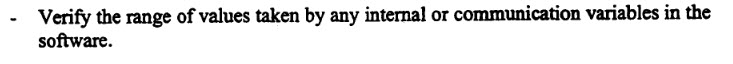

Closed on Thanksgiving? Must be a software problem.

We had never heard of a dump closed on Thanksgiving before, and with tears in our eyes we drove off into the sunset looking for another place to put the garbage.

I could include many more examples (unfortunately). These are not new issues. The 1996 failure of the Ariane 5 launch vehicle was due in part to a variable that overflowed. In fact, there were seven such variables which should have been monitored, but according to the inquiry board that investigated the accident, three weren't, and no one knows why. One recommendation the board made reads:

One of the rules of hardware engineering is to be a pessimist. In designing stuff, like circuits and structures, one always does worst-case analysis. What could go wrong? Sure, this capacitor is 10 μF at room temperature. What happens on a hot day? Suppose R1 is at the low end of its tolerance while R2 is at the high end: how does the circuit behave then? Absent other information, a wise designer always uses components' worst-case specs.

We should do the same in software engineering. What if this function is passed an "impossible" value? What if a calculation gives a result that simply makes no sense? It is hard to anticipate all of these error conditions. But that's our job. |
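A narrowing conversion is one common place where an "impossible" value turns into an overflow. The sketch below shows one pessimistic way to handle it in C: refuse the conversion unless the value fits. The function name and the choice to report failure through a return code are assumptions for illustration, not a description of any particular project's code.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: convert a double to int16_t only if it fits.
 * On success, writes the value (truncated toward zero) to *out and
 * returns true. On failure (NULL pointer, NaN, or out of range),
 * returns false and leaves *out untouched. */
bool double_to_int16_checked(double value, int16_t *out)
{
    if (out == NULL) {
        return false;
    }
    /* Written so that NaN fails: any comparison with NaN is false. */
    if (!(value >= (double)INT16_MIN && value <= (double)INT16_MAX)) {
        return false;
    }
    *out = (int16_t)value;  /* in range, so the conversion is well defined */
    return true;
}
```

The caller is then forced to decide what an out-of-range result means, whether that is saturating, logging a fault, or falling back to a safe state, rather than silently carrying on with garbage.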

||||||||||

| More on Datasheets | ||||||||||

A lot of people wrote in about my take on datasheets in the last Muse. Peter Kazakoff wrote:

Remco Stoutjesdijk has some first-hand experience on both sides of the issue:

Bob Snyder had some ideas:

Stephen wrote:

Officer Obie tweeted:

|

||||||||||

| Jobs! | ||||||||||

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter. Please keep it to 100 words. There is no charge for a job ad.

|

||||||||||

| Joke For The Week | ||||||||||

Note: These jokes are archived at www.ganssle.com/jokes.htm.

Not really a joke, but amusing: Ian Stedman wrote:

I noticed something strange upon re-reading your 'Embedded Muse' column #337. On the picture of the multimeter (I didn't enter to win that one - I have plenty of meters. Let some other worthy win) the '6' (six) on a seven-segment display has its top bar illuminated. On the picture from the logic analyzer (that you swiped from the manual), the '6' (six) does NOT have its top bar illuminated. Now, personally, I'm in the camp that if that top bar ain't there, it's a 'b', not a six, and I will have no truck with the blasphemers who say otherwise. Conversely for a nine, without the bottom bar, that's not a nine, that's a backwards P. The matter is entirely irrelevant, but I think it might be amusing to some of your readers. Should sixes and nines have their top and bottom bars illuminated or not?

Here are the pictures Ian referenced:

|

||||||||||

| Advertise With Us | ||||||||||

Advertise in The Embedded Muse! Over 27,000 embedded developers get this twice-monthly publication. |

||||||||||

| About The Embedded Muse | ||||||||||

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster. |