Jon Titus' laments about the pain of getting an MCU configured generated a huge number of replies. Some of them follow:

Paul Carpenter wrote a long note about this issue here.

Russ Ramirez wrote:

|

Much sympathy to Jon Titus. Energia, and now mainstream Arduino IDE 1.6.10+ with Energia libraries, is a better way to go for playing than CCS. I gave up on the latter long ago... |

Charles Manning is unhappy about the overhead in some of these libraries:

|

I have a very different experience with vendor libraries, mbed, etc. than Jon does.

These libraries are designed to make the part look very simple to use and therefore encourage their use. Very seldom do these libraries actually make for the useful basis of a product unless you massively over compensate (i.e. are using a 70 MHz 32-bitter to do things we would have done in the 1980s with an 8-bitter).

Two examples from projects I'm currently working on:

The first uses an STM32 at 72MHz. This project needs to generate quite a few different signals not supported by peripherals by bit-banging GPIOs. With the "warm fuzzy" libraries the fastest square wave you can achieve is about 4 MHz - not fast enough (absolute minimum is 12MHz). Spend a bit of time looking at the data sheets and figuring out the direct register calls, and you can get about 17MHz.

The second is a system based on an NXP LPC43xx. This device streams data at approx 12MBytes/second (read by CPU doing 250k interrupts per second) into buffers from which it can be sent by USB. The USB libraries are only "hello world" performance level and are incapable of sending data at any more than about 1MBytes/sec. The DMA library just glosses over the more useful DMA settings (burst size etc) causing unacceptable sampling jitter due to DMA bus contention with the CPU. End result: dig through data sheets and write my own functions.

If I relied on those vendor libraries for either of these products they would have either been abandoned or would have had to be massively redesigned to use far more expensive and more power-hungry circuitry. That would have cost my customers huge amounts of money.

These libraries are designed to lure in developers by showcasing some capabilities, but they seldom get anywhere near the optimum performance of the silicon.

So my advice is to never rely on those libraries being up to the job. If they happen to work for you - well that's a windfall. |
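Charles doesn't say which registers he ended up writing, so the following is only a minimal sketch of what "direct register calls" for bit-banging can look like. It assumes the bit set/reset register layout documented for STM32 GPIO ports (low 16 bits set a pin, high 16 bits clear it). The type `gpio_port_t` and the helpers `pin_high()` and `pin_low()` are hypothetical names for illustration; on real hardware the struct would be overlaid on the port's address from the vendor header rather than on an ordinary variable.

```c
#include <stdint.h>

/* Hypothetical stand-in for one GPIO port. Only the bit set/reset
   register is modeled; a real port has more registers around it. */
typedef struct {
    volatile uint32_t BSRR; /* write 1 to bits 0-15 to set, bits 16-31 to clear */
} gpio_port_t;

/* Drive a pin high with a single store: no read-modify-write, no library call. */
static inline void pin_high(gpio_port_t *port, unsigned pin)
{
    port->BSRR = 1u << pin;
}

/* Drive a pin low with a single store to the upper half of the same register. */
static inline void pin_low(gpio_port_t *port, unsigned pin)
{
    port->BSRR = 1u << (pin + 16u);
}
```

A square wave is then just alternating `pin_high()` and `pin_low()` calls in a tight loop. Each call compiles down to roughly one store instruction, which is where the speedup over a general-purpose library function comes from. The frequency actually achieved depends on the part, the bus clocks, and the compiler settings.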

Ray Keefe is sympathetic to TI:

|

The TI port complexity problem is really an ARM IP issue. The ARM technology licensed into the TI product is where the complexity arises. And although there are varying degrees of wizards from different vendors, we usually spend a day reviewing the IO mapping for a new design.

It isn't just that setting up the ports is complex. You also have to make sure you have allocated pins so you can access all the functions you want at the same time. If you want to use all the UARTs for instance, that may clash with some of the IO allocations for other peripherals you also want. You may not be able to use the Ethernet peripheral and the last UART at the same time. So this also affects chip selection. You might need the 100 pin version instead of the 64 pin version just to get the pin allocations you want. So this problem goes deeper than just setting up pins.

Because we develop a lot of products each year, we are adept at this complexity but I do understand it can be daunting to even find out what you need to do. On an Atmel AVR, if you enable the UART then the pins are automatically connected to the peripheral at the same time. No such expediency with a Cortex M4 ARM device. That UART may have 3 separate pin allocations possible for it. And correctly configuring the UART doesn't guarantee any communications can happen yet. So in these devices there currently isn't a substitute for wading through the details.

And I fully agree with the comment on TI Manuals which run to 700 pages and still don't seem to contain the basic information you are looking for. |

Lars Potter wrote:

|

IMHO that is only the tip of the iceberg. The hardware engineer I work with asks me for basic requirements (number of GPIO pins needed, needed interfaces such as I2C, UART, ...). He then goes off and decides which chip to use based on price, package, ... This sometimes means a different CPU from a different vendor with a different architecture for each project. (With very exotic requirements and high volume projects this is the only way to go.)

The usual approach of using vendor supplied frameworks gives you vendor lock in for free! mbed and the like will always have a limited set of supported hardware. And they always introduce bloat.

Then having a somewhat working firmware is not what we aim for. Once you get deeper into development, the code, especially the vendor provided stuff, shows terrible bugs. So instead of "just using" the framework we now have to understand and debug it.

I have no problem with looking into the data sheet, and sometimes it is essential to use very specific hardware features to get a really good solution.

I also don't blame the vendors. It is not their job to provide us with perfect HALs for each exotic project. But paying more for the MCU just because we have a working UART stack for that vendor also feels wrong.

Doing a universal HAL in C for all hardware without introducing bloat is impossible. Not everything can be fixed by a faster CPU.

Maybe we need a different programming language / tool set for HALs? Implementing I2C drivers all the time cannot be the solution, right? |

A reader who wishes to remain anonymous wrote:

|

I echo Jon's frustration with the TI Launchpad and CCS. It is a total horror show in my opinion. One only needs to look at Microchip's offering to realize just how it should be done. With the PICkit one can be blinking an LED in an hour. Add another hour and you are doing it with timer interrupts. The tool makes it a cinch to take an image off of the development kit (or any design) and save it off. It's also good for field programming as no IDE is required.

The MSP430 is the only processor I have not used and it is tough going. Unfortunately a design has been handed to me and I'm stuck.

Microchip made a very wise technology choice in developing the new (ok now it is 4+ years old) IDE in that it runs on Java. A new release of the IDE comes out for Windows, Mac and Linux all at the same time. I'd be hard pressed to know what platform it is running on. In my experience designs can move from one platform to another with little or no difficulty. |

Gary Lynch has had experiences similar to Jon's:

|

I sympathize with Jon Titus' anguish over the TI LaunchPad, having used both TI products and Code Composer Studio. I have observed over the years that eval boards are supported by engineers with about 2 years of experience. Once they hit 3 years they are promoted to management or fired and replaced with younger designers, but the experience level never goes up. So if you have been working more than 2 years, you are always going to be frustrated with these products. (This extends to almost all brands I have used and is not unique to TI.)

Then they are tasked with writing code for an entire family of processors with tiny architectural differences that are hard to keep straight, across half a dozen wildly-different IDEs; and they tend to come up with a single source deck that handles all the diversity with structures, unions, macros, and pre-processor constants set on the command line: i.e. a solution to their problem--not yours. Reverse-engineering a junior engineer's code is more work than reading the data sheet.

At this point in my career, I think these packages are only useful if you need to show your boss some kind of result real quick, using only the projects in the kit without modification (so the boss needs to be impressed by blinking LEDs).

I have used a number of vendor-provided code generators that initialize your I/O ports using settings you entered into a GUI and they are only helpful if they work out of the box. If there are problems with the initialization, you are totally clueless and have to read the datasheet anyway.

Just my $2.0E-02 worth. |

Joe Martin sent this:

|

I wanted to add my thoughts to those from Jon Titus in issue 321 about the state of vendor tools with complex, modern MCUs, and about the Tiva line from TI specifically. It's been quite a while since I looked at CCS, TI's IDE offering, so I won't speak to that here. Instead, I've had success using Eclipse and the GCC tools for my projects targeting ARM Cortex-M processors. The GCC tools are well integrated into Eclipse (they weren't in the past, and I'd encourage those who didn't use them for this reason to give them another chance), and allow me to do coding, building, and debugging from a single interface. While there isn't a hardware specific configuration GUI like Jon described, I've found that one isn't really necessary for working with the Tiva parts.

I use the TM4C1294NCPDT in several designs, which is the Big Brother of the processor Jon wrote about. I've found TI's Peripheral Driver Library very useful (after an initial learning curve), and usually only require 2-3 lines/function calls to fully configure a processor peripheral. This library handles most of the GPIO setup in Jon's example, which I suspect was left in to show what is normally hidden from the user. This example also illustrates a special case on this processor, which is pin 0 on Port F. This pin can be muxed inside the processor to be a Non-Maskable Interrupt instead of a GPIO pin. Due to the critical nature of this pin, any changes to its configuration will be blocked until the user "unlocks" it by writing a special value to the port's unlock register (GPIO_PORTF_LOCK_R = GPIO_LOCK_KEY;). This is only necessary for pins which can be used as the NMI or JTAG interface, 9 pins in total. I've seen this done many times before, on many different processors. Automating this step seems to blunt the effectiveness of having critical pin lockouts on the device in the first place, and makes it more likely that a developer will use this pin without realizing that it's special.

I think the lesson here, at the end of the day, is that we have to remember that we're working with powerful, complex devices, and that consulting the manuals/datasheets/API documents/code examples isn't optional. I'm a big fan of the Tiva Launchpads, but I'll also be the first to admit that they are anything but simple. |
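The unlock step Joe describes is short once you know it exists. Here is a minimal sketch, assuming the usual two-step sequence: write the key to the lock register, then set the pin's bit in the port's commit register. The key value is the one TI's device headers define as `GPIO_LOCK_KEY`. The struct `gpio_lock_regs_t` and the function `unlock_pin()` are hypothetical stand-ins so the logic can be read and compiled without the hardware; real code would write `GPIO_PORTF_LOCK_R` and the matching commit register directly.

```c
#include <stdint.h>

#define GPIO_LOCK_KEY 0x4C4F434Bu /* unlock value as defined in TI's device headers */

/* Hypothetical stand-in for the two registers involved in the unlock. */
typedef struct {
    volatile uint32_t LOCK; /* plays the role of GPIO_PORTF_LOCK_R */
    volatile uint32_t CR;   /* plays the role of the port's commit register */
} gpio_lock_regs_t;

/* Unlock one protected pin so later configuration writes take effect. */
static void unlock_pin(gpio_lock_regs_t *regs, unsigned pin)
{
    regs->LOCK = GPIO_LOCK_KEY; /* step 1: open the commit register for writing */
    regs->CR |= 1u << pin;      /* step 2: allow changes to this pin only */
}
```

For the Port F, pin 0 case in Jon's example this would be `unlock_pin(&portf, 0)` before the normal GPIO setup calls. The stand-in struct does not model the hardware's refusal to accept writes while locked; it only shows the order of operations.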

Jim Karpinski contributed:

|

I agree with the "We Need Simpler Ways to Access IO on MCUs". I am using the NXP LPC4330 chip and NXP provides a pin mux tool. It sounds great except that it is worthless. Besides all the usual disclaimers, there is this in the generated include file:

" * @note This file is for reference only. * PINMUX(Pin address, Function Number) need to be defined by user.".

The NXP pin mux tool generates a series of PINMUX() macro invocations. This doesn't provide much help when you need to write the PINMUX() macro yourself. The NXP tool does have a nice graphical interface that generates the IO config include file. It's just like they didn't finish the tool, which is worse than not providing anything. |

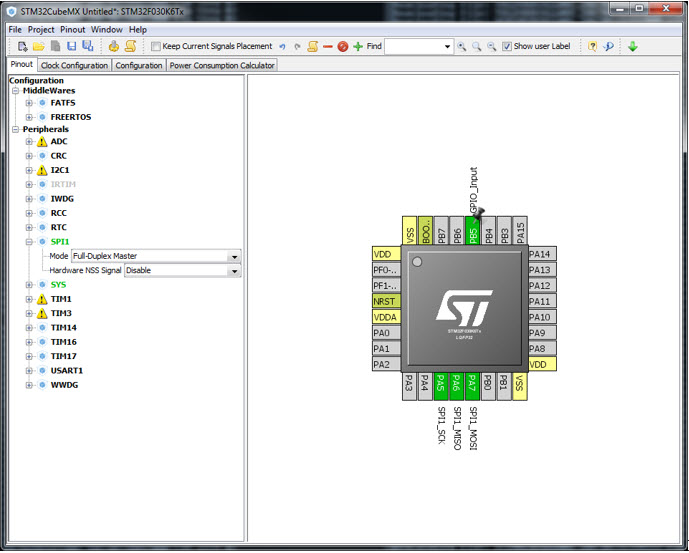

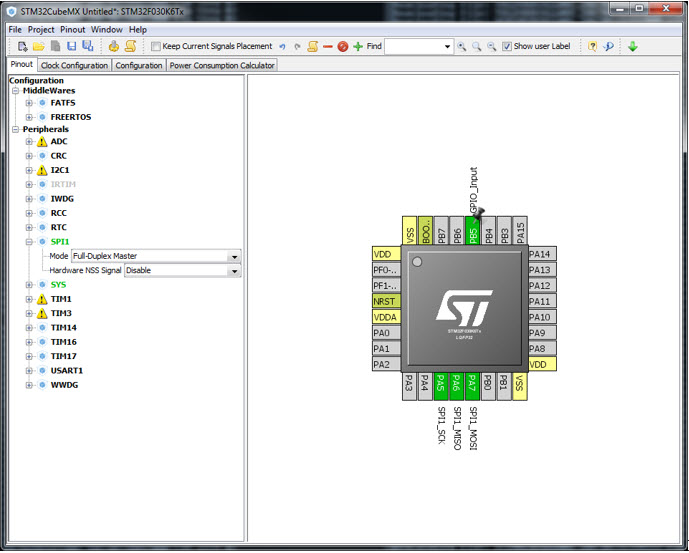
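To give a feel for the piece Jim says the tool leaves out, here is one way a user-supplied `PINMUX()` macro might look. This is a sketch under assumptions, not NXP's definition: it assumes each pin has its own 32-bit configuration register at the given address and that the function number occupies the low three bits, with the remaining bits (pull-ups, input buffer and so on) left untouched. The name `PINMUX_FUNC_MASK` is made up for this example.

```c
#include <stdint.h>

#define PINMUX_FUNC_MASK 0x7u /* assumed: function select lives in bits 2:0 */

/* Hypothetical user-side definition of the macro the generated file expects.
   Read-modify-write so the other configuration bits of the pin are preserved. */
#define PINMUX(pin_addr, func)                                        \
    do {                                                              \
        volatile uint32_t *reg_ = (volatile uint32_t *)(pin_addr);    \
        *reg_ = (*reg_ & ~PINMUX_FUNC_MASK) |                         \
                ((uint32_t)(func) & PINMUX_FUNC_MASK);                \
    } while (0)
```

With something like this in place, the list of `PINMUX()` invocations from the generated include file could be dropped into an init function as-is. Check the chip's user manual for the real register layout before trusting the mask.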

Matthew Mucker likes ST's STM32CubeMX:

|

Jack, to follow up on Jon Titus' gripe about setting up I/O on complex microcontrollers, I think that ST's STM32CubeMX is a step in the right direction. In the graphic below, I've configured PB5 as a GPIO input and SPI1 has been assigned to pins PA5-PA7. A menu item will create source code for me that does all of the peripheral setup based on my choices in the GUI. The Clock Configuration tab is extraordinarily useful for ensuring the many clocks in the chip are set to appropriate frequencies, and the Configuration tab allows more granular settings for each of the items selected in the pinout tab. Regrettably, installing STM32CubeMX and the required chip-specific libraries isn't as intuitive as it could be, and the interface between this GUI and your preferred editor/toolchain has a learning curve, but I think ST is on the right track here.

|

Ralph Moore wrote:

|

Since American universities abandoned teaching embedded engineering at precisely the wrong time (now, when it is most needed), it is good to see companies, such as yours, trying to rectify their dumb error. Nonetheless, nuts-and-bolts embedded software engineering is moving to India and China. Having hired American embedded software programmers who did not last due to pathetic skills, our last three (and successful) hires have been Chinese embedded software programmers who do not want to live there. When old-timers like you and me really retire, there will be only Java apps programmers on this side of the Pacific.

I liked Jon's statement: "They're not." - pithy and powerful!

He points out a serious problem. Our industry has become dominated by Marketers of More and young engineers who think Works Is Good Enough. I was taught and have always strived to find the simplest solutions to complex problems. Of course this takes extra work - complexity is only the first cut, not the final product. So we are seeing layers of complex, inefficient software on top of complex, inefficient hardware -- SOUP on HOUP. ARM is on the path of risk, not RISC.

Now these pots of OUP (POUP) are being put on the Hacker's Highway and some people wonder if they will be safe! |

Vic Plichota is using the LaunchPad:

|

> The board's processor chip--a Tiva-family TM4C123GH6PM

[snip]

> which requires many GPIO setup functions:

Hah, indeed! I'm fooling with the same LaunchPad right now, and just wait until you start wrassling with the A/D converter.

I can say the same for NXP's (and doubtless other vendors') "advanced peripherals".

Still, you can't expect a simple API or setup procedure to accommodate such flexibility as these subsystems offer, and we pay the price wrt spending many more hours reading the docs, than writing code.

(Oh boy, try TI's RM57 Hercules' DMA controller, if you want to spend *days*.)

IMHO, it would be a big help if:

1 - the docs explained *why*, as well as "how", and

2 - the feedback-loop was tightened, so that customers were also privy to the same maddeningly special "magic" knowledge that the test-engineers and demo-writers possess.

3 - "HOWTO" shortcut tutorials should be written in plain English or pseudocode. It's not enough to simply say "use this support-library API thusly", because that doesn't explain a damn thing -- and we need to know how this silicon actually works and why it needs to be set up in such-and-such a way, without relying on blind trust or agonizing over vetting other people's code. |

Jack A. Everett wrote:

|

You may already know that NXP (Freescale) provides Processor Expert, which fulfills to some extent the wishes of Jon Titus. |

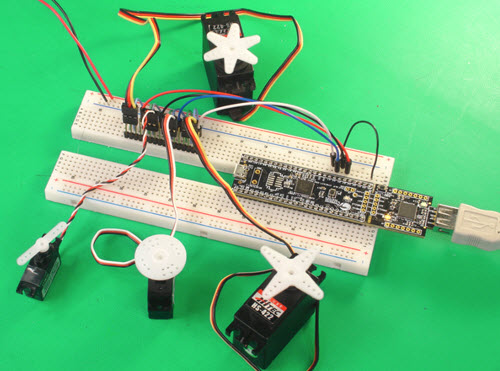

Finally, Jon Titus, whose comments started all of this, writes about the Cypress tools:

|

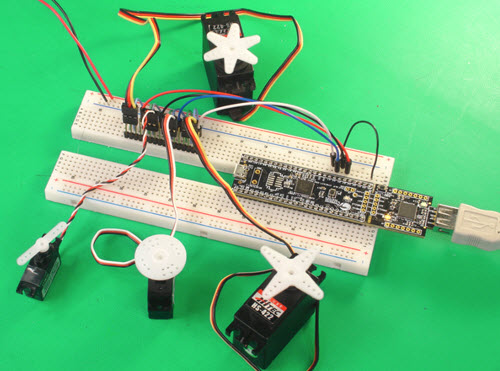

I should have mentioned Cypress in my piece as an example of what others should strive for. I have several of the company's boards and kits and think they're great. No comparison with most other MCUs. The PSoC Creator tool is wonderful. As a demonstration I showed an engineer how easy it is to control four servo motors (see photo). Pull up a PWM block, drag it into the schematic area and make a few connections. I calculated the PWM periods and needed clock frequency. Then I quickly duplicated this arrangement three times. The PWM pop-up menu makes setup a breeze, and it links directly to the PWM data sheet. The combination of the PSoC Creator, the Cypress architecture, and ARM Cortex-M3 and -M4 processors simplifies designs.

|

|

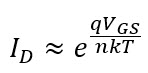

We think of FETs operating in one of three regions: the first is at cutoff, when no current flows. Then there's the ohmic and saturation modes where the FET is on. But the cutoff, AKA subthreshold, region is actually more interesting than the device simply being "off."

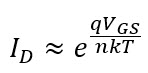

Vth is the threshold voltage; it's the voltage needed to turn a FET on. But when the gate-source voltage (VGS) is less than Vth some electrons do enter the channel, so there is some current flow from the source to the drain. Simplifying things, in the subthreshold region drain current is:

Where q is charge, n is a constant, k is Boltzmann's constant, and T is temperature. Obviously, drain current is highly non-linear with the gate-source voltage.
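For reference, the simplified textbook form that uses exactly these symbols is given below. The prefactor $I_0$ is not named in the text above; it is assumed here to be a device-dependent constant that lumps together geometry and process terms.

```latex
I_D \approx I_0 \, \exp\!\left( \frac{q\,(V_{GS} - V_{th})}{n\,k\,T} \right)
```

At room temperature $kT/q$ is about 26 mV, so with $n$ near 1 every drop of roughly 60 mV in $V_{GS}$ cuts the drain current by a factor of ten. The same expression also shows the temperature sensitivity: $T$ sits in the denominator of the exponent, so a modest change in temperature shifts the current exponentially.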

Vth depends on a lot of factors, but is typically around half a volt. This means subthreshold operation is in the couple of tenths of a volt range. As the equation shows, the drain current is also highly dependent on the FET's temperature. That's a good reason for avoiding operation in this region. But a circuit running there can offer extremely low active power consumption - as much as an order of magnitude lower than in the ohmic region.

The IoT needs very low-power MCUs and associated electronics to run for long times from batteries or energy-harvesting sources. Ambiq has a new MCU whose transistors operate in the subthreshold region. Their new Apollo2 has a (claimed) better than 10 µA/MHz running spec. That's an impressive spec for a 32-bit processor with gobs of on-board I/O.

Ambiq's engineers tell me it uses calibration structures, on-chip temperature monitors with compensation, and other mysteries shrouded in NDAs to run reliably over a wide temperature range.

I have an Apollo eval board here but haven't had a chance to play with it yet.

Note: This section is about something I personally find cool, interesting or important and want to pass along to readers. It is not influenced by vendors. |