|

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. |

| Contents |

|

| Editor's Notes |

Better Firmware Faster classes in Denmark and Minneapolis

After over 40 years in this field I've learned that "shortcuts make for long delays" (an aphorism attributed to J.R.R. Tolkien). The data is stark: doing software right means fewer bugs and earlier deliveries. Adopt best practices and your code will be better and cheaper. This is the entire thesis of the quality movement, which revolutionized manufacturing but has somehow largely missed software engineering. Studies have even shown that safety-critical code need be no more expensive than the usual stuff if the right processes are followed. This is what my one-day Better Firmware Faster seminar is all about: giving your team the tools they need to operate at a measurably world-class level, producing code with far fewer bugs in less time. It's fast-paced, fun, and uniquely covers the issues faced by embedded developers.

I'm holding public versions of this seminar in two cities in Denmark, Roskilde (near Copenhagen) and Aarhus, on October 24 and 26. More details here. The last time I did public versions of this class in Denmark (in 2008) the rooms were full, so sign up early! The discount for early registration ends September 24.

I'm also holding a public version of the class in partnership with Microchip Technology in Minneapolis on October 17. More info is here. The discount for early registration ends September 17.

Or, I can present the seminar to your team at your facility.

|

| Quotes and Thoughts |

|

"The problem with object-oriented languages is they've got all this implicit environment that they carry around with them. You wanted a banana but what you got was a gorilla holding the banana and the entire jungle." - Joe Armstrong, sent by Paul Carpenter. |

| Tools and Tips |

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

David Fernandez Pinas recommends Ditto for expanding the clipboard in Windows. |

| Freebies and Discounts |

This month's giveaway is a 30 V 5 A power supply. Alas, it takes 110 VAC only. (It's hard to imagine anyone designing a product that way in this day and age.) But it seems like a nice unit.

Enter via this link. |

| More on Backup Strategies |

The last Muse's article about backing up computers generated a lot of dialog. Robiee Tonneberger wrote:

|

After reading today's article on A Backup Strategy, I thought I'd add to the discussion.

I have 5 storage centers. The C drive in Windows 7 under VMWare is where I write my embedded F/W, which is then backed up to the Mac's drive every couple of hours, as well as to my 2TB USB HD under the laptop stand.

I also have an Apple Time Capsule that provides the house's WiFi as well as 3TB of space that backs up my two computers every 15 minutes, every hour, every day and every week. Should either machine suddenly die, I can restore the complete disk image from not more than the last 15 minutes of its predecessor's life.

We hear all sorts of names for storage media, but my fifth drive definitely confuses everyone I tell about it, except perhaps engineers who grew up in the country. At least once a month I go out to my barn to fetch my "barn drive" that backs up all my irreplaceable folders. Why a hard drive in my barn, you ask? Because should the house burn down, I still have all my last saved files in the barn. |

David Koellen contributed:

|

I read 'A Backup Strategy' in the 8/15 email and thought you should check out www.iosafe.com for backup drives that are fireproof and waterproof. They can be used for backup and will survive a fire and the ensuing water used to put out the fire. They are more expensive than your run-of-the-mill backup drives but worth it for peace of mind in case of a fire. You can also bolt them down to the floor to make them resistant to theft. I have been using them for years with good results and fortunately have not tested the fireproof or waterproof features!

They also make enterprise-level products, including fire- and water-resistant NAS, and backup and data recovery servers. |

Sergio Caprile likes Bacula:

|

I do love Bacula... he is in charge and sucks data when appropriate... been using it for many years. That is for information (datasheets and stuff).

For systems, I run a full system partition backup once in a while with Clonezilla and a USB drive; there is not much installation stuff going on. User data is on a different partition.

Source code is in CVS (yes, really; I enforce centralized storage for company projects). External developers use git via gitolite, and documents and data (Micro-Cap files, etc.) are in SVN. Repositories are cross-copied to another location for geographical redundancy, synced every Saturday. Servers use a cheap 2-disk RAID1. |

Charles Manning doesn't like FAT:

|

On backups: if you want a solid backup system, there are a couple of main points to make:

1) Don't use FAT file system. FAT sucks badly. The NT file system is better, but Linux file systems such as ext3 are way better.

2) Preferably don't use Windows for any of your servers. That frees you from ransomware worries and gives you better options such as ext3.

I use Dropbox for a lot of my stuff (accounts, data sheet archive, development notes...). I find this very handy because:

1) It keeps an offsite copy automatically.

2) It also replicates onto all my 4 or so computers meaning I always have the data I need with me.

With these policies in place I can literally go to a new town (or country), buy a new laptop and restore my whole professional life in less than a day. |

David Fernandez Pinas recommends using the Linux strategy outlined here.

Bastian Neumann wrote:

|

Adding to the Backup Strategy from the last Muse, I can add a little bit of my strategy to get around the ransomware issue.

I back up files every night to a NAS running Linux with the system drive mounted read-only. The files are available in a 15-day ring buffer. Access to the files is via an SMB server, but read-only. We had the ransomware issue once and did not lose a single file. We just had to copy the read-only files from the network back onto the ordinary hard drive. |

Martin is another Linux fan:

|

At home, I use a NAS (network-attached storage) box. It runs some kind of Linux, so I can access its volumes using standard Linux tools like rsync. Using rsync, e.g. from a cron job, is a very simple way to back up your files from a Linux box (at home, I prefer to use Linux). In addition, I have a CVS repository on the NAS box for my private software projects, and I use unison to synchronize selected directory paths between various machines. In the end, all important data is copied to the NAS box using some scripted solutions. I do prefer scripting and automating these things; it is less prone to manual errors. On a regular schedule, I do a manual backup of the NAS box to an external USB drive (connected to any Linux box available) using rsync. The USB drive is still stored inside my home, but in another room.

I've no automated strategy to back up the remaining Windows box; this is done manually by simply copying important files to the NAS box. |

Ron Aaron has a different approach:

|

I've found the most reliable backup method for my data is to use a distributed "SCM" (I use Fossil) and to store the repository on multiple devices.

Though Fossil's intended for source-code, I use it as well for documents in binary formats. I create a repository for each project, and clone it on different devices I use.

What's this provide?

* Ease of updates. Changes I make to a file on one device can be easily mirrored on another.

* Reliability. Since the SCM stores all historic versions of a file, I can go back in time to a known-good version, should someone or something corrupt things.

* Security. I store my project repos on a server I control, and allow access only via HTTPS and to users for whom I control access rights.

* Defense in depth. If my main server goes down, or is corrupted, I still have the repos available on other machines (and can serve from them in a few minutes, if needed).

I don't bother with regular backups any more. I simply copy GPG-encrypted versions of my repositories to a cloud provider for off-site storage once a day, and rely on the local 'live' repos for normal use. So far, I've never had to restore from the cloud's version of things -- it's more for disaster recovery than anything else. |

Paul Carpenter had some good thoughts on questions to consider:

|

On some of my customers' servers I use ROBOCOPY, which allows multithreading and backup-mode transfers, with all sorts of exclude lists (who needs Word temp files '~*.*', most log files, or temp folders?). It lets the user exclude Windows junctions (the Windows version of Linux hard/soft links for files and folders), so you do not get a recursion issue on My Pictures, etc. It has its own logging capability, so you can log your batch files and the actual copying details in a separate file. There is also a TEE option to log to both the console and the log file.

Robocopy is available as a better alternative to XCOPY on Windows 7 and above. It might also be available on Vista. This obviously includes Windows Server 2008, which is only Windows 7 with Server extensions.

The important questions you need to ask before setting up a backup strategy:

1/ How much data/time loss can I withstand when a catastrophe happens?

2/ How far back do I need to go? (How many versions)

3/ How quickly must my recovery be? How fast for different failure scenarios? |

|

| Active/Idle Meters |

Andreas Hagele had a twist on an idea from Muse 310 to measure active or idle time:

|

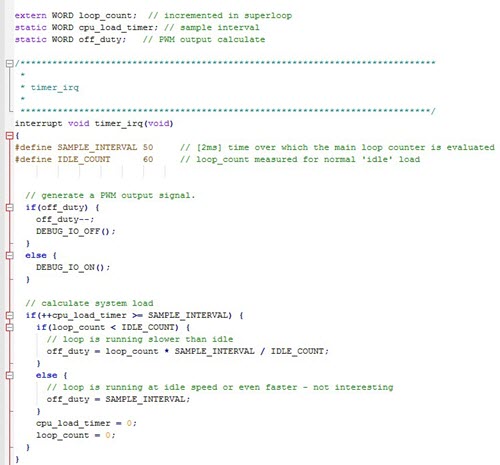

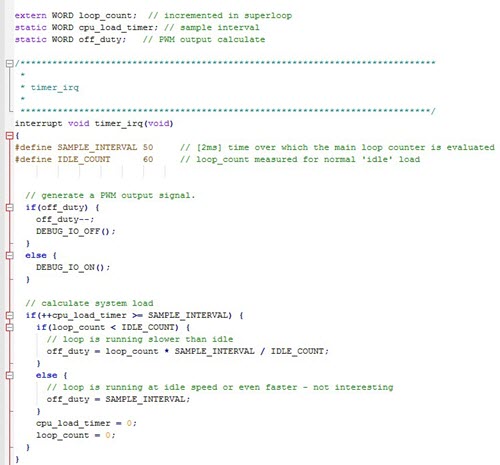

Having a Superloop really means there is no idle time at all. The load of the system is defined by the speed the main loop runs. And it's deadly if one module eats up too much time and slows down everything else too.

So my approach is to measure the loop speed and toggle a DIO output accordingly in a PWM fashion to connect a voltmeter.

To measure the speed a time reference is needed. In my case that is a 2ms timer interrupt. Then it's simply a matter of incrementing a loop counter in the Superloop and reading it at a fixed interval, in my case every 100ms (i.e. 50 timer interrupts). With this method there is no need to add any time delays. The only extra time needed is for the load calculations and debug IO output.

Depending on the speed of the main loop, the reading needs to be scaled and offset to get the widest possible output range. In my case the 'idle' speed is about 60 loop runs within the interval. I then scale the output so that this idle rate gives me 0 or very low output. Due to the integer arithmetic the code for that is not very generic and would need some tuning for other systems.

When the loop rate drops the output goes up, with only a very few loops per interval resulting in the maximum output value.

In general I'm not interested when things run faster than normal, so all readings above the 'idle' rate result in a 0 output.

My simple 8-bit MCU has no PWM output, so a simple duty cycle across the same timer interval is generated. Adding an RC filter to the output (10kOhm and 10uF in my case) allows the signal to be fed into an analog or digital meter.

For capturing longer time intervals an oscilloscope can be used on the slowest horizontal time setting. The screen could then look a bit like the fancy line graphs the 'real' operating systems offer.

Below the code snippet of the timer interrupt doing the load calculations.

Instead of an analog output it could also easily be changed to look for peaks and drive an LED. Flickering would then show a heavy load. Adjusting the constants for the device inspected will need a bit of attention so the output covers the range of interest. I have already found a few surprises in my code where things take much longer than I had assumed.

|
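For readers who want to experiment with this idea, here is a minimal sketch of the scheme described above. It is not Andreas's code: the function names, the linear scaling, and the constants (50 ticks per interval, an idle rate of 60 loop passes) are assumptions chosen to match the numbers in his description, and the hardware pin write is left to the caller.

```c
#include <stdbool.h>
#include <stdint.h>

/* All names and constants here are illustrative assumptions. */
#define TICKS_PER_INTERVAL 50u  /* 50 timer ticks of 2 ms = 100 ms */
#define IDLE_LOOPS         60u  /* loop passes per interval when unloaded */

static volatile uint16_t loop_count; /* incremented by the superloop */
static uint8_t tick_in_interval;     /* position within the interval, 0..49 */
static uint8_t duty_ticks;           /* ticks the output stays high, 0..50 */

/* Call once per pass through the superloop. */
void load_meter_loop_pass(void)
{
    loop_count++;
}

/* Map the loop passes counted in one interval to a duty cycle in ticks.
   At or above the idle rate the result is 0; zero passes gives full scale. */
uint8_t load_meter_duty(uint16_t loops)
{
    if (loops >= IDLE_LOOPS) {
        return 0u;
    }
    return (uint8_t)(((IDLE_LOOPS - loops) * TICKS_PER_INTERVAL) / IDLE_LOOPS);
}

/* Call from the 2 ms timer interrupt. Returns the level to drive on the
   debug pin: high for the first duty_ticks ticks of each interval. */
bool load_meter_tick(void)
{
    if (++tick_in_interval >= TICKS_PER_INTERVAL) {
        tick_in_interval = 0u;
        duty_ticks = load_meter_duty(loop_count); /* load of the last interval */
        loop_count = 0u;
    }
    return tick_in_interval < duty_ticks;
}
```

In use, the superloop would call `load_meter_loop_pass()` once per pass and the timer interrupt would write the result of `load_meter_tick()` to the output pin. On an 8-bit MCU the 16-bit increment is not atomic, so a real implementation might use an 8-bit counter or briefly mask the interrupt. With the 10 kOhm and 10 uF values quoted above, the filter time constant is RC = 0.1 s, the same as the 100 ms duty-cycle period in this sketch, so a fast meter will still show some ripple.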

Graham instruments code like this:

|

Jack, saw your piece on debugging outputs. I use an LCD built for a mobile phone and the Adafruit_ILI9341 library to drive it. The embedded hard/software runs equally well with or without the LCD attached, and there's room for all kinds of debugging output on it.

Sound is my input, so having a graphical device on which to display output is really useful when evolving the application. |

|

| On Windows 10 |

Bob Snyder on Windows 10:

|

I've been offline for a couple of days due to reinstalling Windows. I recently bought a solid state drive to replace my hard drive, so I decided to do a clean install of Windows 7 Ultimate on the new drive. The installation went fine, but when I tried to install all of the updates using the Windows Update feature, I ran into trouble. Apparently I'm not alone. Articles have been written about the problem.

http://www.computerworld.com/article/3062154/windows-pcs/patching-windows-7-can-be-painfully-slow.html

http://www.zdnet.com/article/sticking-with-windows-7-the-forecast-calls-for-pain/

It seems that when you perform a clean install, and Windows needs to download hundreds of updates, the process is excruciatingly slow, and there is no reliable way to tell whether the process is hung or just taking a long time. Some people have reported waiting as long as 12-48 hours before abandoning the update. Numerous "fixes" are suggested by numerous websites. I tried many of them, but had no success.

After wasting an entire day, I decided to buy a copy of Windows 10 Professional. I'm glad that I did. The installation was a snap. All of my existing hardware and software are working fine, and the user interface is really not that much different from Windows 7. I've only been using it for a day, but I'm beginning to think that the negative reviews of Windows 10 are making mountains out of molehills. With Windows 10 and my new SSD, my four-year-old laptop has never been snappier. |

I'm running Windows 10 as well and have had no problems with it. |

| Jobs! |

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter.

Please keep it to 100 words. There is no charge for a job ad.

|

| Joke For The Week |

Note: These jokes are archived at www.ganssle.com/jokes.htm.

From Charlie Moher:

How was copper wire invented?

Two Scotsmen fighting over a penny. |

| Advertise With Us |

Advertise in The Embedded Muse! Over 27,000 embedded developers get this twice-monthly publication. |

| About The Embedded Muse |

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com.

The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster.

|