|

You may redistribute this newsletter for non-commercial purposes. For commercial use contact jack@ganssle.com. |


| Editor's Notes |

Better Firmware Faster classes in Denmark and Minneapolis

I'm holding public versions of this seminar in two cities in Denmark, Roskilde (near Copenhagen) and Aarhus, on October 24 and 26. More details here. The last time I did public versions of this class in Denmark (in 2008) the rooms were full, so sign up early!

I'm also holding a public version of the class in partnership with Microchip Technology in Minneapolis October 17. More info is here.

Or, I can present the seminar to your team at your facility.

I'm now on Twitter. |

| Quotes and Thoughts |

"For every 25% increase in the problem complexity, there is a 100% increase in the complexity of the software solution." Robert Glass |

| Tools and Tips |

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

Not really a tool... but a computer made from, gasp, transistors! James Newman just finished building a 16 bit processor from 40,000 discrete transistors. His web site gives an excellent overview. I'm not sure why anyone would want to invest £40,000 into building a machine like this, but it sure is cool.

Steve Leibson sent a link to an article about the last remaining paper copy of the Apollo Guidance Computer code. You can view the code used in the Lunar Module here. It's also on Github.

Tin whiskers continue to be a problem in modern electronics. These microscopic fingers grow and can create short circuits. They're tiny; in the olden days they might have just burned out with no symptoms, but today's low-power circuits are more susceptible. And the lead-tin solders of yesteryear were much less likely to form whiskers than today's RoHS solders. Bob Landman sent some interesting links about whiskers in general, and speculation that they may have contributed to unintended acceleration incidents. |

| Freebies and Discounts |

Last month's winner was Brian Gleason.

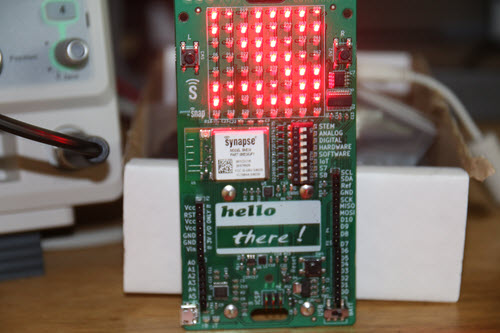

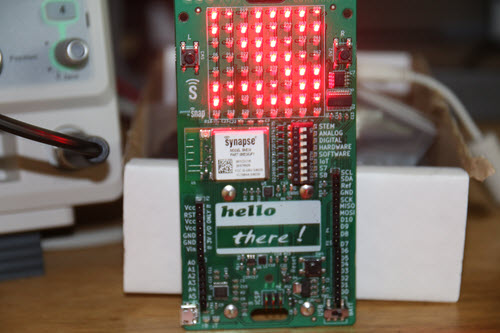

This month's giveaway is an electronic badge from the recent Boston Embedded Systems Conference. Hundreds of these were distributed. They advertise the wearer's interests (set via a DIP switch) and can form mesh networks with badges worn by other attendees. It's a clever bit of engineering and a lot of fun.

Enter via this link. |

| Even More on Software Malpractice |

In response to some questions posed last issue about software malpractice, Bill Gatliff wrote:

|

Tyler Herring asks some interesting questions:

|

Just an interesting side thought on your piece about software malpractice... If it ever came to be, in a legal sense, I do sort of wonder what kind of change it would drive in the industry at large.

1) Increase in indemnity clauses in contracts or product documents (manuals, terms of use, etc.)? |

Not likely to help, though vendors will still try. Law doesn't let you indemnify against negligence, even if the customer signs in blood.

|

2) Contractors or software houses getting insurance for malpractice? |

They should already.

|

3) Increase in code audits/code reviews? Having to document these for legal purposes?

4) Relaxation of project timelines to allow for audits and software cleanup? |

Ditto.

|

5) Does the individual developer assume the liability? His or her boss? The company at large? |

This is the question I fear most in our "gig economy".

It isn't practical to expect an engineer to assume liability for a company's product, and no engineer in their right mind would ever enter into such a relationship. But nowadays everybody is a subcontractor, and the engineer might be forced to choose between taking on that liability vs. unemployment. And, to put it lightly, companies have LOTS of incentives---and leverage---for distancing themselves from liability.

Uber has confirmed many of my fears regarding this subject.

|

8) What the first inevitable really, really big court case to establish case law on this would be. |

It's probably already happened.

Software and electronics aren't unique animals as far as the Court is concerned. In our adversarial system, you can't bring a case unless you've suffered harm. Once that harm occurs, the liability is borne by the active agent: the box that caught fire, the car that ran over the pedestrian, etc.

The pedestrian's claim is at the intersection of the car's bumper and their body. The fact that the car's throttle pedal was ignoring the operator is a secondary concern: the car delivered the injury, so the car must make the claimant whole again. The seized throttle pedal was just an accessory to the event, and that knowledge is useful only for the insurance companies to figure out where to recover THEIR losses from later. |

He also had the following comments:

|

Jones asserts that litigation activities have a -9 or larger impact on product quality. That simplification risks mis-reading the cause and effect of his point.

Having observed a couple of malpractice-related proceedings, and in discussions held both before and after same, I've observed that there's nothing quite like actual discovery and/or litigation to sharpen an engineer's senses regarding their personal impact on overall product quality. When done well, the event can be a HUGE positive turning point for an organization---assuming they survive it, that is.

Good managers teach their employees that the whole point of discovery and litigation is to get at the TRUTH behind an event, and that even if the overall finding is unfavorable, having the truth out in the open is always better than attempting to hide it.

"The truth is always the path of least energy." (*)

Shortly thereafter, engineers become paranoid at the thought of standing in front of a jury of grandparents and explaining why their "bad day" caused someone's injury or loss. Those engineers stop producing altogether for a while.

Once an engineer gains confidence that his employer won't throw him under the bus, however, something cool happens: he starts writing code (or designing circuits, etc.) that he WILL stand behind. And in an even more ideal world, he'll start openly and constructively challenging his peers to do likewise so that they can stand behind the system as a whole.

Working while litigation is underway DEFINITELY has a negative impact on a developer's productivity. But afterwards, if the situation is handled well, it can improve the situation by at least the same amount. Don't waste the opportunity.

(*) - I just made that up, but I think that something similar has been said before.

|

It's a little scary to think of litigation being a force for improving software engineering. But this is an interesting point. Then there's the law of unintended consequences: I have seen engineering departments strip documentation from the code, because that will have to be produced during the discovery phase of a case. Sometimes engineers aren't allowed to maintain engineering notebooks for the same reason.

Mat Brennion wrote:

|

I think Gary Lynch is being a little harsh:

“But with the passage of time, I have been finding fewer and fewer people who understand me when I try to explain this standard. They seem to be trained to believe you have to ship product with known defects and deal with them only when somebody complains. I fear when you and I are gone, no one will remember that it used to be different.”

Personally I’m amazed at the millions of lines of code on my phone that just work, pretty much perfectly, pretty much of the time. Just think about the code involved in rendering a font on the screen – there’s font libraries, graphic drivers, windowing systems, right down to trig functions for working out the curves. The question should be why are we embedded engineers so slow? One answer is that often embedded / safety critical software must be shown to be correct before it is deployed, as it’s hard or impossible to update it in service. This need will always remain, but is dwarfed by the quantity of software for which that constraint is relaxed. |

Mat makes a good point. For a very long time I've been focused on improving the quality of embedded systems, so tend to see the problems rather than the successes. Fact is, we have built an astonishing world that works incredibly well. Yes, a few times a year my iPhone goes wonkers and has to be rebooted. But most of the time it functions perfectly. The roads are filled with cars whose systems compute correctly almost all of the time.

We have to continually improve, of course, but embedded engineers deserve a salute for the incredible products that enrich so many lives. Here are the very first paragraphs of my first book, published in 1992:

|

These are the days of miracles and wonders - Paul Simon

I just can't get no respect - Rodney Dangerfield

How many of us designing microprocessor based products can explain our jobs at a cocktail party? To the average consumer the word "computer" conjures up images of mainframes or PCs. He blithely disregards or is perhaps unaware of the tremendous number of little processors that are such an important part of everyone's daily lives. He wakes up to the sound of a computer-generated alarm, eats a breakfast prepared with a digital microwave, and drives to work in a car with a virtual dashboard. Perhaps a bit fearful of new technology, he'll tell anyone who cares to listen that a pencil is just fine for writing, thank you; computers are just too complicated.

So many products that we take for granted simply couldn't exist without an embedded computer! Thousands owe their lives to sophisticated biomedical instruments like CAT scanners, implanted heart monitors and sonograms. Ships as well as pleasure vessels navigate by LORANs and SATNAVs that tortuously iterate non-linear position equations. State of the art DSP chips in traffic radar detectors attempt to thwart the police, playing a high tech cat and mouse game with the computer in the authority's radar gun. Compact Disk players give perfect sound reproduction using high integration devices that provide error correction and accurate track seeking.

It seems somehow appropriate that, like molecules and bacteria, we disregard computers in our day to day lives. The microprocessor has become part of the underlying fabric of late twentieth century civilization. Our lives are being subtly changed by the incessant information processing that surrounds us.

Microprocessors offer far more than minor conveniences like TV remote control. One ultimately crucial application is reduced consumption of limited natural resources. Smart furnaces use solar input and varying user demands to efficiently maintain comfortable temperatures. Think of it - a fleck of silicon saving mountains of coal! Inexpensive programmable sprinklers make off-peak water use convenient, reducing consumption by turning the faucet off even when forgetful humans are occupied elsewhere. Most industrial processes rely on some sort of computer control to optimize energy use and to meet EPA discharge restrictions. Electric motors are estimated to use some 50% of all electricity produced - cheap motor controllers that net even tiny efficiency improvements can yield huge power savings. Short of whole new technologies that don't yet exist, smart, computationally intense use of resources may offer us the biggest near-term improvements in the environment.

|

|

| On Stacks |

Even today we have only the most limited ways to troubleshoot a blown stack, or to estimate how much to allocate. Million-line programs with explosive numbers of automatic variables and intricate inheritance schemes chew through stack space while masking all of the important usage details. Add asynchronous nested interrupt processing and we're faced with a huge unknown: how big should the stack or stacks be? Most developers use the scientific method to compute anticipated stack requirements.

We take a wild guess.

Get it wrong and you're either wasting RAM or inserting a deadly bug that might not surface for years when the perfect storm of interrupts and deep programmatic stack usage coincide.

I've read more than a few academic papers outlining alternative approaches that typically analyze the compiler's assembly listings. But without complete knowledge of the runtime library's requirements, and that of other bits of add-in software like communications packages, these ideas generally collapse.

However, there are a few products today that can predict stack needs. An example is IAR's toolchain that does stack usage prediction. It does so for each call graph root - a function that is not called by any other code, like the program entry point. Interestingly, interrupts are also roots, and the tool predicts stack needs for each of those.

AdaCore's GNATstack takes Ada or C code and generates an assessment of required stack space. It, too, produces a separate assessment of interrupt needs.

With both tools it's left to the developer to decide, or perhaps take a wild guess at, how many different interrupts could be nested in the worst case. They also can't track stack space consumed by function pointers.
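To make that nesting decision concrete, here is a minimal sketch of how the per-root numbers from such a tool might be combined into one budget for a single shared stack. The function name `worst_case_stack`, the `MAX_ISRS` limit, and the rule that each interrupt appears at most once in a nesting chain are all assumptions made for illustration; they are not taken from either tool.

```c
#include <stddef.h>
#include <stdlib.h>
#include <string.h>

#define MAX_ISRS 32   /* arbitrary cap for this sketch */

/* qsort comparator: descending order of stack usage. */
static int cmp_desc(const void *a, const void *b)
{
    size_t x = *(const size_t *)a;
    size_t y = *(const size_t *)b;
    return (x < y) - (x > y);
}

/*
 * Hypothetical helper: worst-case bytes for one shared stack.
 *   task_bytes  - deepest usage reported for the non-interrupt root
 *   isr_bytes   - usage reported for each interrupt root
 *   isr_count   - number of interrupt roots (at most MAX_ISRS)
 *   max_nesting - the developer's estimate of how many can nest at once
 * Assumes each interrupt appears at most once in a nesting chain, and
 * pessimistically assumes the hungriest interrupts are the ones that nest.
 * Returns 0 if isr_count exceeds MAX_ISRS.
 */
size_t worst_case_stack(size_t task_bytes, const size_t *isr_bytes,
                        size_t isr_count, size_t max_nesting)
{
    size_t sorted[MAX_ISRS];
    size_t total = task_bytes;

    if (isr_count > MAX_ISRS)
        return 0;
    if (isr_count > 0) {
        memcpy(sorted, isr_bytes, isr_count * sizeof sorted[0]);
        qsort(sorted, isr_count, sizeof sorted[0], cmp_desc);
    }
    if (max_nesting > isr_count)
        max_nesting = isr_count;
    for (size_t i = 0; i < max_nesting; i++)
        total += sorted[i];
    return total;
}
```

With a 512-byte task root and interrupt roots of 64, 200 and 120 bytes, a nesting depth of two gives 512 + 200 + 120 = 832 bytes. The answer is only as good as the nesting estimate, and it says nothing about calls made through function pointers.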

They're all ex post facto, of course - you don't know how much stack is needed till the code is complete. (A wise engineer will monitor the numbers throughout development.) In a limited RAM situation one could discover very late in the project that the system can't be built without a redesign of the hardware.

Absent these tools we can monitor stack consumption using a variety of methods, like filling a newly-allocated stack with a pattern (I like 0xDEAD) and keeping an eye on how much of that gets overwritten. Some RTOSes include provisions to log high-water marks. Micrium has a nice paper about this, as does Express Logic.
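The fill-pattern approach can be sketched in a few lines of C. This is an illustration under stated assumptions rather than any vendor's implementation: the names `stack_paint` and `stack_high_water` are made up, the stack is treated as an array of 16-bit words, and it is assumed to grow downward from the top of the array.

```c
#include <stddef.h>
#include <stdint.h>

#define STACK_FILL 0xDEADu   /* the fill pattern mentioned above */

/* Paint the whole stack region with the pattern before it is first used. */
void stack_paint(uint16_t *stack, size_t words)
{
    for (size_t i = 0; i < words; i++)
        stack[i] = STACK_FILL;
}

/*
 * Return the high-water mark in words. Assumes a descending stack:
 * stack[words - 1] is consumed first and stack[0] last. Scan up from the
 * low end until the first word that no longer holds the pattern.
 */
size_t stack_high_water(const uint16_t *stack, size_t words)
{
    size_t untouched = 0;

    while (untouched < words && stack[untouched] == STACK_FILL)
        untouched++;
    return words - untouched;
}
```

Call `stack_paint` once at startup (or at task creation) and poll `stack_high_water` from a low-priority task or a debugger. The result is a lower bound: it reflects only the paths exercised so far, and a stack word that happens to hold 0xDEAD at the boundary is counted as untouched.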

Some years ago Ben Jackson suggested:

|

One trick I used last year is to use GCC's '-finstrument-functions' flag which brackets every compiled function with enter/exit function calls. You can write an enter function which tests the amount of stack space left. It's not ideal, but it can save a lot of time. After I did this for the kernel to track down a problem, one of the application engineers applied the idea to a thread-intensive application and found several potential problems.

Another trick is to look for functions where people have mistakenly put large structures or arrays on the stack. Use a tool like 'objdump' to disassemble your program and then a one-line awk or perl script to find the first 'sub...esp' in each function and sort them by size. If you find an irq with 'struct huge', it's just waiting to blow your stack. |
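Ben's first trick might look something like the sketch below. The hook names `__cyg_profile_func_enter` and `__cyg_profile_func_exit` and the `no_instrument_function` attribute are what GCC defines for `-finstrument-functions`; everything else (`stack_check_init`, `stack_min_headroom`, `stack_overflow_hits`, and the idea of passing in the lowest legal stack address) is hypothetical scaffolding. It assumes a descending stack and uses the current frame address as a stand-in for the stack pointer.

```c
#include <stddef.h>
#include <stdint.h>

/* Keep the checker itself out of the instrumentation. */
#define NO_INSTR __attribute__((no_instrument_function))

/* Hypothetical bookkeeping. On a real target the limit would come from
 * the linker script or the RTOS's per-task data. */
static uintptr_t stack_limit;                 /* lowest legal stack address */
static size_t    min_headroom = (size_t)-1;   /* smallest margin seen so far */
static unsigned  overflow_hits;               /* entries at or below the limit */

NO_INSTR void stack_check_init(uintptr_t lowest_address)
{
    stack_limit   = lowest_address;
    min_headroom  = (size_t)-1;
    overflow_hits = 0;
}

NO_INSTR size_t   stack_min_headroom(void)  { return min_headroom; }
NO_INSTR unsigned stack_overflow_hits(void) { return overflow_hits; }

/* GCC calls this on entry to every function compiled with
 * -finstrument-functions. */
NO_INSTR void __cyg_profile_func_enter(void *this_fn, void *call_site)
{
    uintptr_t sp = (uintptr_t)__builtin_frame_address(0);

    (void)this_fn;
    (void)call_site;
    if (sp <= stack_limit) {          /* already at or past the limit */
        overflow_hits++;
        min_headroom = 0;
        return;
    }
    if ((size_t)(sp - stack_limit) < min_headroom)
        min_headroom = (size_t)(sp - stack_limit);
}

/* The exit hook must exist, but there is nothing to check on the way out. */
NO_INSTR void __cyg_profile_func_exit(void *this_fn, void *call_site)
{
    (void)this_fn;
    (void)call_site;
}
```

Build the code under test with `-finstrument-functions`, call `stack_check_init` early, then read `stack_min_headroom()` after a soak test. In a multi-threaded system the limit would have to be looked up per thread, and the hooks add a call to every function entry, so this is a debugging aid rather than something to ship.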

But these are all ultimately reactive. We take a stack-size guess and then start debugging. When something goes wrong, change the source code to increase the stack's size. It violates my sense of elegance, paralleling as it does the futile notion of testing quality into a product. It's better to insert correctness from the outset. But there seems to be no hint of an approach that predicts stack size early in a project.

The Barr Group will have a free webinar about figuring stack size in September. I plan to listen in. |

| Jobs! |

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intent of this newsletter.

Please keep it to 100 words. There is no charge for a job ad.

|

| Joke For The Week |

Note: These jokes are archived at www.ganssle.com/jokes.htm.

Two items this issue. First, Paul Carpenter sent me a link to a site of developer humor which could be a great way to lose many hours.

And second, Scott Nowell sent this bit of amusing irony:

|

I could argue with the accuracy of your second sentence in this piece "Alas, only a tiny fraction of us use any standard religiously."

The sad truth is too many engineers do use standards religiously, i.e., once or twice a year. |

|

| Advertise With Us |

Advertise in The Embedded Muse! Over 27,000 embedded developers get this twice-monthly publication. |

| About The Embedded Muse |

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com.

The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster.

|