
||||||||

You may redistribute this newsletter for noncommercial purposes. For commercial use contact jack@ganssle.com. |

||||||||


| Editor's Notes | ||||||||

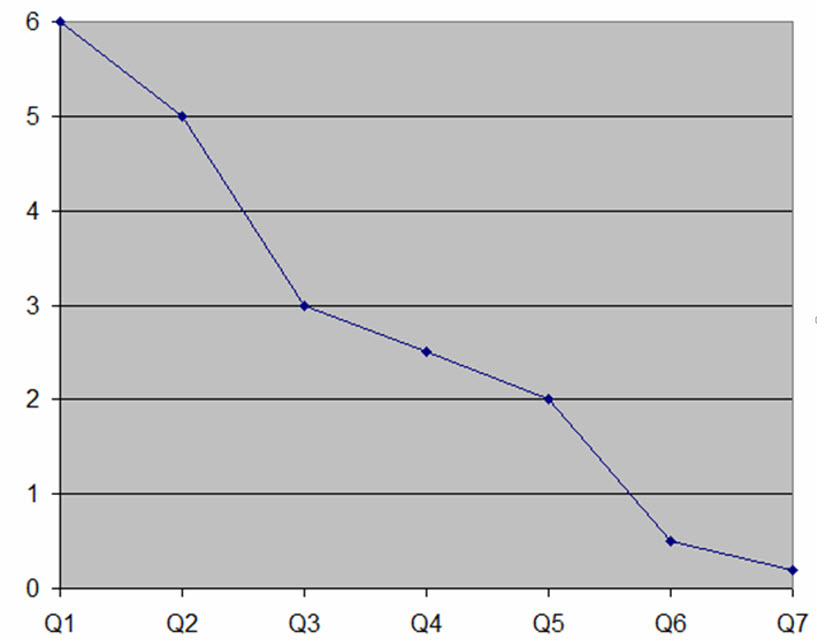

The average firmware team ships about 10 bugs per thousand lines of code (KLOC). That's unacceptable, especially as program sizes skyrocket. We can - and must - do better. This graph shows data from one of my clients that was able to improve its defect rate by an order of magnitude (the vertical axis is bugs/KLOC) over seven quarters using techniques from my Better Firmware Faster seminar. The seminar is all about giving your team the tools they need to operate at a measurably world-class level, producing code with far fewer bugs in less time. It's fast-paced, fun, and uniquely covers the issues faced by embedded developers. Information here shows how your team can benefit by having this seminar presented at your facility.

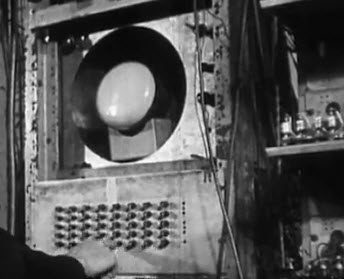

A Williams tube in the Manchester Baby

The Manchester Baby was the world's first stored-program computer, yet it wasn't designed to be a computer; it was a testbed for the Williams tube, which was an early (1948) form of random-access memory that predated even core memory. I stumbled across a video which describes the machine and includes what appears to be footage of the original unit plus the 1998 replica. It's well worth the 8 minutes if you're a fan of the history of this industry.

I'm now on Twitter: follow @jack_ganssle. |

||||||||

| Quotes and Thoughts | ||||||||

"Software is too important to be left to programmers." - Meilir Page-Jones. (See here for my comments about this quote). |

||||||||

| Tools and Tips | ||||||||

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

Are you using a commercial or open-source graphics package to add a GUI to your embedded system? If so, which one, and how do you like it? I'll post replies in the next Muse.

Some developers are embracing functional programming. Alas, most references on that subject drown the reader in complex ideas that mask the real issues.

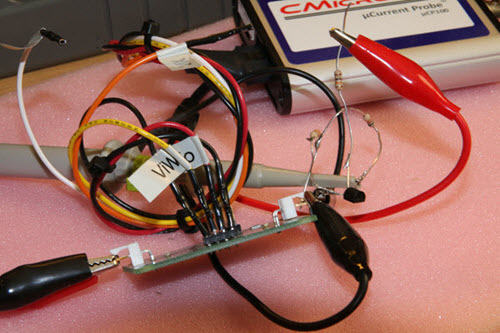

The uCP100 places either an internal sense resistor or your own in series with the power supply and drives an oscilloscope to show how a system's current drain changes over time -- for instance, in going in and out of sleep modes. The uCP100 measures currents from 5 nA to 100 mA; its brother, the uPD120, covers 50 nA to 800 mA.

Some readers have complained that these sorts of units are little more than sense resistors with an amplifier, but the reality is that it's hard to get this right. Measuring low currents means using either a big resistor or a lot of gain, but op amps struggle to get decent bandwidth as the gain increases. When using a scope you need even more gain, as it's hard to see millivolt-level signals.

Unlike most other devices targeting this market, the uCP100 can measure over a 20 volt range. If your system runs from solar you could see voltages approaching this. What's really unusual is that the output to the scope can swing from 0 to 40 volts. This means at low currents you can zoom in easily yet still catch high-current events.

The scope connects via a standard BNC connection. On the input side, one wire goes to the supply and one of three wires is used to connect to the target system's power input. One is used if you're providing your own sense resistor. Another is for "precision" mode, which goes from 5 nA to 100 µA; the third is for "wide-range" mode, covering 5 µA to 100 mA. DIP switches select zoom in, normal, or zoom out gains. What this means is you can measure current to high levels of precision over narrower dynamic ranges, or with a bit less precision over up to a 20,000:1 range.

A critical parameter (which is often not specified) is the sense resistor voltage drop, which in the uCP100 is 7 µV/nA in precision mode and 7 µV/µA in wide-range mode.

An optional break-out board eases connections. It goes between the supply and the uCP100. Here's the breakout board in the foreground and the unit itself behind it:

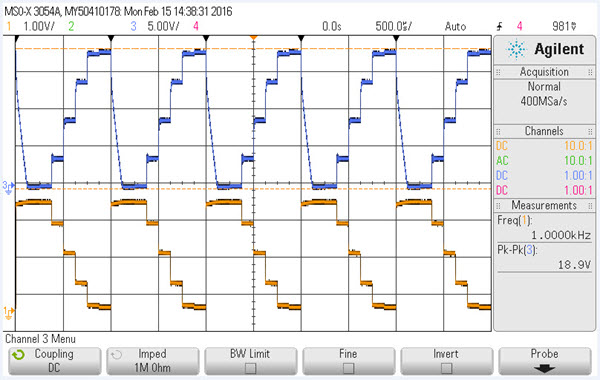

See the transistor and resistors? To run a realistic test I drove the base of the transistor from an arbitrary waveform generator (a Siglent SDG 2042X). One channel (yellow in the following picture) of a scope is attached to the collector of the transistor; the voltage there falls as the current through the transistor rises. The other channel goes to the output of the uCP100:

A couple of things to notice. First, the unit is responsive: the scope is set to 500 µs/div. Second, the peak-to-peak swing is a whopping 18.9 volts! That allows for a lot of resolution.

It's a nicely-made piece of equipment and comes with a very complete kit, including grabbers to connect to the power pins and all connectors. I like it, and feel it's a worthwhile addition to any lab that needs accurate ways to measure wide dynamic ranges of current. At $495 I feel it fulfills its value proposition... but some may balk at that price. That is a tenth of the price of Keysight's nifty N2820A current probe. |

||||||||

| Freebies and Discounts | ||||||||

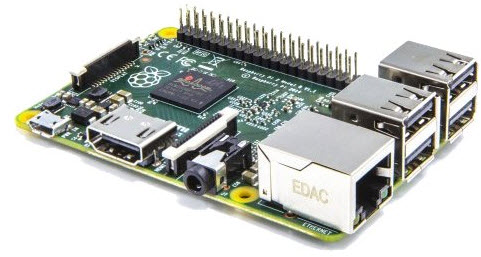

This month I'm giving away a Raspberry Pi 2 Model B. The contest will close at the end of March, 2016. It's just a matter of filling out your email address. As always, that will be used only for the giveaway, and nothing else. Enter via this link. |

||||||||

| Software is Too Important to be Left to Programmers | ||||||||

I'm not sure what the context for the quote (above) from Meilir Page-Jones was, but it touches on one of my sore points: we're not programmers. Software engineering is a very young profession. There's much we still don't know and some that we're inventing even now. We do have a very important body of knowledge to draw upon (e.g., this). There are known practices that yield good results; practices, alas, too few of us employ. I truly believe that as time goes on most of us will adopt a more formal, engineering, approach to building software, and we'll use metrics to guide our work.

There is plenty of poorly-crafted code out there. But let's do remember that the world does run on software today, and that code is by and large doing a pretty darn good job of it. When I was a young engineer the average person had never seen a computer in person and had little to no first-hand experience with software. Now software (and especially firmware) mediates many aspects of everyone's lives.

Can you imagine building systems out of transistors or ICs without software? What would a device that implements, say, Excel, built without software, look like and cost? The complexity would be staggering, yet the magic of computer software means one can run a spreadsheet on a ten ounce tablet, almost for free. Despite the problems, software has been an incredible success story. |

||||||||

| On Craftsmanship | ||||||||

In Muse 300 I wrote about craftsmanship in software. Readers had much to say. Tim Wescott wrote:

Tim's point is valid, and one that's debated pretty fiercely. Why, for instance, has avionics been such a software success story? Is it because the code is done to a rigorous DO-178 standard? Or is it because of an ingrained safety culture in that industry? There's little data to go on.

From Harlan Rosenthal:

Jim Brooks added:

Vlad Z wrote:

|

||||||||

| Jobs! | ||||||||

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intents of this newsletter. Please keep it to 100 words. There is no charge for a job ad. |

||||||||

| Joke For The Week | ||||||||

Note: These jokes are archived at www.ganssle.com/jokes.htm.

In Muse 300 I made a comment about huge levels of indirection using C. Tim Wescott sent this in response. Is it a joke or a terror alert?

/* We don't need no stinkin' comments */

#include <stdio.h>

int main(void)

{

int ralph = 1;

int * bob = &ralph;

int ** sue = &bob;

int *** mary = &sue;

int **** tom = &mary;

int ***** gary = &tom;

int ****** chris = &gary;

int ******* frank = &chris;

int ******** alex = &frank;

int ********* burnie = &alex;

int ********** hillary = &burnie;

int *********** miriam = &hillary;

int ************ neil = &miriam;

int ************* barney = &neil;

int ************** foo = &barney;

int *************** fighter = &foo;

int **************** brittany = &fighter;

int ***************** trevor = &brittany;

int ****************** newt = &trevor;

int ******************* robin = &newt;

int ******************** i = &robin;

******************** i = 0;

printf("Well, I %s know what it means\n", ralph ? "guess I don't" : "do too");

} |

||||||||

| Advertise With Us | ||||||||

Advertise in The Embedded Muse! Over 25,000 embedded developers get this twice-monthly publication.

||||||||

| About The Embedded Muse | ||||||||

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster. |