||||

You may redistribute this newsletter for noncommercial purposes. For commercial use contact jack@ganssle.com. |

||||

| Contents | ||||

| Editor's Notes | ||||

Best in class teams deliver embedded products with 0.1 bugs per thousand lines of code (which is two orders of magnitude lower than most firmware groups). They consistently beat the schedule, without grueling overtime. Does that sound like your team? If not, what action are you taking to improve your team's results? Hoping for things to get better won't change anything. "Trying harder" never works (as Harry Roberts documented in Quality Is Personal). Did you know it IS possible to create accurate schedules? Or that most projects consume 50% of the development time in debug and test, and that it’s not hard to slash that number drastically? Or that we know how to manage the quantitative relationship between complexity and bugs? Learn this and far more at my Better Firmware Faster class, presented at your facility. See https://www.ganssle.com/onsite.htm. |

||||

| Embedded Video | ||||

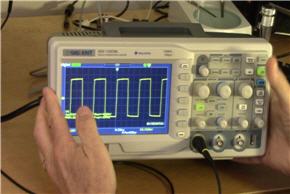

The nice folks at Siglent sent me one of their SDS1102CML 100 MHz bench scopes for evaluation. This $379 unit offers a ton of value for the price. I have made two short videos about the unit. Part 1 is here, and part 2 here. I plan to do a follow-up review in a year or so to see how the unit holds up.

|

||||

| Quotes and Thoughts | ||||

How did you go bankrupt? Slowly at first, then all of a sudden. - Anonymous

How does a large software project get to be one year late? One day at a time! - Fred Brooks |

||||

| Tools and Tips | ||||

Please submit clever ideas or thoughts about tools, techniques and resources you love or hate. Here are the tool reviews submitted in the past.

I mentioned the low-cost Siglent digital bench oscilloscope above. Other vendors have units at similar price/feature points. Do you have comments about any of these units?

I have a serious addiction to the Unix command shell and use it for all sorts of scripting needs. Cygwin is one option to move those capabilities to Windows. Do check out the free UnxUtils tools, which are native Windows applications and are very fast. There are about 120 tools (like grep, awk, sed, tr) provided.

Another option for creating complex scripts under Windows is the free AutoHotKey. It sure beats writing C code if you're doing a similar operation to a mass of text files, for instance. I have found that it can be tedious to get the scripts right. Here are the comments from one of my AutoHotKey scripts that give a flavor for what it can do. The entire script, with comments, is 222 lines:

    ; Operation: This converts a directory full of files.
    ; - Each file is opened in Word
    ; - The title is extracted and stored in variable Title
    ; - If "Poll question" appears it, and all text till the next pair of CRs is found, is deleted
    ; - The title in the Word file gets <h1> and </h1> tags
    ; - Every pair of Enters is replaced with a <p> tag
    ; - The file's creation date is put at the end of the file
    ; - The entire article is put on the clipboard
    ; - Word is closed
    ; - Ultraedit is loaded and the article pasted into it.
    ; - Ultraedit then reformats it to remove non-WWW characters
    ; - The article is then copied to the template and Ultraedit is closed
    ; - Ultraedit is loaded with the "template.htm" file
    ; - The Title tag is found and is set to the value in variable Title
    ; - The string showing the insertion point is found, and the clipboard pasted in
    ; - Ultraedit then saves it to a file with the same name as the Word file, but an extension of .html,
    ;   all the spaces removed, and all lower-case.

|
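For comparison, here is roughly what just the last step in those comments (deriving the output file name) might look like in C. This is a sketch only and is not part of the AutoHotKey script: `make_html_name` is a hypothetical helper, and it assumes a bare file name with no directory component.

```c
#include <ctype.h>
#include <stddef.h>
#include <string.h>

/*
 * Hypothetical helper: builds the output name from a Word file name by
 * dropping the extension, removing spaces, lower-casing, and appending
 * ".html". Returns 0 on success, -1 if dst is too small.
 */
int make_html_name(const char *src, char *dst, size_t dst_size)
{
    const char *dot = strrchr(src, '.');              /* last '.' starts the extension */
    size_t stem_len = dot ? (size_t)(dot - src) : strlen(src);
    size_t out = 0;
    size_t i;

    for (i = 0; i < stem_len; i++) {
        unsigned char c = (unsigned char)src[i];
        if (c == ' ') {
            continue;                                 /* all the spaces removed */
        }
        if (out + 1 >= dst_size) {
            return -1;
        }
        dst[out++] = (char)tolower(c);                /* all lower-case */
    }
    if (out + sizeof ".html" > dst_size) {            /* sizeof counts the NUL */
        return -1;
    }
    memcpy(dst + out, ".html", sizeof ".html");
    return 0;
}
```

Given "My Article.doc" and a large enough buffer, this produces "myarticle.html". Everything else in the list (driving Word and Ultraedit, the clipboard, the template) is where a scripting tool earns its keep.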

||||

| Another Argument for MISRA | ||||

Earlier this year a bug was discovered in Apple's SSL code. Can you spot it?

The second goto from the bottom should not have been there. In this small snippet the double gotos do sort of "jump" out (pardon the pun), but this is buried in a 2000-line module.

MISRA rule 15.6 says "The body of an iteration-statement or a selection-statement shall be a compound statement." Had that rule been followed, the code would have looked something like:

... which I think would have made the error much more obvious.

The MISRA standards (available here for £15 each in .PDF form) define rules for the safer use of C and C++. Few people like all of the rules; I have quibbles with a few. In school a 90% is an A, and that often applies to life as well. The MISRA rules easily score 90% and are recommended. (And, yes, I know that in software even a 99% is an F! Roughly speaking, a million lines of code with 99% correctness means 10,000 errors.) |
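To make the duplicated-goto pattern described above concrete, here is a simplified sketch. It is not Apple's code: `update_hash`, `check_signature` and the `verify_*` functions are hypothetical stand-ins, and the signature check is rigged to fail so the effect of the stray line is visible in the return value.

```c
/* Hypothetical stand-ins for the real checks; 0 means success. */
static int update_hash(void)     { return 0; }
static int check_signature(void) { return -1; }  /* pretend the signature is bad */

/* Unbraced: the indentation hides that the second goto is unconditional. */
int verify_unbraced(void)
{
    int err;

    if ((err = update_hash()) != 0)
        goto fail;
        goto fail;                    /* stray duplicate: always taken */
    if ((err = check_signature()) != 0)
        goto fail;

fail:
    return err;                       /* 0 here, so the bad signature is accepted */
}

/* Same logic with compound statements, in the spirit of rule 15.6. */
int verify_braced(void)
{
    int err;

    if ((err = update_hash()) != 0) {
        goto fail;
    }
    goto fail;                        /* now visibly outside any condition */
    if ((err = check_signature()) != 0) {
        goto fail;
    }

fail:
    return err;
}

/* With the stray line deleted, the signature check finally runs. */
int verify_fixed(void)
{
    int err;

    if ((err = update_hash()) != 0) {
        goto fail;
    }
    if ((err = check_signature()) != 0) {
        goto fail;
    }

fail:
    return err;
}
```

Both `verify_unbraced()` and `verify_braced()` return 0 even though `check_signature()` reports a failure; only `verify_fixed()` returns the error. The braces do not remove the bug, but they leave the extra goto standing alone where a reviewer is far more likely to question it.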

|||

| The Value of IP | ||||

Assets in Microsoft's 2013 annual report

As the old Wendy's ad exclaimed, where's the beef? This is the assets section of Microsoft's 2013 annual report. Cash, equities, equipment and other tangible and intangible ("Goodwill") assets are listed. But doesn't their IP have some value?

Isn't it astonishing that accountants still view software and other forms of intellectual property as valueless? How much is Windows worth to Microsoft? If every physical asset disappeared in some catastrophe, they'd survive. But if that same fate befell their IP, if Windows' source code disappeared, they'd likely fail. Windows is worth billions, maybe hundreds of billions to that company, yet no accountant recognizes its value. They're too busy toting up the cost of the stationery.

At most companies inventory lives behind a locked fence. A stern-faced clerk makes you sign for every part. Want a tenth-of-a-cent resistor? Fill out the form. In duplicate. But inventory is itemized on the balance sheet, so accounting ensures that asset is as protected as the business's cash balance.

Yet IP often lives on uncontrolled servers, vulnerable to angry employees and acts of God. Or simple typos. Type in rm -r and a lot of stuff goes up in smoke.

If the balance sheet listed IP, all source would live behind some virtual locked fence, guarded by a dour-faced clerk or unfriendly bit of software. And that might indeed be a good thing. Over half of us don't make use of even the most minimal sort of configuration management, so we routinely experience expensive problems. The NEAR spacecraft failure was partially attributed to a CM issue -- they launched the wrong code! Two versions of the 1.11 flight code existed; one was tested, and the other was used. The FAA announced in the late 90s they lost all of the code to control flights between Chicago O'Hare and the regional airports. No one used a version control system and an angry employee purposely deleted it all.

If CPAs tracked IP they'd quickly understand the ethereal nature of software and other designs, and they'd quickly learn how a single transient can wipe out most of a company's value. Petty pilferage of inventory would drop to a side issue as they designed robust backup strategies. And that might be just what we need.

Uttering the words "it's just a software change" would become a firing offense. Mention a quick upgrade and the accountants would gasp "you want to do what? Do you have any idea of the value of that asset you plan to desecrate?"

Track the real value of software and the accountants will complain bitterly to upper management when impossible schedules threaten to wreak havoc with the code. Maybe -- just maybe -- estimation would become a real process respected by everyone. After all, no CFO will permit management to raid cash coffers unless there's a demonstrated need. They require a disciplined cash management strategy, one recognized by the SEC and their own accounting board. Track the value of IP, and the only companies abusing their code will be the Enrons, social outcasts doomed to fail.

I have worked with three companies in the last couple of years whose entire engineering departments were consumed by fire. Two were fine; they valued their IP carefully (though not on the balance sheet), and kept extensive off-site backups. One didn't, and the company folded as a result.

Version control is the first element in protecting IP, as the VCS stores the code on a server backed up regularly by IT (hopefully). Engineers are generally not good at the routine of daily rollouts. On the hardware side, developers need to be thinking about VCS as well to protect their IP -- the schematics, VHDL, Verilog and all that can be as complex as the code.

(I have discussed valuing IP on balance sheets with accountants and their reaction is that it's a hard thing to do. No doubt that's true. But it's even harder to create the stuff.)

Most popular version control systems in the embedded space in 2013 |

||||

| More About Computing WCET | ||||

A lot of people wrote about computing and/or managing worst-case execution time (WCET). Here are a couple of interesting comments. Ray Keefe, of the brilliantly-named Successful Endeavours Pty Ltd, wrote:

Nick Parimore had this to say:

|

||||

| Jobs! | ||||

Let me know if you’re hiring embedded engineers. No recruiters please, and I reserve the right to edit ads to fit the format and intents of this newsletter. Please keep it to 100 words. |

||||

| Joke For The Week | ||||

Note: These jokes are archived at www.ganssle.com/jokes.htm. John Black sent this link about a smoking power supply. |

||||

| Advertise With Us | ||||

Advertise in The Embedded Muse! Over 23,000 embedded developers get this twice-monthly publication. |

||||

| About The Embedded Muse | ||||

The Embedded Muse is Jack Ganssle's newsletter. Send complaints, comments, and contributions to me at jack@ganssle.com. The Embedded Muse is supported by The Ganssle Group, whose mission is to help embedded folks get better products to market faster. |