The Middle Way

It is possible to create accurate schedules, here's how.

Published in Embedded Systems Programming

By Jack Ganssle

1986 estimates pegged F-22 development costs at $12.6 billion. By 2001, with the work nearing completion, the figure climbed to $28.7 billion. The 1986 schedule appraisal of 9.4 years soared to 19.2 years.

A rule of thumb suggests that smart engineers double their estimates when creating schedules. Had the DoD hired a grizzled old embedded systems developer back in 1986 to apply this 2x factor the results would have been within 12% on cost and 2% on schedule.

Interestingly, most developers spend about 55% of their time on a project, closely mirroring our intuitive tendency to double estimates.

Traditional Big-Bang delivery separates a project into a number of sequentially-performed steps: requirements analysis, architectural design, detailed design, coding, debugging, test, and, with enormous luck, delivery to the anxious customer. But notice there's no explicit scheduling task. Most of us realize that it's dishonest to even attempt an estimate till the detailed design is complete, as that's the first point at which the magnitude of the project is really clear. Realistically, it may take months to get to this point.

In the real world, though, the boss wants an accurate schedule by Friday.

So we diddle triangles in Microsoft Project, trying to come up with something that seems vaguely believable, though no one involved in the project actually credits any of these estimates with truth. Our best hope is that the schedule doesn't collapse till late into the project, deferring the day of reckoning for as long as possible.

In the rare (unheard of?) case where the team does indeed get months to create the complete design before scheduling, they're forced to solve a tough equation: schedule= effort/productivity. Simple algebra, indeed, yet usually quite insolvable. How many know their productivity, measured in lines of code per hour or any other metric?

Alternatively, the boss benevolently saves us the trouble of creating a schedule by defining the end date himself. Again there's a mad scramble to move triangles around in Project to, well, not to create an accurate schedule, but to make one that's somewhat believable. Until it inevitably falls apart, again hopefully not till some time in the distant future.

Management is quite insane when using either of these two methods. Yet they do need a reasonably accurate schedule to coordinate other business activities. When should ads start running for the new product? At what point should hiring start for the new production line? When and how will the company need to tap capital markets or draw down the line of credit? Is this product even worth the engineering effort required?

Some in the Agile software community simply demand that the head honcho toughens up and accepts the fact that great software bakes at its own rate. It'll be done when it's done. But the boss has a legitimate need for an accurate schedule early. And we legitimately cannot provide one without investing months in analysis and design. There's a fundamental disconnect between management's needs and our ability to provide.

There is a middle way.

Do some architectural design, bring a group of experts together, have them estimate individually, and then use a defined process to make the estimates converge to a common meeting point. The technique is called Wideband Delphi, and can be used to estimate nearly anything, from software schedules to the probability of a spacecraft failure. When originally developed by the Rand Corporation in the 40s it was used to forecast technology trends, something perhaps too ambitious due to the difficulty of anticipating revolutionary inventions like the transistor and laser. Barry Boehm extended the method in the 70s.

At the recent Boston Embedded Systems Conference several consultants asked how they can manage to survive when accepting fixed priced contracts. The answer: use Wideband Delphi to understand costs. How do we manage risks from switching CPUs or language? Use Wideband Delphi. When there's no historical cost data available to aid estimation by analogy, use Wideband Delphi.

Wideband Delphi

The Wideband Delphi (WD) method recognizes that the judgment of experts can be surprisingly accurate. But individuals often suffer from unpredictable biases, and groups may exhibit "follow the leader" behavior. WD shortcuts both problems.

WD typically uses 3 to 5 "experts" - experienced developers, people who understand the application domain and who will be building the system once the project starts. One of these people acts as a moderator to run the meetings and handle resulting paperwork.

The process starts by accumulating the specifications documents. One informal survey at the Embedded Systems Conference a couple of years ago suggested that 46% of us get no specs at all, so at the very least develop a list of features that the marketing droids are promising to customers.

More of us should use features as the basis for generating requirement documents. Features are, after all, the observable behavior of the system. They are the only thing the customer - the most important person on the project, he who ultimately pays our salaries - sees.

Consider converting features to use cases, which are a a system. The description is written from the point of view of a user who has just told the system to do something in particular, and is written in a common language (English) that both the user and the programmer understand. A use case captures the visible sequence of events that a system goes through in response to a single user stimulus. A visible event is one the user can see. Use cases do not describe hidden behavior at all.

While there are lots to like about using UML, it is a complex language that our customers will never get. UML is about as useful as Esperanto when discussing a system with non-techies. Use cases grease the conversation.

Here's an example for the action of a single button on an instrument:

- Description: Describes behavior of the "cal" button

- Actors: User

- Preconditions: System on, all self-tests OK, standard sample inserted.

- Main Scenario: When the user presses the button the system enters the calibrate mode. All 3 displays blank. It reads the three color signals and applies constants ("calibration coefficients") to make the displayed XYZ values all 100.00. When the cal is complete, the "calibrated" light comes on and stays on.

- Alternative Scenario: If the input values are below (20, 20, 20) the wrong sample was inserted or the system is not functioning. Retry 3 times then display "-----" and exit, leaving the "calibrate" light off.

- Postconditions: The three calibration constants are stored and then all other readings outside of "cal" mode are multiplied by these.

There are hidden features as well, ones the customer will never see but that we experts realize the systems needs. These may include debouncing code, an RTOS, protocol stacks, and the like. Add these derived features to the list of system requirements.

Next select the metric you'll use for estimation. One option is lines of code. Academics fault the LOC metric and generally advocate some form of function points as a replacement. But most of us practicing engineers have no gut feel for the scale of a routine with 100 function points. Change that to 100 LOC and we have a pretty darn good idea of the size of the code.

Oddly, the literature is full of conversions between function points and LOC. On average, across all languages, one FP burns around 100 lines of code. For C++ the number is in the 50s. So the two metrics are, for engineering purposes at least, equivalent.

Estimates based on either LOC or FP suffer from one fatal flaw. What's the conversion factor to months? Hours are a better metric.

Never estimate in weeks or months. The terms are confusing. Does a week mean 40 work hours? A calendar week? How does the 55% utilization rate factor in?

The Guestimating Game

The moderator gives all requirements including both observed and derived features to each team member. A healthy company will give the team time to create at least high level designs to divide complex requirements into numerous small tasks, each of which gets estimated via the WD process.

Team members scurry off to their offices and come up with their best estimate for each item. During this process they'll undoubtedly find missing tasks or functionality, which gets added to the list and sized up.

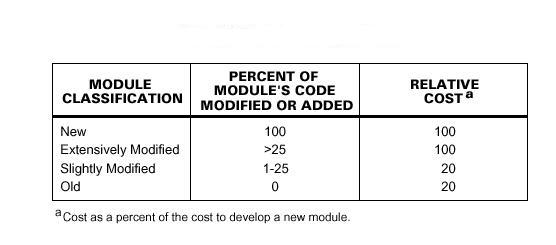

It's critical that each person log any assumptions made. Does Tom figure most of Display_result() is reused from an earlier project? That has a tremendous impact on the estimate. The figure shows typical reuse benefits: (source: ://sel.gsfc.nasa.gov/website/documents/online-doc/84-101.pdf)

Team members must ignore schedule pressure. If the boss wants it done January 15 maybe it's time to bow to his wishes and drop any pretense at scheduling. They also assume that they themselves will be doing the tasks.

Use units that make sense. There's no practical difference between 21 and 22 hours; our estimates will never be that good. Consider emulating the time base switch on an oscilloscope that has units of , 1, 2, 5 and 10.

All participants then gather in a group meeting, working on a single feature or task at a time. The moderator draws a horizontal axis on the white board representing the range of estimates for this item, and places Xs to indicate the various appraisals, keeping the source of the numbers anonymous.

Generally there's quite a range of predictions at this point; the distribution can be quite disheartening for someone not used to the Wideband Delphi approach. But here's where the method shows its strength. The experts discuss the results and the assumptions each has made. Unlike other rather furtive approaches, WD shines a 500,000 candlepower spotlight into the estimation process.

The moderator plots the results of a second round of estimates, done in the meeting and still anonymously. The results nearly always start to converge.

The process continues till four rounds have occurred, or till the estimates have converged sufficiently. Now compute the standard deviation of the final reckonings.

Each feature or task has an estimate. Sum them to create the final project schedule:

![]()

Combine all of the standard deviations:

![]()

With a normal distribution at one standard deviation you'll be correct 68% of the time. At two sigma count on 95% accuracy. So there's a 95% probability of the project finishing between Supper and Slower, defined as follows:

![]()

![]()

Conclusion

An important outcome of WD is a heavily-refined specification. The discussion and questioning of assumptions sharpens vague use cases. When coding does start, it's much clearer what's being built. Which, of course, only accelerates the schedule.

Wideband Delphi might sound like a lot of work. But the alternative is clairvoyance. You might as well hire a gypsy with her crystal ball.